Production postmortem: Deduplicating replication speed

A customer called us with an interesting issue. They have a decently large database (around 750GB or so) that they want to replicate to another node. They did all the usual things that you need to do and the process started running as expected. However… that wouldn’t make for an interesting postmortem post if everything actually went right…

Their problem was that the replication stalled midway through. No resource limits were being hit and the network traffic was high, yet the replication didn’t progress. So something was going on, but it wasn’t moving the replication forward for some reason.

We first ruled out the usual suspects (a replication issue causing a loop, a bad network, etc.) and we were left scratching our heads. Everything seemed to be fine: the replication was working, but at a rate of about 1 to 2 documents a minute. In the almost 12 hours since the replication started, only about 15GB had been replicated to the other side. That was way outside expectations; we had assumed that the whole replication wouldn’t take that long.

It turns out that the numbers we got were a lie. Not because the customer misled us, but because RavenDB does some smarts behind the scenes that end up being pretty hard on us down the road. To get the full picture, we need to understand exactly what we have in the customer’s database.

Let’s say that you store data about Players in a game. Each player has a bunch of stats, characters, etc. Whenever a player gets an achievement, the game will store a screenshot of the achievement. This isn’t the actual scenario, but it should make it clear what is going on. As players play the game, they earn achievements. The screenshots are stored as attachments inside of RavenDB. That means that for about 8 million players, we have about 72 million attachments or so.

That explains the size of the database, of course, but not why we aren’t making progress in the replication process. Digging deeper, it turns out that most of the achievements are common across players (naturally), and that in many cases, the screenshots that you store in RavenDB are also identical.

What happens when you store the same attachment multiple times in RavenDB? Well, there is no point in storing it twice, so RavenDB does transparent de-duplication behind the scenes and only stores the attachment’s data once. Attachments are de-duplicated based on their content, not their name or the associated document. In this scenario, completely accidentally, the customer set up an environment where they would upload a lot of attachments to RavenDB, which are then de-duplicated by RavenDB.
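To make the idea concrete, here is a minimal Python sketch of content-based de-duplication. It is not RavenDB's actual implementation: the class name, the choice of SHA-256 as the content key, and the in-memory dictionaries are all assumptions made for illustration. The only point it demonstrates is that the key is the attachment's content, so the same bytes attached to many documents are stored once.

```python
import hashlib


class DedupAttachmentStore:
    """Toy content-addressed attachment store (hypothetical, for illustration)."""

    def __init__(self):
        self.blobs = {}       # content hash -> bytes, each unique content stored once
        self.references = {}  # (document id, attachment name) -> content hash

    def put(self, doc_id, name, data):
        # The key is derived from the content only, not the name or the document.
        digest = hashlib.sha256(data).hexdigest()
        self.blobs.setdefault(digest, data)
        self.references[(doc_id, name)] = digest
        return digest

    def get(self, doc_id, name):
        return self.blobs[self.references[(doc_id, name)]]

    def stored_bytes(self):
        # What actually sits on disk after de-duplication.
        return sum(len(blob) for blob in self.blobs.values())

    def logical_bytes(self):
        # What the data would take if every attachment were stored separately.
        return sum(len(self.blobs[h]) for h in self.references.values())


store = DedupAttachmentStore()
screenshot = b"\x89PNG" + b"\x00" * 1000  # the same screenshot for every player
for player in range(100):
    store.put(f"players/{player}", "first-win.png", screenshot)

print(store.stored_bytes(), store.logical_bytes())
```

With 100 players sharing one screenshot, the store holds a single blob while still answering `get` for every player, which is the gap between on-disk size and logical size that matters later in this story.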

None of that is intentional, it just came out that way. To be honest, I’m pretty proud of that feature, and it certainly helped a lot in this scenario. Most of the disk space for this database was taken by attachments, but only a small number of the attachments are actually unique. Let’s do some math, then.

The total size of the attachments on disk is about 700GB. There are about half a million unique attachments, out of a total of 72 million attachments. That means that the average size of an attachment is about 1.4MB or so, and the total size of the attachments (without de-duplication) is over 100 TB.
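The arithmetic is easy to check. The short Python snippet below just redoes the calculation with the numbers quoted above, assuming decimal units (1 GB = 10^9 bytes) and assuming that the on-disk size is spread evenly across the unique attachments.

```python
MB = 10**6
GB = 10**9
TB = 10**12

stored_size = 700 * GB          # attachment data on disk, after de-duplication
unique_attachments = 500_000    # distinct attachment contents
total_attachments = 72_000_000  # attachment references across all documents

# Average size of one attachment, assuming sizes are roughly uniform.
average_size = stored_size / unique_attachments

# Size of the data if every reference carried its own copy.
logical_size = average_size * total_attachments

print(f"average attachment: {average_size / MB:.1f} MB")  # 1.4 MB
print(f"logical size: {logical_size / TB:.1f} TB")        # 100.8 TB
print(f"references per unique attachment: {total_attachments / unique_attachments:.0f}")  # 144
```

Each unique attachment is referenced about 144 times on average, which is where the jump from 700GB to roughly 100 TB comes from.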

I’ll repeat that: the actual size of the data is 100 TB. It is just that RavenDB was able to optimize that using de-duplication, ending up with significantly less on disk thanks to the pattern of the data that is stored in the database.

However, that applies at the node level. What happens when we have replication? Well, when we send an attachment to the other side, even if it is de-duplicated on our end, we don’t know if it is on the other side already. So we always send the attachments. In this scenario, where we have so many duplicate attachments, we end up sending way too much data to the other side. The replication process isn’t sending 750GB to the other side but 100 TB of data.

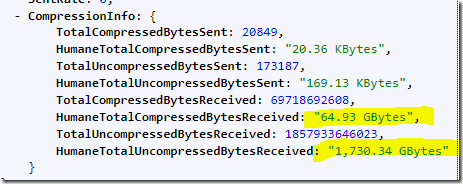

The customer was running RavenDB 5.2 at the time, so the first thing to do when we figured this out was to upgrade to RavenDB 5.3. In RavenDB 5.3 we have implemented TCP compression for internal data (replication, subscription, etc). Here are the results of this change:

In other words, we were able to compress the 1.7 TB we sent to under 65 GB. That is a nice improvement. But the situation is still not ideal.

De-duplication over the wire is a pretty tough problem. We don’t know what the state is on the other side, and the cost of asking each time can be pretty high.

Luckily, RavenDB has a relevant feature that we can lean on. RavenDB has to handle a scenario where the following sequence of events occurs (two nodes, A & B, with one-way replication happening from A to B):

- Node A: Create document – users/1

- Node B: Replication of document users/1

- Node A: Add attachment to users/1 (also modifies users/1)

- Node B: Replication of attachment for users/1 & users/1 document

- Node A: Modify users/1

- Node B: Replication of users/1 (but not the attachment, it was already sent)

- Node B: Delete users/1 document (and the associated attachment)

- Node A: Modify users/1

- Node B: Replication of users/1 (but not the attachment, it was already sent)

- Node B is now in trouble, since it has a missing attachment

Note that this sequence of events can happen in a distributed system, and we don’t want to leave “holes” in the system. As such, RavenDB knows to detect this properly. Node B will tell Node A that it is missing an attachment and Node A will send it over.

We can utilize the same approach. RavenDB will now remember the last 16K attachments that it sent in the current connection to a node. If the attachment was already sent, we can skip sending it. But if it is missing on the other side, we fall back to the missing attachment behavior and send it anyway.
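Here is a rough Python sketch of that approach, to show how the pieces fit together. It is not the code that shipped in RavenDB. The names `RecentlySentCache`, `Destination` and `replicate`, the use of a content hash as the cache key, and the least-recently-used eviction policy are all assumptions made for illustration; the only details taken from the description above are the 16K limit, the per-connection scope, and the fallback to resending when the other side reports a missing attachment.

```python
from collections import OrderedDict


class RecentlySentCache:
    """Bounded set of attachment hashes sent on the current connection."""

    def __init__(self, capacity=16 * 1024):
        self.capacity = capacity
        self._hashes = OrderedDict()

    def seen(self, content_hash):
        if content_hash in self._hashes:
            self._hashes.move_to_end(content_hash)  # keep hot entries around
            return True
        return False

    def remember(self, content_hash):
        self._hashes[content_hash] = True
        self._hashes.move_to_end(content_hash)
        if len(self._hashes) > self.capacity:
            self._hashes.popitem(last=False)  # evict the oldest entry


class Destination:
    """Stand-in for the receiving node (hypothetical)."""

    def __init__(self):
        self.blobs = {}

    def receive(self, content_hash, data=None):
        """Return True if the attachment is present, False if it is missing."""
        if data is not None:
            self.blobs[content_hash] = data
        return content_hash in self.blobs


def replicate(attachments, destination, cache):
    """Send (content_hash, data) pairs; return the attachment bytes actually sent."""
    sent_bytes = 0
    for content_hash, data in attachments:
        if cache.seen(content_hash):
            # Optimistic path: send only the reference, skip the data.
            if destination.receive(content_hash):
                continue
            # The destination reported a missing attachment: fall back to resending.
        destination.receive(content_hash, data)
        cache.remember(content_hash)
        sent_bytes += len(data)
    return sent_bytes


dest = Destination()
cache = RecentlySentCache()
batch = [("h1", b"a" * 10), ("h2", b"b" * 10)] * 50  # 100 references, 2 unique
sent = replicate(batch, dest, cache)                  # only 20 bytes of data go out

del dest.blobs["h1"]                                  # the attachment vanishes on B
resent = replicate([("h1", b"a" * 10)], dest, cache)  # fallback path resends 10 bytes
```

The cache is only a hint. Correctness still rests on the missing attachment handling, so a stale entry costs an extra round trip rather than a hole in the data.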

In a scenario like the one we face, where we have a lot of duplicated attachments, that can reduce the workload by a significant amount, without having to change the manner in which we replicate data between nodes.
