Production postmortem: Printer out of paper and the RavenDB hang

I spoke about this in the video, and it seems to have caught a lot of people's eyes, so I thought that I would talk here a bit about how we traced the root cause of a critical RavenDB issue to a printer being out of paper.

What is the relationship, I can hear you ask, between RavenDB, a document database, and a printer being out of paper? That is a good question. The answer is pretty much none. There is no DocPrint module inside of RavenDB, and the last time yours truly wrote printer code was over fifteen years ago. But the story started, like all good tech stories, with a phone call. An inconveniently timed phone call, I might add.

Imagine: the year is 2013, and I’m enjoying the best part of the year, December. I love December for a few reasons. I was born in it, and it is a time when pretty much all our customers are busy doing other stuff, so we can focus on pure development. So imagine my surprise when, on the other end of the line, I found a pretty upset admin who had to troubleshoot a RavenDB instance that refused to start. To make things interesting, this admin had drawn the short straw, I assume, and had to man the IT department while everyone else was on vacation. He had nothing to do with RavenDB during normal operations and had only the bare minimum of information about it.

The symptoms were clear, luckily. RavenDB would simply refuse to start, hanging almost immediately, it seemed. No network access, nothing in the logs to indicate a problem. Just… hanging…

Here is the stack trace that we captured:

And that was… it. We started to look into it and ran into this StackOverflow question, which was awesome. Indeed, restarting the print spooler fixed the problem, and RavenDB immediately started running. But I couldn’t leave it like this, and I guess the admin on the other side was kinda bored, because he went along with my investigation.

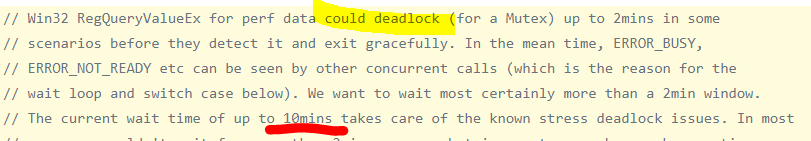

We now have access to the code (we didn’t in 2013) and can look at exactly what is going on. This comment had me… upset:

The service manager in Windows considers any service that hasn’t finished initializing within 30 seconds to have failed, and kills it. You might be putting things together at this point.

After restarting the print spooler, we were able to start RavenDB, but restarting RavenDB caused another failure. Eventually we tracked it down to a network printer that was out of paper (presumably there was no one in the office to notice and refill it). My assumption is that the print spooler was holding a registry key hostage while making a network call to the printer, and that call would eventually time out because the printer had no paper. If you attempted to use a performance counter during that window, you would hang, and if you were running as a service, you would be killed.
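To make that chain concrete, here is a scaled-down simulation in Python. It assumes, as we did without proof, that the spooler and the performance counter code contend on a single registry key. A `threading.Lock` stands in for the key and a sleep stands in for the printer call. All names are hypothetical. The real code simply hung; the reader below bounds its wait so that the contention shows up as `False` instead of a killed service.

```python
import threading
import time

# Hypothetical stand-in for the registry key that both the print spooler
# and the performance counter code need. Names are illustrative only.
registry_key = threading.Lock()


def spooler(hold_seconds: float, holding: threading.Event) -> None:
    """Grab the key, then block on a slow call to an out-of-paper printer."""
    with registry_key:
        holding.set()
        time.sleep(hold_seconds)  # stands in for the network call timing out


def read_perf_counter(timeout: float) -> bool:
    """Try to read a counter during startup, waiting at most `timeout` seconds.

    Returns False instead of hanging if the key is held by someone else.
    """
    if not registry_key.acquire(timeout=timeout):
        return False
    try:
        return True  # the real counter read would happen here
    finally:
        registry_key.release()


if __name__ == "__main__":
    holding = threading.Event()
    t = threading.Thread(target=spooler, args=(0.5, holding))
    t.start()
    holding.wait()
    print(read_perf_counter(timeout=0.1))  # False: key held by the "spooler"
    t.join()
    print(read_perf_counter(timeout=0.1))  # True: key released
```

Bounding the wait only helps if the caller can do something useful with `False`, such as starting with metrics disabled. It is a mitigation sketch, not what RavenDB did.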

I’m left with nothing to do but quote Leslie Lamport:

A distributed system is one in which the failure of a computer you didn’t even know existed can render your own computer unusable.

Oh so true.

We ended up ditching performance counters entirely after running into so many issues with them. As of 2017, people were still running into this issue, so I think that was a great decision.

More posts in "Production postmortem" series:

- (07 Apr 2025) The race condition in the interlock

- (12 Dec 2023) The Spawn of Denial of Service

- (24 Jul 2023) The dog ate my request

- (03 Jul 2023) ENOMEM when trying to free memory

- (27 Jan 2023) The server ate all my memory

- (23 Jan 2023) The big server that couldn’t handle the load

- (16 Jan 2023) The heisenbug server

- (03 Oct 2022) Do you trust this server?

- (15 Sep 2022) The missed indexing reference

- (05 Aug 2022) The allocating query

- (22 Jul 2022) Efficiency all the way to Out of Memory error

- (18 Jul 2022) Broken networks and compressed streams

- (13 Jul 2022) Your math is wrong, recursion doesn’t work this way

- (12 Jul 2022) The data corruption in the node.js stack

- (11 Jul 2022) Out of memory on a clear sky

- (29 Apr 2022) Deduplicating replication speed

- (25 Apr 2022) The network latency and the I/O spikes

- (22 Apr 2022) The encrypted database that was too big to replicate

- (20 Apr 2022) Misleading security and other production snafus

- (03 Jan 2022) An error on the first act will lead to data corruption on the second act…

- (13 Dec 2021) The memory leak that only happened on Linux

- (17 Sep 2021) The Guinness record for page faults & high CPU

- (07 Jan 2021) The file system limitation

- (23 Mar 2020) high CPU when there is little work to be done

- (21 Feb 2020) The self signed certificate that couldn’t

- (31 Jan 2020) The slow slowdown of large systems

- (07 Jun 2019) Printer out of paper and the RavenDB hang

- (18 Feb 2019) This data corruption bug requires 3 simultaneous race conditions

- (25 Dec 2018) Handled errors and the curse of recursive error handling

- (23 Nov 2018) The ARM is killing me

- (22 Feb 2018) The unavailable Linux server

- (06 Dec 2017) data corruption, a view from INSIDE the sausage

- (01 Dec 2017) The random high CPU

- (07 Aug 2017) 30% boost with a single line change

- (04 Aug 2017) The case of 99.99% percentile

- (02 Aug 2017) The lightly loaded trashing server

- (23 Aug 2016) The insidious cost of managed memory

- (05 Feb 2016) A null reference in our abstraction

- (27 Jan 2016) The Razor Suicide

- (13 Nov 2015) The case of the “it is slow on that machine (only)”

- (21 Oct 2015) The case of the slow index rebuild

- (22 Sep 2015) The case of the Unicode Poo

- (03 Sep 2015) The industry at large

- (01 Sep 2015) The case of the lying configuration file

- (31 Aug 2015) The case of the memory eater and high load

- (14 Aug 2015) The case of the man in the middle

- (05 Aug 2015) Reading the errors

- (29 Jul 2015) The evil licensing code

- (23 Jul 2015) The case of the native memory leak

- (16 Jul 2015) The case of the intransigent new database

- (13 Jul 2015) The case of the hung over server

- (09 Jul 2015) The case of the infected cluster
