NewsBlur (a personal news aggregator) suffered a data breach / ransomware “attack”. I’m putting “attack” in quotes because this is the equivalent of having your car broken into after you left it in a bad part of town with the engine running and the keys inside.

As a result of the breach, users’ data was affected, including personal RSS feeds, access tokens for social media, email addresses and other sundry items of varying importance. It looks like about 250GB of data was taken hostage, by the way.

The explanation about what exactly happened is really interesting, however.

NewsBlur moved their MongoDB instance from its own server to a container. Along the way, they accidentally (it looks like a Docker default configuration) opened the MongoDB port to the whole wide world. By default, MongoDB listens only on localhost. In this case, I think that from MongoDB’s perspective it was still listening on a local port; it was the Docker infrastructure that did the port forwarding and tied the public port to the instance. From that point on, it was just a matter of time. It apparently took two hours or so for some automated script to find the welcome mat, jump in, wreak havoc and move on.
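To make the port forwarding point concrete, here is a minimal sketch in Python of a check you could run over Docker-style publish specs (the value passed to `-p`) to flag the ones that bind beyond the loopback interface. It assumes the Docker daemon’s default bind address (`0.0.0.0`) has not been changed, it does not handle IPv6 host addresses, and the function names are hypothetical. It is not a reconstruction of NewsBlur’s actual configuration.

```python
import ipaddress


def published_host_ip(spec: str) -> str:
    """Return the host IP that a Docker-style publish spec binds to.

    Handles 'container', 'host:container' and 'ip:host:container',
    each with an optional '/tcp' or '/udp' suffix. IPv6 is not handled.
    """
    ports = spec.split("/", 1)[0]  # drop the optional protocol suffix
    parts = ports.split(":")
    if len(parts) == 3:
        return parts[0]  # explicit host IP was given
    if len(parts) in (1, 2):
        # Assumption: daemon default, which binds on all interfaces.
        return "0.0.0.0"
    raise ValueError(f"unsupported publish spec: {spec!r}")


def binds_beyond_loopback(spec: str) -> bool:
    """True if the published port is reachable from outside the host itself."""
    return not ipaddress.ip_address(published_host_ip(spec)).is_loopback


if __name__ == "__main__":
    for spec in ("27017:27017", "127.0.0.1:27017:27017"):
        print(spec, "->", "EXPOSED" if binds_beyond_loopback(spec) else "loopback only")
```

The short form `27017:27017` is the one that bites: nothing in it mentions a public interface, yet that is where the port ends up.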

I’m actually surprised that it took so long. In some cases, machines are attacked in under a minute from showing up on the public internet. I used the term bad part of town earlier, but it is more accurate to say that the entire internet is a hostile environment and should be treated as such.

That leads to the next problem. You should never assume that you are running anywhere else. In the case above, NewsBlur assumed that they were running on a private network that only the internal servers could access. About a year ago, Microsoft had a similar issue: they exposed an Elastic cluster that was supposed to be on an internal network only, and lost 250 million customer support records.

In both cases, the problem was lack of defense in depth. Once the attacker was able to connect to the system, it was game over. There are monitoring solutions that you can use, but in general, the idea is that you don’t trust your network. You authenticate and encrypt all the traffic, regardless of where you are running it. The additional encryption cost is not usually meaningful for typical workloads (even for demanding workloads), given that most CPUs have dedicated encryption instructions.
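As an illustration of “authenticate and encrypt all the traffic”, here is a minimal sketch using Python’s standard `ssl` module to build a server-side TLS context that refuses any client that does not present a certificate signed by a CA you trust. The function name and the file paths in the usage note are hypothetical, and this is a generic example of mutual TLS, not how any particular database implements it.

```python
import ssl
from typing import Optional


def build_mutual_tls_context(
    certfile: Optional[str] = None,
    keyfile: Optional[str] = None,
    client_ca: Optional[str] = None,
) -> ssl.SSLContext:
    """Server-side context that encrypts traffic and requires client certs."""
    # Purpose.CLIENT_AUTH produces a context meant for the server side.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Reject the handshake unless the client presents a valid certificate.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if certfile:
        # The server's own certificate and private key.
        ctx.load_cert_chain(certfile, keyfile)
    if client_ca:
        # Only client certificates issued by this CA will be accepted.
        ctx.load_verify_locations(cafile=client_ca)
    return ctx
```

In a real deployment you would pass actual paths, for example `build_mutual_tls_context("server.pem", "server.key", "clients-ca.pem")`, and wrap the listening socket with `ctx.wrap_socket(sock, server_side=True)`. With this in place, an open port on its own is no longer enough to get at the data.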

With RavenDB, we have taken steps to ensure that:

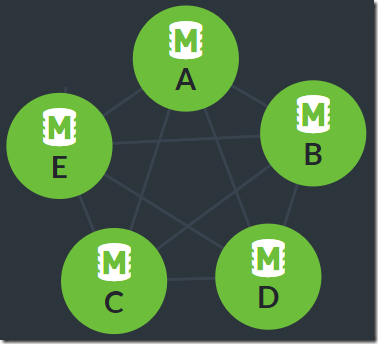

- It is simple and easy to run in a secure mode, using X.509 client certificates for authentication and encrypting all network communications.

- It is hard and complex to run without security.

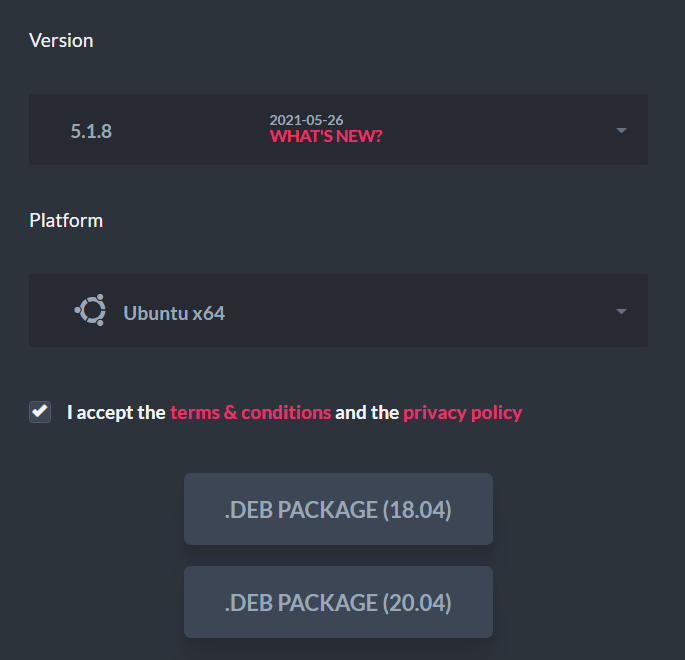

If you run the RavenDB setup wizard, it takes under two minutes to end up with a secured solution, one that you can expose to the outside world and not worry about your data taking a walk.
