In just 20 minutes, I try to condense ~15 years of working on a database engine in C# / .NET and show how you can use C# to build system software. I think it ended up being really nice:

When you search for some text in RavenDB, you’ll use a case-insensitive search by default. This means that when you run this query:

You’ll get users with any capitalization of “Oren”. You can ask RavenDB to do a case-sensitive search, like so:

In this case, you’ll find only exact matches, including casing. So far, that isn’t really surprising, right?

Under what conditions will you need to do searches like that? Well, it is usually when the data itself is case sensitive. User names on Unix are a good example of that, but you may also have Base64 data (where case matters), product keys, etc.

What is interesting is that this is a property of the field, usually.

Now, how does RavenDB handle this scenario? One option would be to index the data as is and compare it using a case-insensitive comparator. That usually ends up being quite expensive. It’s cheaper by far to normalize the text once and compare it using ordinals.
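To make the difference concrete, here is a minimal Python sketch of the normalize-then-compare approach. It is not RavenDB's implementation: the names `normalize`, `build_terms`, and `contains` are hypothetical, and the sketch assumes that lowercasing is the only normalization needed.

```python
from bisect import bisect_left


def normalize(text: str) -> str:
    # Assumption: lowercasing is the whole normalization step.
    return text.lower()


def build_terms(values):
    # Normalize once, at indexing time, and keep the terms sorted.
    # Python compares strings by code point, i.e. an ordinal comparison.
    return sorted({normalize(v) for v in values})


def contains(terms, query: str) -> bool:
    # Normalize the query once, then binary search with ordinal comparisons.
    needle = normalize(query)
    i = bisect_left(terms, needle)
    return i < len(terms) and terms[i] == needle


terms = build_terms(["Oren", "OREN", "Maya", "oren"])
print(terms)                    # ['maya', 'oren']
print(contains(terms, "oReN"))  # True
print(contains(terms, "Orin"))  # False
```

The point of the design is that the case folding cost is paid once per value at indexing time and once per query, instead of on every comparison during the search.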

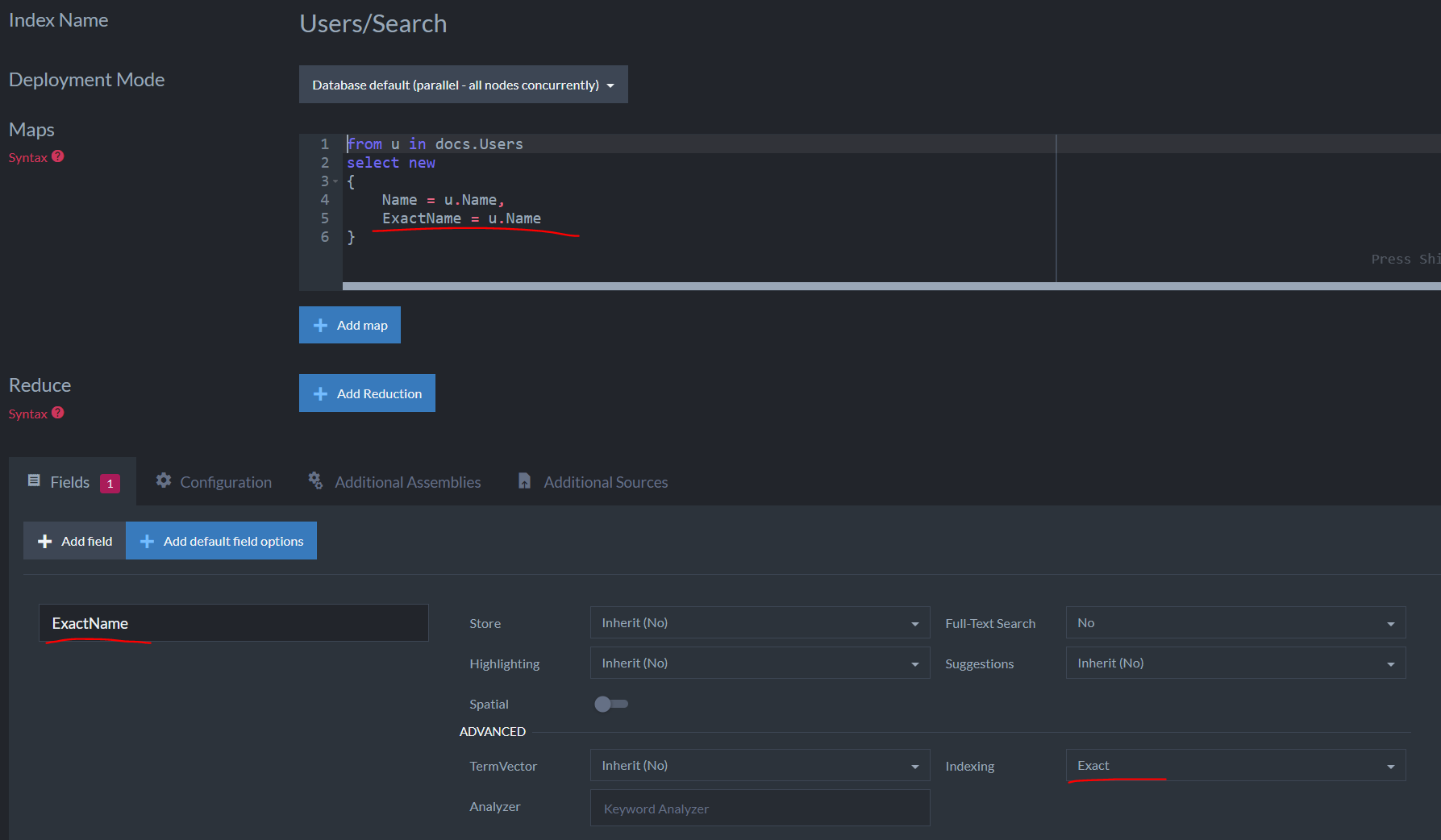

The exact() method tells us how the field is supposed to be treated. This is done at indexing time. If we want to be able to query in both a case-sensitive and a case-insensitive manner, we need to have two fields. Here is what this looks like:

We indexed the name field twice, marking it as case sensitive for the second index field.

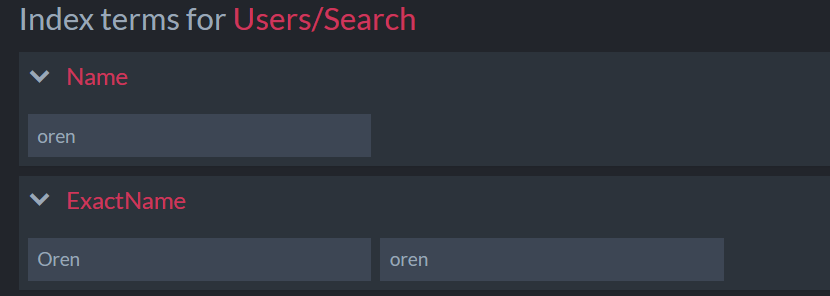

Here is what actually happens behind the scenes because of this configuration:

The analyzer determines the terms that are generated for each index field. The first index field (Name) uses the default analyzer, LowerCaseKeywordAnalyzer, while the second index field (ExactName) uses the default exact analyzer, KeywordAnalyzer.
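A rough way to picture this is the Python sketch below. The two functions are stand-ins named after the analyzers mentioned above, not the real classes, and `INDEX_FIELDS`, `build_index`, and `query` are hypothetical helpers. The sketch assumes each analyzer emits the whole value as a single term.

```python
from collections import defaultdict


def lower_case_keyword_analyzer(value: str) -> str:
    # Stand-in for the default analyzer: one lowercased term per value.
    return value.lower()


def keyword_analyzer(value: str) -> str:
    # Stand-in for the exact analyzer: one term per value, left unchanged.
    return value


# Hypothetical index definition: index field -> (source field, analyzer).
INDEX_FIELDS = {
    "Name": ("name", lower_case_keyword_analyzer),
    "ExactName": ("name", keyword_analyzer),
}


def build_index(docs):
    # index[field][term] -> set of document ids
    index = {field: defaultdict(set) for field in INDEX_FIELDS}
    for doc_id, doc in docs.items():
        for field, (source, analyzer) in INDEX_FIELDS.items():
            index[field][analyzer(doc[source])].add(doc_id)
    return index


def query(index, field, value):
    # The query value goes through the same analyzer as the indexed field.
    _, analyzer = INDEX_FIELDS[field]
    return sorted(index[field].get(analyzer(value), set()))


docs = {
    "users/1": {"name": "Oren"},
    "users/2": {"name": "OREN"},
    "users/3": {"name": "oren"},
}
index = build_index(docs)
print(query(index, "Name", "oren"))       # ['users/1', 'users/2', 'users/3']
print(query(index, "ExactName", "Oren"))  # ['users/1']
```

The same source value ends up as one term under Name and as three distinct terms under ExactName, which is why the two fields answer different questions.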

We’ll be in QCon San Francisco next week (Oct 24 – 26), and we’ll be very happy to meet you in person.

We are going to show off some of the new features in RavenDB 5.4, discuss what is on the roadmap for RavenDB and present some really cool aspects of what you can do with our database.

Trevor Hunter (CTO of @kobo Inc) will present a session on: Our Journey Into High Performance and Reliable Document Databases with RavenDB.

Looking forward to seeing you there.

I’m not talking about this much anymore, but alongside RavenDB, my company produces a set of tools to help you work with OR/M (object relational mappers) such as NHibernate or Entity Framework as well as tracking what is going on with Cosmos DB.

The profilers are implemented as two separate components. We have the Appender, which runs inside the profiled process, and the Profiler, which is a WPF application that analyzes and shows you the results of the profiling. For the profilers, all the execution is done on the users’ machine.

We have crash reporting enabled and we are diligent in fixing any and all errors from the field. We recently ran into a whole spate of errors, looking something like this:

    System.NullReferenceException: Object reference not set to an instance of an object.
       at System.Windows.Controls.VirtualizingStackPanel.UpdateExtent(Boolean areItemChangesLocal)
       at System.Windows.Controls.VirtualizingStackPanel.ShouldItemsChangeAffectLayoutCore(Boolean areItemChangesLocal, ItemsChangedEventArgs args)
       at System.Windows.Controls.VirtualizingPanel.OnItemsChangedInternal(Object sender, ItemsChangedEventArgs args)
       at System.Windows.Controls.Panel.OnItemsChanged(Object sender, ItemsChangedEventArgs args)
       at System.Windows.Controls.ItemContainerGenerator.OnItemAdded(Object item, Int32 index, NotifyCollectionChangedEventArgs collectionChangedArgs)
       at System.Windows.Controls.ItemContainerGenerator.OnCollectionChanged(Object sender, NotifyCollectionChangedEventArgs args)
       at System.Windows.WeakEventManager.ListenerList`1.DeliverEvent(Object sender, EventArgs e, Type managerType)
       at System.Windows.WeakEventManager.DeliverEvent(Object sender, EventArgs args)
       at System.Collections.Specialized.NotifyCollectionChangedEventHandler.Invoke(Object sender, NotifyCollectionChangedEventArgs e)
       at System.Windows.Data.CollectionView.OnCollectionChanged(NotifyCollectionChangedEventArgs args)
       at System.Windows.WeakEventManager.ListenerList`1.DeliverEvent(Object sender, EventArgs e, Type managerType)
       at System.Windows.WeakEventManager.DeliverEvent(Object sender, EventArgs args)
       at System.Windows.Data.CollectionView.OnCollectionChanged(NotifyCollectionChangedEventArgs args)
       at System.Windows.Data.ListCollectionView.ProcessCollectionChangedWithAdjustedIndex(NotifyCollectionChangedEventArgs args, Int32 adjustedOldIndex, Int32 adjustedNewIndex)
       at System.Collections.Specialized.NotifyCollectionChangedEventHandler.Invoke(Object sender, NotifyCollectionChangedEventArgs e)
       at System.Collections.ObjectModel.ObservableCollection`1.OnCollectionChanged(NotifyCollectionChangedEventArgs e)
       at Caliburn.Micro.BindableCollection`1.OnCollectionChanged(NotifyCollectionChangedEventArgs e)
       at System.Collections.ObjectModel.ObservableCollection`1.InsertItem(Int32 index, T item)
       at Caliburn.Micro.BindableCollection`1.OnUIThread(Action action)
       at HibernatingRhinos.Profiler.Client.Sessions.SessionListModel.Add(SessionModel model)

And here is the relevant code:

This is called from a timer thread (not the UI one), and the Items collection in this case is a BindableCollection<T>.

The error is happening deep in the guts of WPF and it seems like it has been triggered by some recent Windows update. Here is the “fix” for this issue:

Basically, don’t report this error, and continue executing normally (the next UI operation will fix the UI state, usually in under 200 ms).

This is the right call in terms of development time and effort, but I have to say, seeing a change like that makes me feel quite uncomfortable.

A long while ago, I was involved in a project that dealt with elder home care. The company I was working for was contracted by the government to send care workers to the homes of elderly people to assist in routine tasks. Depending on the condition of the patient in question, they would spend anything from 2 – 8 hours a day a few days a week with the patient. Some caretakers would have multiple patients that they would be in charge of.

There were a lot of regulations and details that we had to get right. A patient may be allowed a certain amount of hours a week by the government, but may also pay for additional hours from their own pocket, etc. Quite interesting to work on, but not the topic of this post.

A key aspect of the system was actually paying the workers, of course. The problem was the way it was handled. At the end of each month, they would designate “office hours” for the care workers to come and submit their time sheets. Those had to be signed by the patients or their family members and were submitted in person at the office. Based on the number of hours worked, the workers would be paid.

The time sheets would look something like this:

A single worker would typically have 4 – 8 of those, and the office would spend a considerable amount of time deciphering those, computing the total hours and handing over payment.

The idea of the system I was working on was to automate all of that. Here is more or less what I needed to do:

For each one of the timesheet entries, the office would need to input the start & end times, who the care worker took care of, whether the time was approved (actually, whether this was paid by the government, privately, by the company, etc), and whether it was counted as overtime, etc.

The rules for all of those were… interesting, but we got it working and tested. And then we got to talk with the actual users.

They took one look at the user interface they had to work with and absolutely rebelled. The underlying issue was that during the end of the month period, each branch would need to handle hundreds of care workers, typically within four to six hours. They didn’t have the time to do that (pun intended). Their current process was to review the submitted time sheet with the care worker, stamp it with approved, and put just the total hours worked into the payroll system.

Having to spend so much time on data entry was horrendous for them, but the company really wanted to have that level of granularity, to be able to properly track how many hours were worked and handle its own billing more easily.

Many of the care workers were also… non-technical, and the timesheet had to be approved by the patient or a family member. Having a signed piece of paper was easy to handle; trying to get them to go on a website and enter hours was a non-starter. That was also before everyone had a smartphone (and even today I would assume that would be difficult in that line of business).

As an additional twist, it turns out that the manual process allowed the office employees to better manage the care workers. For example, they may give them a 0.5 / hour adjustment for a particular month to manage a difficult client or deal with some specific issue, or approve (at their discretion) overtime pay when it wasn’t quite “proper” to do so.

One of the reasons that the company wanted to move to a modern system was to avoid these sorts of “initiatives”, but they turned out to be quite important for the proper management of the care workers and for actually getting things done. For an additional twist, they had multiple branches, each of which had a different methodology for handling this, and all of them were different from what HQ thought it should be.

The process turned out to be even more complex than we initially understood, because there was a lot of flexibility in the system that was actually crucial for the proper running of the business.

If I recall properly, we ended up having a two-stage approach. During the end of the month rush, they would fill in the gross hours and payment details. That part of the system was intentionally simplified to the point where we did almost no data validation and trusted them to put the right values.

After payroll was processed, they had to actually put in all those hours and we would run a check on the expected amount / validation vs. what was actually paid. That gave the company the insight into what was going on that they needed, the office people were able to keep on handling things the way they were used to, and discrepancies could be raised and handled more easily.
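As a rough illustration of that second stage, here is a minimal Python sketch. It is written from the description above, not from the original system: the `Entry` type, the `reconcile` function, the worker names, and the tolerance are all hypothetical. The sketch reports discrepancies between the gross hours that were paid and the detailed entries, and it never rejects either input.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Entry:
    worker: str
    start: datetime
    end: datetime


def detailed_hours(entries):
    # Sum the hours per worker from the detailed timesheet entries.
    totals = {}
    for e in entries:
        hours = (e.end - e.start).total_seconds() / 3600
        totals[e.worker] = totals.get(e.worker, 0.0) + hours
    return totals


def reconcile(gross_hours, entries, tolerance=0.01):
    # Compare what was paid (stage one) with what was logged (stage two).
    # Mismatches are returned for review; nothing is refused.
    detailed = detailed_hours(entries)
    discrepancies = []
    for worker in sorted(set(gross_hours) | set(detailed)):
        paid = gross_hours.get(worker, 0.0)
        logged = detailed.get(worker, 0.0)
        if abs(paid - logged) > tolerance:
            discrepancies.append((worker, paid, logged))
    return discrepancies


gross = {"worker-a": 6.0, "worker-b": 4.5}
entries = [
    Entry("worker-a", datetime(2020, 1, 6, 8, 0), datetime(2020, 1, 6, 12, 0)),
    Entry("worker-a", datetime(2020, 1, 8, 8, 0), datetime(2020, 1, 8, 10, 0)),
    Entry("worker-b", datetime(2020, 1, 7, 9, 0), datetime(2020, 1, 7, 13, 0)),
]
print(reconcile(gross, entries))  # [('worker-b', 4.5, 4.0)]
```

In this made-up data, the extra half hour for worker-b is the kind of discretionary adjustment the offices relied on. It shows up as something to review rather than as a validation error.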

I was there in the “I’m just a techie” role, so I got to sit on the sidelines and watch the tug-of-war between the different groups. It was quite interesting to see. I described the process above in a bit of a chaotic manner, but there were proper procedures and processes in the offices. They were just something that the company HQ never even realized was there.

It also taught me quite a lot about the importance of accepting “invalid” data. In many cases, you’ll see computerized solutions flat out refuse to accept values that they consider to be wrong. The problem is that often, you need to record reality, which may not agree with validation rules on your systems. And in most cases, reality wins.

A few days ago I had an interview with Milan Groeneweg. I think it was quite interesting, even if I say so myself.

A customer called us with a problem. They had set up a production cluster successfully and could manually verify that everything was working, except that it would fail when they tried to connect to it via the client API.

The error in question looked something like this:

CertificateNameMismatchException: You are trying to contact host rvn-db-72 but the hostname must match one of the CN or SAN properties of the server certificate: CN=rvn-db-72, OU=UAT, OU=Computers, OU=Operations, OU=Jam, DC=example, DC=com, DNS Name=rvn-db-72.jam.example.com

That is… a really strange error. Because they were accessing the server using: rvn-db-72.jam.example.com, and that was the configured certificate for it. But for some reason the RavenDB client was trying to connect directly to rvn-db-72. It was able to connect to it, but failed on the hostname validation because the certificates didn’t match.

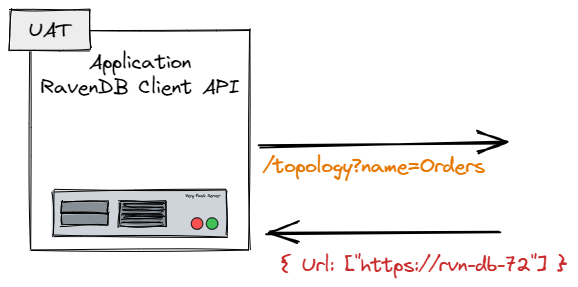

Initially, we suspected that there was some sort of MITM or a network appliance that got in the way, but we finally figured out that we had the following sequence of events, shown in the image below. The RavenDB client was properly configured, but when it asked the server where the database was, the server would give the wrong URL, leading to this error.

This deserves some explanation. When we initialize the RavenDB client, one of the first things that the client does is query the cluster for the URLs where it can find the database it needs to work with. This is because the distribution of databases in a cluster doesn’t have to match the nodes in the cluster.

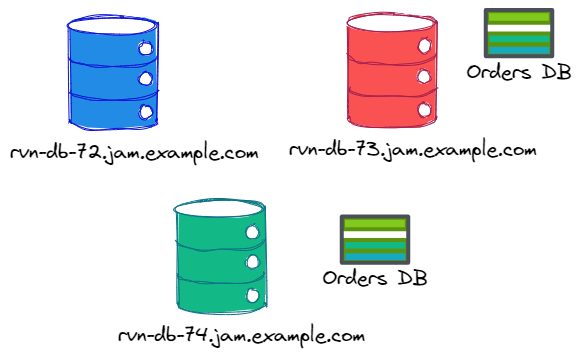

Consider this setup:

In this case, we have three nodes in the cluster, but the “Orders DB” is located only on two of them. If we query the rvn-db-72 node for the topology of “Orders DB”, we’ll get nodes rvn-db-73 and rvn-db-74. Here is what this will look like:

Now that we understand what is going on, what is the root cause of the problem?

A misconfigured server, basically. The PublicServerUrl for the server in question was left as the hostname, instead of the full domain name.

This configuration meant that the server would give the wrong URL to the client, which would then fail.
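The failure mode can be sketched in a few lines of Python. This is an illustration only, not the RavenDB client's code: `topology_urls` and `hostname_matches` are hypothetical helpers, the database name is made up, and the sketch assumes the only name the certificate accepts is its DNS name (no wildcard handling).

```python
from urllib.parse import urlparse

# Assumption: the certificate is valid only for this DNS name.
CERT_DNS_NAMES = {"rvn-db-72.jam.example.com"}


def topology_urls(nodes, database):
    # The server answers with the configured public URL of each hosting node.
    return [n["public_url"] for n in nodes if database in n["databases"]]


def hostname_matches(url, dns_names):
    # The host the client connects to must be one of the certificate's names.
    host = urlparse(url).hostname
    return host is not None and host.lower() in {n.lower() for n in dns_names}


# Hypothetical node records: same server, two values for the public URL.
misconfigured = [{"public_url": "https://rvn-db-72", "databases": {"Demo"}}]
fixed = [{"public_url": "https://rvn-db-72.jam.example.com", "databases": {"Demo"}}]

for nodes in (misconfigured, fixed):
    for url in topology_urls(nodes, "Demo"):
        print(url, hostname_matches(url, CERT_DNS_NAMES))
# https://rvn-db-72 False
# https://rvn-db-72.jam.example.com True
```

The client's own configuration never enters into it. Whatever URL the server hands back in the topology is the one that gets validated against the certificate.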

This is something that only the client API does, so the Studio behaved just fine, which made it harder to figure out what exactly was going on. The actual fix is trivial, naturally, but figuring it out took too long. We’ll be adding an alert to detect and resolve misconfigurations like that in the future.