In the previous post I showed how we can mess around with the encrypted text, resulting in a valid (but not the original) plain text. As you can imagine, that can be used for many nefarious purposes. Luckily, there is a straightforward solution for this issue: we can add a MAC (message authentication code) to ensure that the encrypted data wasn’t tampered with. That is actually pretty easy to do, since we already have HMAC, which is meant for exactly this purpose.

The interesting question here is: what exactly should we sign? Here are the options:

- Sign the plain text of the message using a keyed hash function (HMAC-MD5, in our case), an arrangement usually called Encrypt-and-MAC. Because we are using the secret key to compute the hash, just looking at the hashed value will not tell us anything about the plain text (if we were using plain MD5, by contrast, we could use rainbow tables to figure out the plain text from the hash). Since there is no security issue with making the signature public (at least, I don’t think there is), we can just append it to the output of the encryption as plain text. I’ll remind you again that I’m not a cryptographer by trade.

- Sign the plain text of the message (using a keyed hash function or a regular cryptographic hash function) and append the hash, encrypted, to the output message. This is usually called MAC-then-Encrypt.

- Sign the encrypted value of the message and append that hash to the output message. This is usually called Encrypt-then-MAC.
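To make the three options concrete, here is a minimal Python sketch (the series itself uses Zig). The keystream is a hypothetical stand-in built from HMAC-MD5 in counter mode, and every name here is made up for illustration; the only thing that matters is the order of the MAC and encryption steps.

```python
import hashlib
import hmac

def keystream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    # Hypothetical toy stream cipher: HMAC-MD5(key, nonce || counter) blocks
    # form the keystream, which is XORed with the data (the same call decrypts).
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hmac.new(key, nonce + counter.to_bytes(8, "little"), hashlib.md5).digest()
        counter += 1
    return bytes(d ^ k for d, k in zip(data, stream))

def mac(key: bytes, data: bytes) -> bytes:
    # 16-byte HMAC-MD5 tag.
    return hmac.new(key, data, hashlib.md5).digest()

def encrypt_and_mac(k_enc: bytes, k_mac: bytes, nonce: bytes, plain: bytes) -> bytes:
    # Option 1: tag over the plain text, appended in the clear.
    return keystream_xor(k_enc, nonce, plain) + mac(k_mac, plain)

def mac_then_encrypt(k_enc: bytes, k_mac: bytes, nonce: bytes, plain: bytes) -> bytes:
    # Option 2: tag over the plain text, then encrypted together with it.
    return keystream_xor(k_enc, nonce, plain + mac(k_mac, plain))

def encrypt_then_mac(k_enc: bytes, k_mac: bytes, nonce: bytes, plain: bytes) -> bytes:
    # Option 3: tag over the cipher text, appended in the clear.
    cipher = keystream_xor(k_enc, nonce, plain)
    return cipher + mac(k_mac, cipher)
```

Only with the third layout can the receiver check the tag using nothing but the received bytes and the MAC key, before running the keystream at all; the first two force it to decrypt first.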

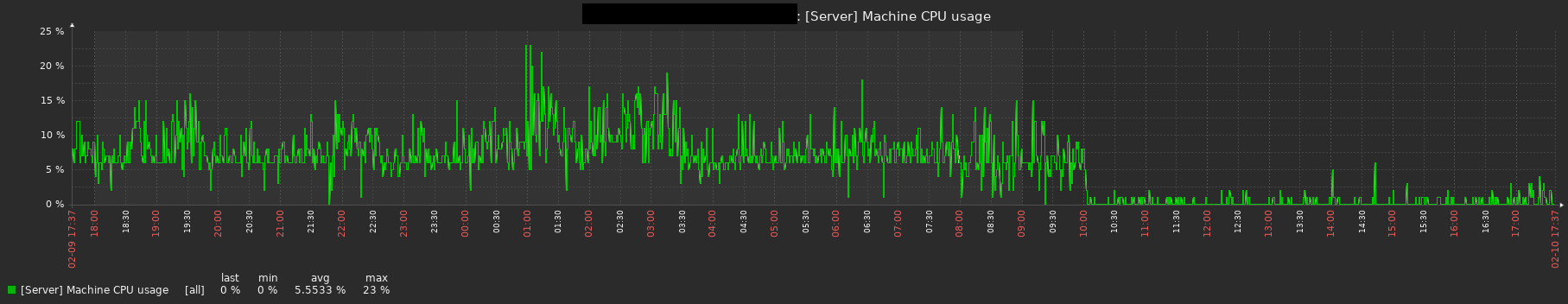

A visualization might make it easier to understand. If you want to read more, there is a great presentation here.

The first two options are bad: using either of them will leak your data in various ways. There is something apparently called the Cryptographic Doom Principle, which is very relevant here. The idea is simple: we don’t trust the encrypted value, since it may have been modified by an adversary, so we must not perform any cryptographic operation on it before we authenticate it. The first two options both require us to take an action (decrypting the data) before we can authenticate the message, and an attacker can then use various tricks to rip apart the whole scheme. Therefore, the very first thing we do must be to verify that the encrypted text we were handed was indeed signed by a trusted party (one that has the secret key).

If you look closely at the image above, you can see that I’m using two keys here instead of one: key1 and key2. What is that about?

In cryptography, there is a strong reluctance to reuse the same key in different contexts. If we use a single key in multiple scenarios (such as encryption and authentication), a weakness in one of them can be exploited in the other. Remember, cryptography is just math, and the fear is that given two values computed with the same key but with different algorithms, an attacker can combine them in some useful way. That has led to practical attacks in the past, so the general practice is to avoid reusing keys. The good news is that given a single cryptographic key, it is easy to generate new keys using a key derivation function.

I’m still going to limit myself to HMAC-MD5 only (remember, none of this code is meant to actually be used), so I can derive a new key from an existing one using the following mechanism:

The idea is that we run HMAC, keyed with the existing key, over the static domain string we are given to generate the new key, with the nonce mixed in to inject even more entropy. Since HMAC-MD5 outputs 16 bytes and I need a 32-byte key, I’m doing that twice, with a different counter value each time. I also swap the order of the fields between the two rounds, hashing (nonce, domain) in one and (domain, nonce) in the other, to make it more interesting.
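The post’s Zig code isn’t reproduced here, so below is a minimal Python sketch of the mechanism as described. The exact byte layout (a one-byte counter prefix followed by the two fields) and the fixed-length nonce are assumptions.

```python
import hashlib
import hmac

def derive_key(key: bytes, nonce: bytes, domain: bytes) -> bytes:
    """Derive a 32-byte subkey for `domain` from `key` and `nonce`.

    Hypothetical layout: counter byte first, then the two fields, with the
    field order swapped between rounds. Assumes a fixed-length nonce, so the
    concatenation of nonce and domain is unambiguous.
    """
    # Round 1: counter 0x01, then (nonce, domain) -> 16 bytes.
    first = hmac.new(key, b"\x01" + nonce + domain, hashlib.md5).digest()
    # Round 2: counter 0x02, then (domain, nonce) -> another 16 bytes.
    second = hmac.new(key, b"\x02" + domain + nonce, hashlib.md5).digest()
    return first + second  # 32 bytes in total
```

Deriving with two different domain strings from the same key and nonce yields two unrelated-looking 32-byte keys, which is exactly what key1 and key2 in the diagram need.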

A reminder: I didn’t spend any time trying to figure out what kind of security this scheme provides. It looks very much like what Sodium does for key derivation, but I wouldn’t trust it with anything.

With that in place, here is the new code for encryption:

We have a lot going on here. In the initWithNonce() function, we generate the derived keys for the two domains. Then we generate the block of key stream, as we did previously. The last stage in initWithNonce() is initializing the MAC computation. Note that in addition to using a derived key for the MAC, I’m also adding the nonce as the first thing that we’ll hash. That should have no effect on security, but it ties the output hash even more closely to this specific encryption.
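A minimal Python sketch of the initialization step, assuming a 16-byte nonce, hypothetical domain strings `b"enc"` and `b"mac"`, and the two-round HMAC-MD5 derivation described earlier (with an assumed byte layout). The keystream generation that initWithNonce() also performs is omitted.

```python
import hashlib
import hmac

NONCE_SIZE = 16  # assumed nonce length

def derive_key(key: bytes, nonce: bytes, domain: bytes) -> bytes:
    # Two HMAC-MD5 rounds (16 bytes each) with swapped field order -> 32 bytes.
    first = hmac.new(key, b"\x01" + nonce + domain, hashlib.md5).digest()
    second = hmac.new(key, b"\x02" + domain + nonce, hashlib.md5).digest()
    return first + second

def init_with_nonce(key: bytes, nonce: bytes):
    """Return (encryption subkey, MAC state) for a single message."""
    if len(nonce) != NONCE_SIZE:
        raise ValueError("nonce must be exactly NONCE_SIZE bytes")
    enc_key = derive_key(key, nonce, b"enc")  # hypothetical domain names
    mac_key = derive_key(key, nonce, b"mac")
    mac_gen = hmac.new(mac_key, digestmod=hashlib.md5)
    mac_gen.update(nonce)  # the nonce is the first thing that gets hashed
    return enc_key, mac_gen
```

Every cipher text byte fed into `mac_gen` afterwards is therefore authenticated together with the nonce, under a key that only this nonce produces.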

In the xorWithKeyStream() function, you’ll note that I’m now passing both an input and an output buffer; aside from that, it is exactly the same as before (with the actual key stream generation moved to genKeyStreamBlock()). Things get interesting in the encryptBlock() function. There we XOR the value that we encrypt with the key stream and push the result to the output. We also add the encrypted value to the MAC that we generate.

The idea with encryptBlock() is to allow you to build an encrypted message piecemeal. Once you are done with the data you want to encrypt, you need to call finalize(). That copies the nonce to the output and completes the computation of the MAC over the encrypted portion.

The encrypt() function is provided to make things easier when you want to encrypt a single buffer. (And yes, I’m not doing any explicit bounds checks here, relying on Zig’s runtime bounds checks to panic if needed. Did I mention that this isn’t production-level code?)
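Here is a hedged Python sketch of the streaming API described above (initWithNonce(), encryptBlock(), finalize(), and the one-shot encrypt()), using snake_case names. Several details are assumptions rather than the post’s actual Zig code: the keystream construction, the hypothetical `b"enc"`/`b"mac"` domain strings, the trailer layout (nonce followed by the MAC), and carrying leftover keystream bytes between calls.

```python
import hashlib
import hmac

def derive_key(key: bytes, nonce: bytes, domain: bytes) -> bytes:
    first = hmac.new(key, b"\x01" + nonce + domain, hashlib.md5).digest()
    second = hmac.new(key, b"\x02" + domain + nonce, hashlib.md5).digest()
    return first + second

class Encryptor:
    def __init__(self, key: bytes, nonce: bytes):
        # Counterpart of initWithNonce(): derive both keys, start the MAC with the nonce.
        self.nonce = nonce
        self.enc_key = derive_key(key, nonce, b"enc")
        self.mac_gen = hmac.new(derive_key(key, nonce, b"mac"), nonce, hashlib.md5)
        self.counter = 0
        self.stream = b""  # unused keystream bytes carried over between calls

    def _gen_keystream_block(self) -> bytes:
        # Hypothetical keystream block: HMAC-MD5(enc_key, nonce || counter).
        block = hmac.new(self.enc_key, self.nonce + self.counter.to_bytes(8, "little"),
                         hashlib.md5).digest()
        self.counter += 1
        return block

    def _xor_with_keystream(self, data: bytes) -> bytes:
        while len(self.stream) < len(data):
            self.stream += self._gen_keystream_block()
        ks, self.stream = self.stream[:len(data)], self.stream[len(data):]
        return bytes(d ^ k for d, k in zip(data, ks))

    def encrypt_block(self, plain: bytes) -> bytes:
        cipher = self._xor_with_keystream(plain)
        self.mac_gen.update(cipher)  # the MAC covers the cipher text, not the plain text
        return cipher

    def finalize(self) -> bytes:
        # Trailer that follows the cipher text: the nonce, then the 16-byte MAC.
        return self.nonce + self.mac_gen.digest()

def encrypt(key: bytes, nonce: bytes, plain: bytes) -> bytes:
    # One-shot convenience wrapper over the streaming API.
    enc = Encryptor(key, nonce)
    return enc.encrypt_block(plain) + enc.finalize()
```

Calling encrypt_block() several times and then finalize() yields the same bytes as a single encrypt() call, because both the keystream position and the MAC state carry over between calls.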

For encryption, we can pass either a single buffer or the data in pieces. For decryption, on the other hand, the situation isn’t as simple. To decrypt the data properly, we first need to verify that it wasn’t modified, which means that we need all of it before we can decrypt anything. The API reflects this behavior:

The decrypt() function does perform some checks, since we are dealing with input that we should expect to be malicious. The first thing that we do is extract the MAC and the nonce from the cipher text buffer. I decided it would be simpler to require them as part of a single buffer (although, as you can imagine, it would be very simple to change the API to accept them as independent values).

Once we have the nonce, we can initialize the struct with the key and nonce (which also derives the keys and sets up the macGen properly). The next step is to compute the hash over the encrypted text and verify that it matches our expectation.
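Below is a minimal Python sketch of that decryption flow, assuming a hypothetical layout (cipher text, then a 16-byte nonce, then the 16-byte HMAC-MD5 tag) and a hypothetical key derivation and keystream. A small encrypt() is included only so the example can produce valid input. Unlike the post, it compares tags with the standard library’s hmac.compare_digest() rather than a short-circuiting equality check.

```python
import hashlib
import hmac

NONCE_SIZE = 16  # assumed
MAC_SIZE = 16    # HMAC-MD5 digest size

def derive_key(key: bytes, nonce: bytes, domain: bytes) -> bytes:
    first = hmac.new(key, b"\x01" + nonce + domain, hashlib.md5).digest()
    second = hmac.new(key, b"\x02" + domain + nonce, hashlib.md5).digest()
    return first + second

def keystream_xor(enc_key: bytes, nonce: bytes, data: bytes) -> bytes:
    # Hypothetical keystream: HMAC-MD5(enc_key, nonce || counter) blocks.
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hmac.new(enc_key, nonce + counter.to_bytes(8, "little"), hashlib.md5).digest()
        counter += 1
    return bytes(d ^ k for d, k in zip(data, stream))

def encrypt(key: bytes, nonce: bytes, plain: bytes) -> bytes:
    cipher = keystream_xor(derive_key(key, nonce, b"enc"), nonce, plain)
    tag = hmac.new(derive_key(key, nonce, b"mac"), nonce + cipher, hashlib.md5).digest()
    return cipher + nonce + tag

def decrypt(key: bytes, blob: bytes) -> bytes:
    if len(blob) < NONCE_SIZE + MAC_SIZE:
        raise ValueError("input too short")
    cipher = blob[:-(NONCE_SIZE + MAC_SIZE)]
    nonce = blob[-(NONCE_SIZE + MAC_SIZE):-MAC_SIZE]
    tag = blob[-MAC_SIZE:]
    # Step 1: authenticate. Recompute the MAC over nonce || cipher text.
    expected = hmac.new(derive_key(key, nonce, b"mac"), nonce + cipher, hashlib.md5).digest()
    if not hmac.compare_digest(expected, tag):
        raise ValueError("MAC mismatch")
    # Step 2: only now is the keystream generated and applied.
    return keystream_xor(derive_key(key, nonce, b"enc"), nonce, cipher)
```

If the tag doesn’t match, decrypt() raises before the encryption key is even derived, so no keystream is ever applied to attacker-controlled bytes.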

Yes, I’m using eql() here for the comparison. It is a short-circuiting operation, and I’m doing that intentionally so I can talk about it in a future post.
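To illustrate the distinction, here are two hypothetical Python comparison functions. The first stops at the first mismatching byte, which is how the post describes eql(); the second looks at every byte before deciding. This is only a sketch: pure Python gives no real timing guarantees, and actual Python code should use the standard library’s hmac.compare_digest() instead.

```python
def short_circuit_eql(a: bytes, b: bytes) -> bool:
    # Returns at the first mismatch, so the running time depends on how
    # many leading bytes of the two inputs agree.
    if len(a) != len(b):
        return False
    for x, y in zip(a, b):
        if x != y:
            return False
    return True

def constant_time_eql(a: bytes, b: bytes) -> bool:
    # Accumulates the XOR of every byte pair and only decides at the end,
    # so the loop always runs over the full length.
    if len(a) != len(b):
        return False
    diff = 0
    for x, y in zip(a, b):
        diff |= x ^ y
    return diff == 0
```

Both return the same answers; they differ only in how much work they do before answering, which is exactly what a timing attack measures.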

If the MAC that I compute matches the MAC that was provided, we know that the message hasn’t been tampered with. At that point we can simply XOR the encrypted text with the key stream to get the original value back.

Changing even a single bit of the cipher text, the nonce, or the MAC will cause decryption to fail. What is more, note that we don’t do any decryption until we have validated the provided MAC against the cipher text. To do anything else would invite cryptographic doom, so it is nice that we were able to avoid it.
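As a quick sanity check of that claim, this hypothetical Python snippet flips each bit of a cipher text and of a nonce in turn and confirms that the HMAC-MD5 tag over nonce || cipher text changes every time. It touches only the MAC, never a keystream, mirroring the verify-first ordering. The fixed MAC key is a simplification; in a scheme like the one above, changing the nonce would also change the derived MAC key.

```python
import hashlib
import hmac

def tag_for(mac_key: bytes, nonce: bytes, cipher: bytes) -> bytes:
    # Tag over nonce || cipher text, as in a verify-before-decrypt scheme.
    return hmac.new(mac_key, nonce + cipher, hashlib.md5).digest()

def flip_bit(data: bytes, bit_index: int) -> bytes:
    # Return a copy of `data` with exactly one bit inverted.
    buf = bytearray(data)
    buf[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(buf)
```

Any single flipped bit produces a different tag, so the comparison fails and the decryption step is never reached.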

In the next post, I’m going to cover timing attacks.