The new blog is up, and it has all been relatively painless. One thing that I learned is that I can centralize servers and drop one of my machines, because it seems like I am using a lot fewer resources. I might even do that tomorrow.

I thought that it would be fun to detail how it worked using feedback from Twitter. In timeline order, here are the tweets and my responses…

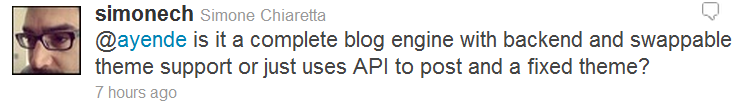

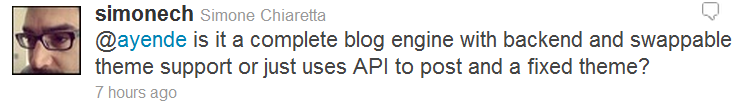

Yes, it is a real blog. Theme support isn't something that I actually care about, so switching themes is mostly a matter of CSS, but it is all there, and quite simple to figure out.

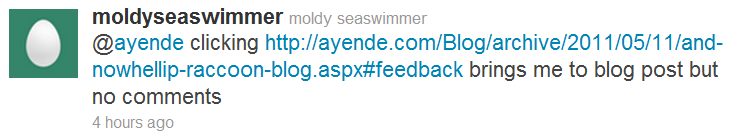

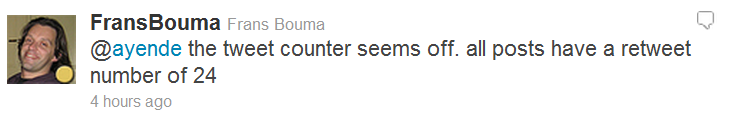

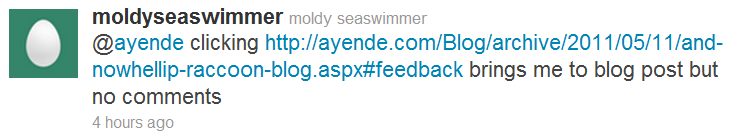

That was during the update process; I had disabled comments on the old blog.

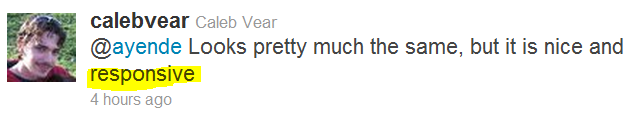

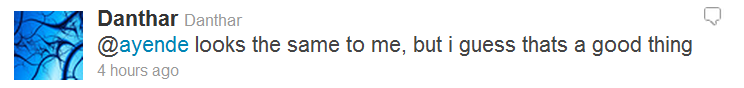

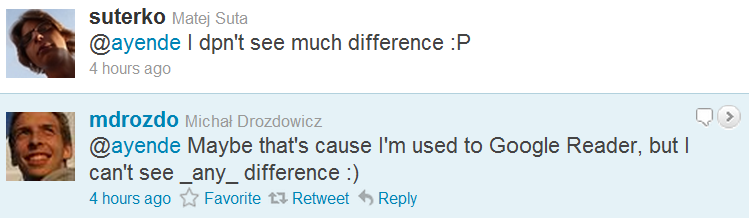

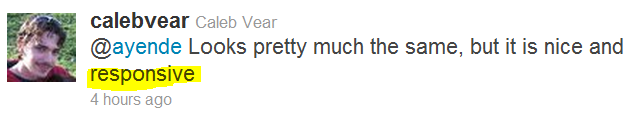

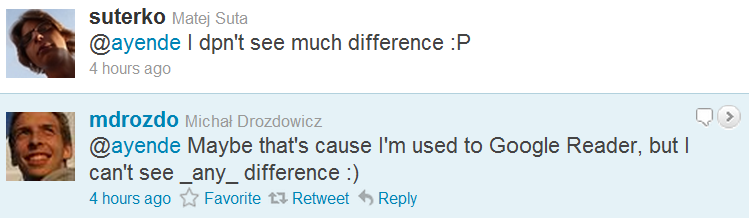

Success! We aimed to give you the exact same feeling, externally. There is a lot of good stuff under the hood, of course, but I'll touch on that later.

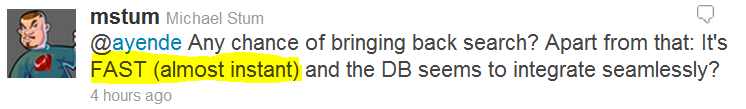

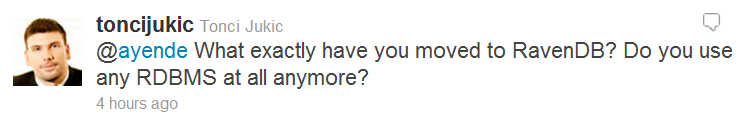

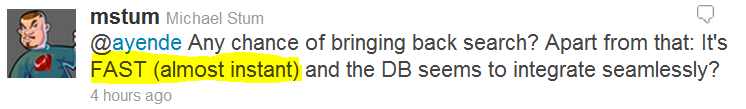

We actually never had search, but we will do some form of search soon, yes. RavenDB integrates very seamlessly, and the code is beautiful to work with.

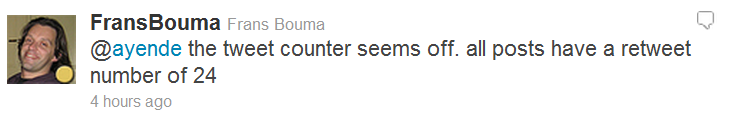

Yes, it was counting the root URL, not the post URL. It was actually surprisingly complicated to solve, because I ran into a problem with string vs. MvcHtmlString that caused me some confusion. I understand why it happens; it just brings back bad memories of C++ and std::string, cstr, BSTR, etc.

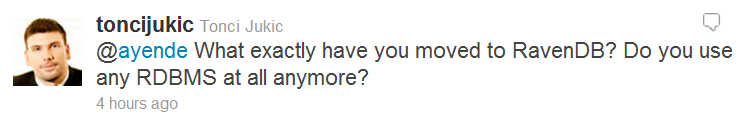

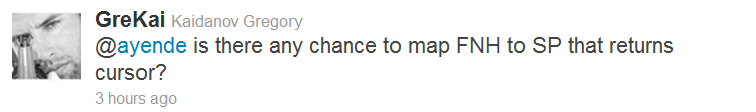

Working with RavenDB is a pleasure, and I try to avoid an RDBMS when I can.

That was the goal.

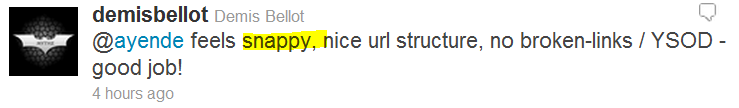

Yes, we worked hard to ensure that there are no broken links, and I think we actually managed to get it right.

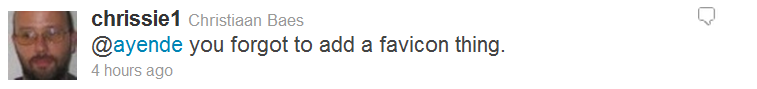

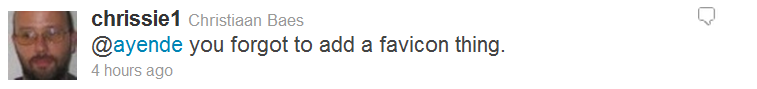

I never thought to do so, but it is a great idea, and it is now implemented.

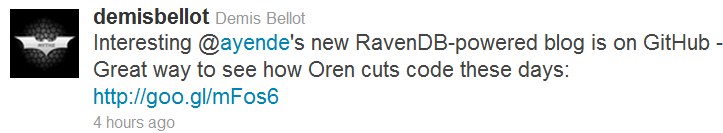

Agreed, that would be a good idea. Go right ahead: https://github.com/ayende/RaccoonBlog/

Happy to hear it.

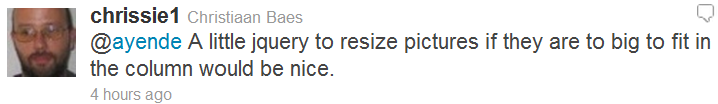

I tend to fix minor stuff right away; it is there now.

Success!

Indeed…

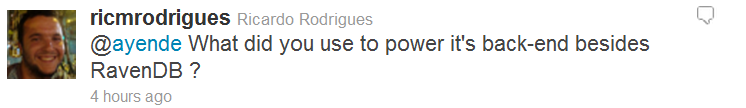

ASP.NET MVC 3 for the UI, and some very minimal infrastructure.

I sense a theme here…

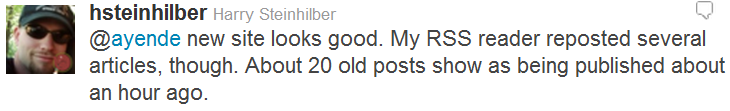

Yes, we use different ids in the RSS feed, so a lot of items showed up again as new. It is a one-time thing, and it wasn't worth the effort to maintain the old ids.
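Feed readers generally decide whether an item is new by its id (the `<guid>` in RSS 2.0), so switching to a different id scheme makes every item in the feed look unread exactly once. Here is a minimal Python sketch of that effect. The id formats in `old_guid`/`new_guid` and the `new_items` helper are hypothetical illustrations, not RaccoonBlog's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedItem:
    guid: str  # the identifier a feed reader uses to recognize an item it has already seen
    title: str


def new_items(seen_guids: set[str], feed: list[FeedItem]) -> list[FeedItem]:
    """Return the items a reader would show as unread: any item whose guid it has not seen."""
    return [item for item in feed if item.guid not in seen_guids]


def old_guid(post_id: int) -> str:
    # Hypothetical id scheme of the old blog engine.
    return f"old-engine/{post_id}"


def new_guid(post_id: int) -> str:
    # Hypothetical id scheme of the new blog engine.
    return f"posts/{post_id}"


# A reader that already saw posts 1-3 under the old ids...
seen = {old_guid(i) for i in range(1, 4)}
# ...receives the same three posts under the new ids.
feed = [FeedItem(new_guid(i), f"Post {i}") for i in range(1, 4)]

print(len(new_items(seen, feed)))  # all three reappear as unread
seen |= {item.guid for item in feed}
print(len(new_items(seen, feed)))  # once read, nothing shows up again
```

This is why the reappearance is a one-time thing: after the reader records the new ids, later fetches of the same posts no longer look new.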

Um, what are you doing in this post?

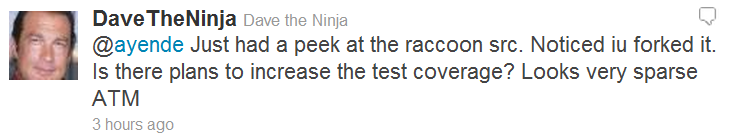

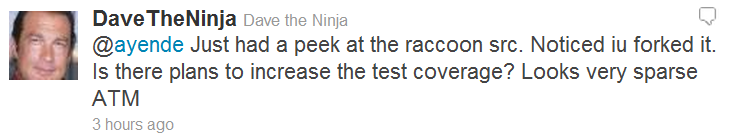

Sparse is one way of putting it; it is a lot closer to nonexistent. We will probably create some sort of system tests, but I don't see a lot of value in those sorts of tests, mainly because most of the code is basically user interface, and that is usually hard to test.

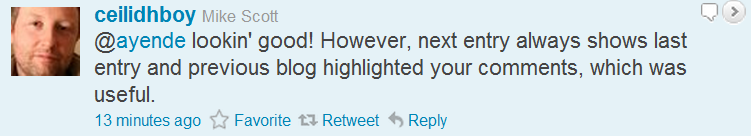

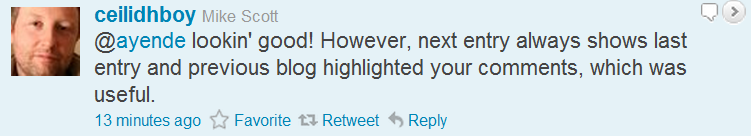

I forgot to add the appropriate CSS there; I fixed that. I also had an issue with desc/asc ordering for the next/prev posts. Fixed now, thanks.
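The ordering matters because "previous" and "next" use opposite directions. The previous post is the latest post published *before* the current one (order descending, take the first). The next post is the earliest post published *after* it (order ascending, take the first). Get the direction backwards and you jump to the oldest or newest post on the blog instead of the adjacent one. Here is a minimal Python sketch of the idea. The `Post` type and the `previous_post`/`next_post` helpers are hypothetical; the real blog does this with RavenDB queries in C#.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Post:
    title: str
    published_at: datetime


def previous_post(posts: list[Post], current: Post) -> Optional[Post]:
    """Latest post published before `current`: the equivalent of ORDER BY date DESC, take 1."""
    earlier = [p for p in posts if p.published_at < current.published_at]
    return max(earlier, key=lambda p: p.published_at, default=None)


def next_post(posts: list[Post], current: Post) -> Optional[Post]:
    """Earliest post published after `current`: the equivalent of ORDER BY date ASC, take 1."""
    later = [p for p in posts if p.published_at > current.published_at]
    return min(later, key=lambda p: p.published_at, default=None)


posts = [Post(t, datetime(2011, 1, d)) for t, d in [("a", 1), ("b", 2), ("c", 3), ("d", 4)]]
print(previous_post(posts, posts[2]).title)  # adjacent post "b", not the oldest post "a"
print(next_post(posts, posts[1]).title)      # adjacent post "c", not the newest post "d"
```

Using `max`/`min` with `default=None` avoids sorting the whole list and naturally returns `None` at either end of the blog.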

Overall, I am very happy about the new blog engine.

One of the things that people kept bugging me about is that I am not building applications any longer; another annoyance was my current setup for this blog.