This feature is now available on the NHibernate trunk. Please note that it is currently only available when using the Castle Proxy Factory.

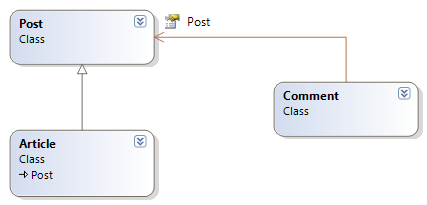

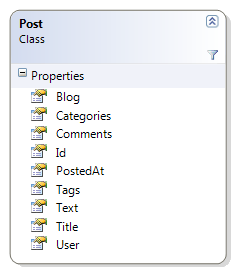

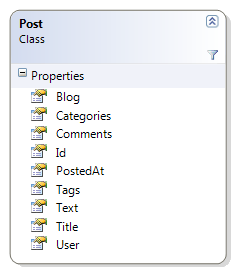

Lazy properties are a very simple feature. Let us go back to my usual blog example and take a look at the Post entity:

As you can see, it is a pretty simple example, but we have a problem. The Text property may contain a lot of text, and we don’t want to load it unless we explicitly ask for it.

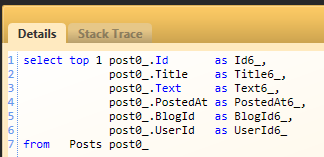

If we try to execute this code:

    var post = session.CreateQuery("from Post")
        .SetMaxResults(1)
        .UniqueResult<Post>();

You can see from the SQL that NHibernate will load the Text property. For large columns (text, images, etc.), the cost of loading the column value can be prohibitive and should be avoided unless absolutely needed.

This new feature allows you to mark a specific property as lazy, like this:

    <property name="Text" lazy="true"/>
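For context, here is a rough sketch of where that element might sit in a complete mapping file. Only the Text property comes from the example; the `assembly` and `namespace` values, the Id and Title members, and the `native` generator are assumptions made for illustration.

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- Hypothetical assembly and namespace names -->
<hibernate-mapping xmlns="urn:nhibernate-mapping-2.2"
                   assembly="Blog"
                   namespace="Blog.Model">
  <class name="Post">
    <!-- Id and Title are assumed members of Post -->
    <id name="Id">
      <generator class="native"/>
    </id>
    <property name="Title"/>
    <!-- The only lazy property: left out of the initial SELECT -->
    <property name="Text" lazy="true"/>
  </class>
</hibernate-mapping>
```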

Once that is done, we can try querying for posts:

    var post = session.CreateQuery("from Post")
        .SetMaxResults(1)
        .UniqueResult<Post>();

    System.Console.WriteLine(post.Text);

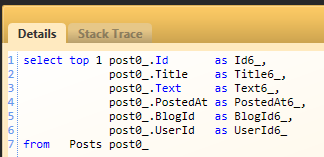

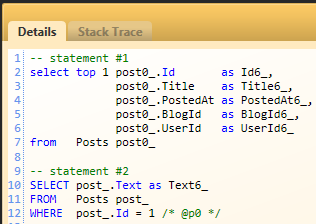

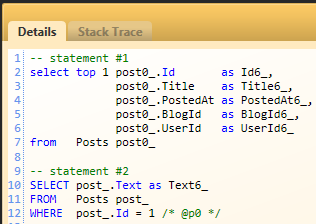

And the resulting SQL is going to be:

Note that we aren’t loading the Text property when we query for the post, and if we inspect the stack trace of the second query, we can see that it is generated from the Console.WriteLine call.

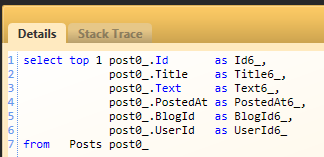

But what if we want to query for posts along with their Text property? Relying on lazy loading here may very well lead to SELECT N+1 if we need to load the Text property of every post. NHibernate provides an HQL hint to allow this:

    var post = session.CreateQuery("from Post fetch all properties")
        .SetMaxResults(1)
        .UniqueResult<Post>();

    System.Console.WriteLine(post.Text);

Which will result in the following SQL:

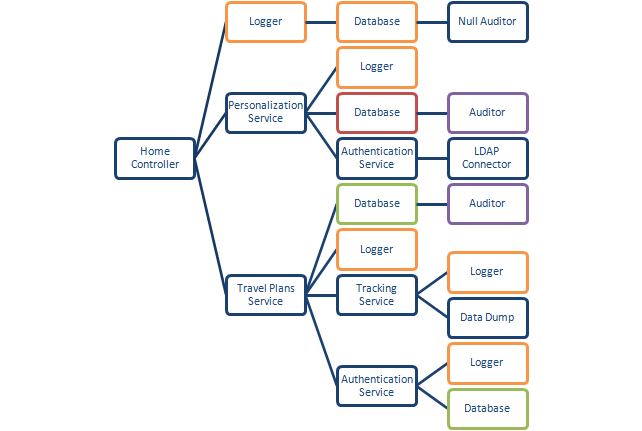

What about multiple lazy properties? NHibernate supports them, but you need to keep one thing in mind: NHibernate will load all of the entity’s lazy properties, not just the one that was accessed. By the same token, you can’t eagerly load just some of an entity’s lazy properties from HQL.
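As an illustration, consider a mapping with two lazy properties. The Attachment property here is hypothetical, as are Id and Title; only Text appears in the example above. With a mapping like this, reading either Text or Attachment for the first time would load both.

```xml
<class name="Post">
  <id name="Id">
    <generator class="native"/>
  </id>
  <property name="Title"/>
  <!-- Both are lazy: touching either one loads the pair together -->
  <property name="Text" lazy="true"/>
  <property name="Attachment" lazy="true"/>
</class>
```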

This feature is mostly meant for special circumstances, such as Person.Image, Post.Text, etc. As usual, be cautious about overusing it.

One last word of caution: this feature is implemented via property interception (and not field interception, as in Hibernate). That was a conscious decision, because we didn’t want to add a bytecode weaving requirement to NHibernate. What this means is that if you mark a property as lazy, it must be a virtual automatic property. If you attempt to access the underlying field value instead of going through the property, you will circumvent the lazy loading of the property and may get unexpected results.
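To make that constraint concrete, here is a minimal sketch of how the Post entity might be declared. Only Text comes from the example in this post; Id and Title are assumed members, added for illustration.

```csharp
public class Post
{
    // Id and Title are hypothetical members, shown only for illustration.
    public virtual int Id { get; set; }
    public virtual string Title { get; set; }

    // Mapped with lazy="true". It is a virtual automatic property, so the
    // generated proxy can override the getter and load the value on first access.
    public virtual string Text { get; set; }
}
```

Because there is no hand-written backing field, code inside the class has no way to read the value other than through the property itself, which keeps every access on the intercepted path.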