A couple of days ago, a fire started in OVH’s datacenter. You can read more about this here:

They use slightly different terminology, but translating that to AWS terminology, an entire “region” is down, with SBG1-4 being the “availability zones” in that region.

For reference, there are some videos from the location that make me very sad. This is what it looked like under active fire:

I’m going to assume that this is a total loss of everything that was in there.

RavenDB Cloud isn’t offering any services in any OVH data centers, but this is a good time to go over the Disaster Recovery Plan for RavenDB and RavenDB Cloud. It is worth noting that the entire data center has been hit, which is the equivalent of an entire AWS region going down.

I’m not sure that this is a fair comparison. It doesn’t look like SBG1-4 are exactly the same thing as AWS availability zones, but it is close enough to draw parallels.

So far, at least, there have been no cases where Amazon has lost an entire region. There were occasions where a whole availability zone was down, but never a complete region. The way Amazon is handling Availability Zones seems to be the most paranoid, with each availability zone distanced “many kilometers” from the others in the same region. Contrast that with the four SBG datacenters that all went down. For Azure, on the other hand, they explicitly call out the fact that availability zones may not provide sufficient cover for DR scenarios. Google Cloud Platform also provides no information on the matter. For that matter, we also have direct criticism on the topic from AWS.

Yesterday, on the other hand, Oracle Cloud had a DNS configuration error that effectively took the entire cloud down. The good news is that this is just an inability to access the cloud, not an actual loss of a region, as was the case with OVH. However, when doing Disaster Recovery Planning, having the entire cloud drop off the face of the earth is also something that you have to consider.

With that background out of the way, let’s consider the impact of losing two availability zones inside AWS, losing an entire region in Azure or GCP, or even losing an entire cloud platform. What would be the impact on a RavenDB cluster running in that scenario?

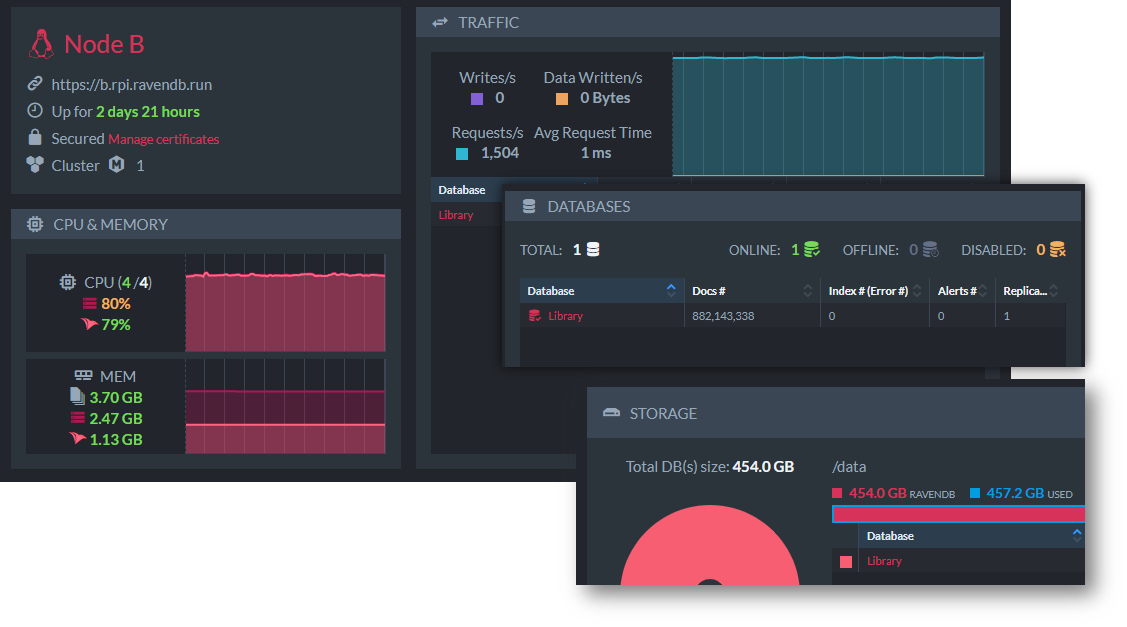

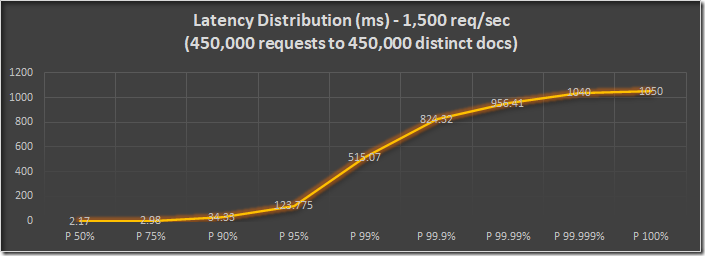

RavenDB is designed to be resilient. Using RavenDB Cloud, we make sure that each of the nodes in the cluster is running in a separate availability zone. If we lose two zones in a region, there is still a single RavenDB instance that can operate. Note that in this case, we won’t have a quorum. That means that certain operations won’t be available (you won’t be able to create new databases, for example), but read and write operations will work normally and your application will fail over silently to the remaining server. When the failed servers recover, RavenDB will update them with all the data that was modified while they were down.
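To make the quorum point concrete, here is a minimal sketch of the arithmetic. It assumes a plain majority rule and is not RavenDB's actual code; the function names and the operation labels are made up for illustration.

```python
from __future__ import annotations


def majority(cluster_size: int) -> int:
    """Smallest number of nodes that forms a strict majority."""
    return cluster_size // 2 + 1


def has_quorum(cluster_size: int, nodes_up: int) -> bool:
    """True when the reachable nodes can still form a majority."""
    return nodes_up >= majority(cluster_size)


def available_operations(cluster_size: int, nodes_up: int) -> set[str]:
    """Simplified model of the behavior described above (labels are hypothetical)."""
    if nodes_up == 0:
        return set()  # nothing left to serve requests
    ops = {"read", "write"}  # a surviving node keeps serving reads and writes
    if has_quorum(cluster_size, nodes_up):
        ops.add("create_database")  # cluster-wide changes need a majority
    return ops
```

For a three node cluster, `majority(3)` is 2, so a single surviving node still reads and writes but cannot make cluster-wide changes until a second node is back.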

The situation with OVH is actually worse than that. In this case, a datacenter is gone. In other words, these nodes aren’t coming back. RavenDB will allow you to perform emergency operations to remove the missing nodes and rebuild the cluster from the single surviving node.

What about the scenario where the entire region is gone? In this case, if there are no more servers for RavenDB to run on, it is going to be down. That is the time to enact the Disaster Recovery Plan. In this case, it means deploying a new RavenDB Cluster to a new region and restoring from backup.

RavenDB Cloud ensures full daily backups as well as hourly incremental backups for all databases, so the amount of data loss will be minimal. That said, where are the backups located?
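As a rough illustration of what “minimal” means with a daily full plus hourly incremental schedule, the sketch below picks the backups needed for a restore and computes how much recent data would be missing. The tuple layout and function names are assumptions for this example, not a RavenDB API, and it ignores the time a backup takes to complete.

```python
from datetime import datetime, timedelta


def restore_chain(backups, disaster_time):
    """Return the latest full backup and every incremental taken after it.

    backups: iterable of (timestamp, kind), kind is "full" or "incremental".
    Only backups completed at or before disaster_time are considered.
    """
    usable = sorted(b for b in backups if b[0] <= disaster_time)
    full_positions = [i for i, b in enumerate(usable) if b[1] == "full"]
    if not full_positions:
        return []  # nothing to restore from
    return usable[full_positions[-1]:]


def data_loss_window(backups, disaster_time):
    """Time between the last restorable backup and the disaster."""
    chain = restore_chain(backups, disaster_time)
    if not chain:
        return None
    return disaster_time - chain[-1][0]
```

With a full backup at midnight and incrementals on the hour, a disaster at 10:40 means restoring the full backup plus ten incrementals and losing the last 40 minutes of writes. Under this model, the loss window is always under an hour.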

By default, RavenDB stores the backups in S3, in the same region as the cluster itself. Amazon S3 has the best durability guarantees in the market. This is beyond just the number of nines that they provide in terms of data durability. A standard S3 object is going to be residing in three separate availability zones. As mentioned, for AWS, we have guarantees about the distance between those availability zones that we haven’t seen from other cloud providers. For that reason, when your cluster resides in AWS, we’ll store the backups on S3 in the same region. For Azure and GCP, on the other hand, we’ll also use AWS S3 for backup storage. For a whole host of reasons, we select a nearby region. So a cluster on Azure US East would store its backups on AWS S3 in US-East-1, for example. And a cluster on Azure in the Netherlands will store its backups on AWS S3 in the Frankfurt region. In addition to all other safeguards, the backups are encrypted, naturally.
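The placement rule can be sketched as a small lookup. This is only an illustration of the rule as described here: the table holds just the two pairings mentioned above, the region identifiers are the providers’ public names for those locations, and `backup_region` is a hypothetical helper, not something RavenDB Cloud exposes.

```python
# Hypothetical table: only the two pairings named in this post.
NEARBY_AWS_REGION = {
    ("azure", "eastus"): "us-east-1",         # Azure US East -> AWS US-East-1
    ("azure", "westeurope"): "eu-central-1",  # Azure Netherlands -> AWS Frankfurt
}


def backup_region(provider: str, region: str) -> str:
    """Pick the AWS S3 region that would hold a cluster's backups."""
    provider = provider.lower()
    if provider == "aws":
        return region  # AWS clusters back up to S3 in their own region
    try:
        return NEARBY_AWS_REGION[(provider, region)]
    except KeyError:
        raise LookupError(
            f"no nearby AWS region listed for {provider}/{region}"
        ) from None
```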

The cloud has been around for 15 years already (amazing, I know) and so far, AWS has had a great history of not suffering catastrophic failures like the one that OVH has run into. Then again, until last week, you could say the same about OVH, but with 20+ years of operations. Part of the Disaster Recovery Process is knowing what risks you are willing to accept. And the purpose of this post is to expand on what we do and how we plan to react to such scenarios, be they ever so unlikely.

RavenDB actually has a number of features that are applicable for handling these sorts of scenarios. They aren’t enabled in the cloud by default, but they are important to discuss for users who need to have business continuity.

- Multi-region or multi-cloud clusters are available. You can set up RavenDB across multiple disparate locations in several manners, but the end result is that you can ensure that you have no single point of failure, while still using RavenDB to its fullest potential. This is commonly used in large applications that are naturally geo-distributed, but it also serves as a way to not put all your eggs in a single basket.

- In addition to the default backup strategy (same AWS region on AWS or nearby AWS region for Azure or GCP), you can set up backups to additional regions.

One of the key aspects of business continuity is how quickly you can go back to normal operations. If you are running a large database, just restoring from backup can take a significant amount of time. If you have a database (or databases) whose backups are in the hundreds of GB range, just the time it takes to get the backups can be measured in many hours, leaving aside the restore costs.
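A back-of-the-envelope calculation shows where those hours come from. The sizes and throughput figures below are made-up assumptions, not measurements, and the helper only covers the download, not the restore itself.

```python
def transfer_hours(backup_gb: float, throughput_mb_per_s: float) -> float:
    """Hours needed just to download a backup at a sustained throughput.

    Uses decimal units (1 GB = 1000 MB) and ignores restore time.
    """
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    seconds = backup_gb * 1000 / throughput_mb_per_s
    return seconds / 3600


# Assumed numbers: a 500 GB backup at a sustained 50 MB/s and at 20 MB/s.
fast = transfer_hours(500, 50)  # a bit under 3 hours
slow = transfer_hours(500, 20)  # close to 7 hours
```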

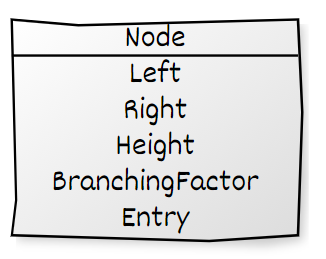

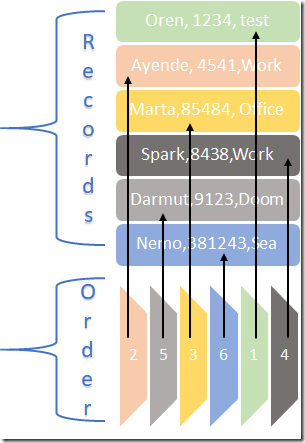

For that reason, RavenDB also supports the notion of an offsite observer. That can be a single isolated node or a whole cluster. We can take advantage of the fact that the observer is not in active use and under-provision it. When we need to switch over, we can quickly allocate additional resources to ramp it up to production grade. For example, let’s assume that we have a cluster running in the Azure Northern Europe region, composed of 3 nodes with 8 cores each. We have also set up an observer cluster in the Azure Norway East region. Instead of having to allocate a cluster of 3 nodes with 8 cores each, we can allocate a much smaller size, say 2 cores only (paying less than a third of the original cluster cost as a premium). In the case of disaster, we can respond quickly and within a matter of minutes, the Norway East cluster will be expanded so each of the nodes will have 8 cores and can handle full production traffic.
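The “less than a third” figure is easy to check under one simplifying assumption: that the price scales linearly with the total number of cores. Real pricing also depends on memory, disk and network, so treat this as a rough sketch with a hypothetical helper name.

```python
def observer_cost_ratio(prod_nodes: int, prod_cores: int,
                        observer_nodes: int, observer_cores: int) -> float:
    """Observer cost as a fraction of production cost.

    Assumes cost is proportional to total core count (a simplification).
    """
    return (observer_nodes * observer_cores) / (prod_nodes * prod_cores)


# The example above: 3 nodes x 8 cores in production, 3 nodes x 2 cores observing.
cluster_observer = observer_cost_ratio(3, 8, 3, 2)   # 0.25
# A single isolated 2-core observer node would be cheaper still.
single_observer = observer_cost_ratio(3, 8, 1, 2)
```

Under that assumption, the observer cluster adds about a quarter of the production cost, which is consistent with the “less than a third” estimate.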

Naturally, RavenDB is likely to be only a part of your strategy. There is a lot more to ensuring that your users won’t notice that something is happening while your datacenter is on fire, but at least as it relates to your database, you know that you are in good hands.