Production postmortem: high CPU when there is little work to be done

A RavenDB user called us with a very strange issue. They were running RavenDB 3.5 and had run out of disk space. That was expected, since they are storing a lot of data. Instead of simply increasing the disk size, they decided to take the time to increase the machine’s overall capacity. They moved from a 16-core machine to a 48-core machine with a much larger disk.

After the move, they found something worrying: RavenDB now used a lot more CPU. Where previously the average load was around 60% CPU utilization, they were now looking at 100% utilization on a much more powerful machine. That didn’t make sense to us, so we set out to figure out what was going on. A couple of mini dumps later, we had our answer.

It got really strange once we noticed the following:

- Under minimal load / idle – no CPU at all

- Under high load – CPU utilization around 40%

- Under medium load – CPU utilization at 100%

That was strange. When there isn’t enough load, we are at 100%? What gives?

The culprit was simple: BlockingCollection.

“Huh”, I can hear you say. “How can that be?”

A BlockingCollection should not be the cause of high CPU, right? It is in the name: it is blocking. Here is what happened. That blocking collection is used to manage tasks, and by default we spawn threads to handle them, at twice the number of available cores. All of these threads sit in a loop, calling Take() on the blocking collection.

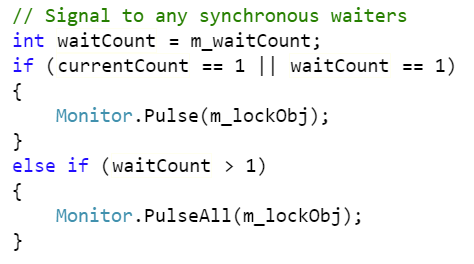
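The worker setup described above might look roughly like this. It is a minimal sketch, not RavenDB's actual code: `WorkerPoolSketch` and `StartWorkers` are hypothetical names, and it uses `GetConsumingEnumerable()`, which blocks the same way a `Take()` loop does but ends cleanly once `CompleteAdding()` is called.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;

public static class WorkerPoolSketch
{
    // Starts workerCount threads that all block on the same queue.
    // (Hypothetical helper; the post only says the default is 2x the core count.)
    public static Thread[] StartWorkers(BlockingCollection<Action> queue, int workerCount)
    {
        var threads = new Thread[workerCount];
        for (int i = 0; i < workerCount; i++)
        {
            threads[i] = new Thread(() =>
            {
                // Blocks while the queue is empty; the loop exits after
                // CompleteAdding() has been called and the queue is drained.
                foreach (var work in queue.GetConsumingEnumerable())
                    work();
            })
            { IsBackground = true };
            threads[i].Start();
        }
        return threads;
    }
}
```

Calling `StartWorkers(queue, Environment.ProcessorCount * 2)` on a 48-core machine would park 96 threads on the one collection.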

The blocking collection is implemented internally using a SemaphoreSlim, calling Wait() and Release() on it as needed. Here is the Release() method notifying waiters:

What you can see is that if we have more than a single waiter, we’ll notify all of them. The system in question had 48 cores, so we had 96 threads waiting for work. When we add an item to the collection, all of them will wake and try to pull an item from the collection. One of them will succeed, and the rest will not.

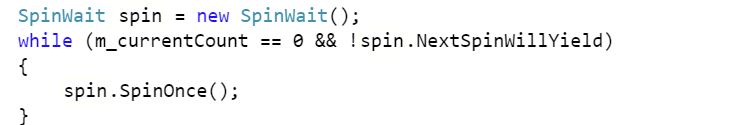

Here is the relevant code:

As you can imagine, that means that we have 96 threads waking up and spending a full cycle just spinning. That is the cause of our high CPU.
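To make the cost concrete, here is a toy model of the two wake-up policies. It is not the SemaphoreSlim source: it uses `Monitor.Pulse` and `Monitor.PulseAll` directly as stand-ins for "wake one" and "wake everyone", and `WakeStormSketch` and `CountWastedWakeups` are names invented for this sketch. It parks a number of waiters, adds a single item, and counts how many threads woke up only to find nothing to take.

```csharp
using System;
using System.Threading;

public static class WakeStormSketch
{
    // Adds one item for `waiters` parked threads and returns how many
    // of the resulting wake-ups found nothing to take.
    public static int CountWastedWakeups(int waiters, bool wakeAll)
    {
        object gate = new object();
        int items = 0, waiting = 0, wakeups = 0, wasted = 0;
        bool stop = false;

        var threads = new Thread[waiters];
        for (int i = 0; i < waiters; i++)
        {
            threads[i] = new Thread(() =>
            {
                lock (gate)
                {
                    while (true)
                    {
                        waiting++;
                        Monitor.Wait(gate);   // releases the lock while parked
                        waiting--;
                        if (stop) return;     // shutdown wake-up, not counted
                        wakeups++;
                        if (items > 0) items--;  // this thread won the item
                        else wasted++;           // woke up for nothing
                    }
                }
            });
            threads[i].Start();
        }

        // Test-harness polling: wait until every thread is parked in Wait().
        SpinWait.SpinUntil(() => { lock (gate) return waiting == waiters; });

        lock (gate)
        {
            items = 1;
            if (wakeAll) Monitor.PulseAll(gate);  // wake every waiter
            else Monitor.Pulse(gate);             // wake only one waiter
        }

        // Let every woken thread finish its check before shutting down.
        int expected = wakeAll ? waiters : 1;
        SpinWait.SpinUntil(() => { lock (gate) return wakeups == expected; });

        lock (gate) { stop = true; Monitor.PulseAll(gate); }
        foreach (var t in threads) t.Join();
        return wasted;
    }
}
```

In this model, the wake-all policy wastes one wake-up per waiter minus the single winner, so 96 waiters would mean 95 pointless wake-ups for every item added, while the wake-one policy wastes none.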

If we have a lot of work, then the threads are busy actually doing work, but if there is just enough work to wake the threads, but not enough to give them something to do, they’ll set forth to see how hot they can make the server room.

The fix was to reduce the number of threads waiting on this queue to a more reasonable number.
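One way to express such a cap is sketched below. The post does not say which number was chosen, so `maxWorkers` is a hypothetical tuning knob and `WorkerSizing` is a name made up for this example.

```csharp
using System;

public static class WorkerSizing
{
    // Twice the core count, as in the default described above, but never
    // more than maxWorkers (hypothetical cap) and never fewer than one.
    public static int WorkerCount(int processorCount, int maxWorkers)
    {
        return Math.Max(1, Math.Min(processorCount * 2, maxWorkers));
    }
}
```

With a cap of 16, for instance, a 48-core machine would get 16 waiting threads instead of 96, while a 4-core machine would still get its usual 8.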

The actual problem was fixed in .NET Core, where the SemaphoreSlim will only wake as many threads as it has items to free, which will avoid the spin storm that this code generates.

More posts in "Production postmortem" series:

- (07 Apr 2025) The race condition in the interlock

- (12 Dec 2023) The Spawn of Denial of Service

- (24 Jul 2023) The dog ate my request

- (03 Jul 2023) ENOMEM when trying to free memory

- (27 Jan 2023) The server ate all my memory

- (23 Jan 2023) The big server that couldn’t handle the load

- (16 Jan 2023) The heisenbug server

- (03 Oct 2022) Do you trust this server?

- (15 Sep 2022) The missed indexing reference

- (05 Aug 2022) The allocating query

- (22 Jul 2022) Efficiency all the way to Out of Memory error

- (18 Jul 2022) Broken networks and compressed streams

- (13 Jul 2022) Your math is wrong, recursion doesn’t work this way

- (12 Jul 2022) The data corruption in the node.js stack

- (11 Jul 2022) Out of memory on a clear sky

- (29 Apr 2022) Deduplicating replication speed

- (25 Apr 2022) The network latency and the I/O spikes

- (22 Apr 2022) The encrypted database that was too big to replicate

- (20 Apr 2022) Misleading security and other production snafus

- (03 Jan 2022) An error on the first act will lead to data corruption on the second act…

- (13 Dec 2021) The memory leak that only happened on Linux

- (17 Sep 2021) The Guinness record for page faults & high CPU

- (07 Jan 2021) The file system limitation

- (23 Mar 2020) high CPU when there is little work to be done

- (21 Feb 2020) The self signed certificate that couldn’t

- (31 Jan 2020) The slow slowdown of large systems

- (07 Jun 2019) Printer out of paper and the RavenDB hang

- (18 Feb 2019) This data corruption bug requires 3 simultaneous race conditions

- (25 Dec 2018) Handled errors and the curse of recursive error handling

- (23 Nov 2018) The ARM is killing me

- (22 Feb 2018) The unavailable Linux server

- (06 Dec 2017) data corruption, a view from INSIDE the sausage

- (01 Dec 2017) The random high CPU

- (07 Aug 2017) 30% boost with a single line change

- (04 Aug 2017) The case of 99.99% percentile

- (02 Aug 2017) The lightly loaded trashing server

- (23 Aug 2016) The insidious cost of managed memory

- (05 Feb 2016) A null reference in our abstraction

- (27 Jan 2016) The Razor Suicide

- (13 Nov 2015) The case of the “it is slow on that machine (only)”

- (21 Oct 2015) The case of the slow index rebuild

- (22 Sep 2015) The case of the Unicode Poo

- (03 Sep 2015) The industry at large

- (01 Sep 2015) The case of the lying configuration file

- (31 Aug 2015) The case of the memory eater and high load

- (14 Aug 2015) The case of the man in the middle

- (05 Aug 2015) Reading the errors

- (29 Jul 2015) The evil licensing code

- (23 Jul 2015) The case of the native memory leak

- (16 Jul 2015) The case of the intransigent new database

- (13 Jul 2015) The case of the hung over server

- (09 Jul 2015) The case of the infected cluster

Comments

I was just reading Raymond Chen's blog before coming here, and this issue reminded me of the topic he had today, which was ultimately about waking too many threads at once and wasting CPU cycles.

https://devblogs.microsoft.com/oldnewthing/20200323-00/?p=103584

Interesting how the worlds collide on a topic sometimes.

Great post, by the way. So BlockingCollection in the full framework has this inefficiency, but Core is smarter. I can appreciate why MS wouldn't want to backport that kind of change. Just another reason for me to take the plunge and move our app to Core in the next few months.
