Production postmortem: The evil licensing code

A customer gave us a call about a failure they were experiencing in their production environment. For some reason, they had not installed the license that they purchased, and when they tried to install it, RavenDB would not run.

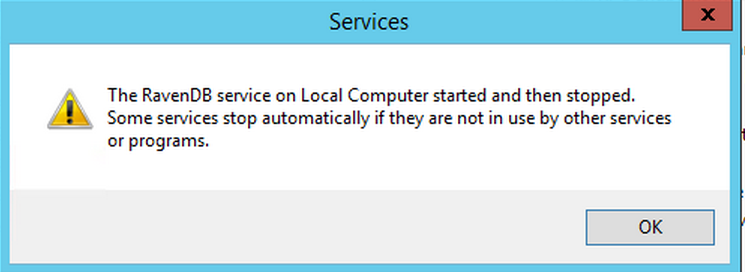

There is what this looked like:

Once I had all those details, it was pretty easy to figure out what was going on. I asked the client to send me the Raven.Server.exe.config file, just to verify it, and sure enough, here are the problematic lines:

    <add key="Raven/AnonymousAccess" value="Admin"/>
    <add key="Raven/Licensing/AllowAdminAnonymousAccessForCommercialUse" value="false" />

This is the default configuration, and this failure is actually the expected and desired behavior.

What is going on? This customer was running RavenDB in development mode, without a license. That means that the server is open to all. When you install a license, that is a pretty strong indication that you are using RavenDB in production. It is actually common to see users installing the development mode in production and registering the license at a later date, for various reasons.

The problem is that, at least for a while, they were running in “everyone is admin” mode, which is great for development, but horrible for production. If you install RavenDB for production usage (by providing a license during the setup process), it will set itself up in locked down mode, so only users explicitly granted access can get to it. But if you started with a development installation, then added the license…

It is common for customers to forget that setting, or to be unaware of it entirely. And not changing it is going to end up with a production installation that is open to the whole wide world.

When that happens, that tends to be… bad. Over 30,000 instances that are wide open bad.

Because of that, if you are running with a license, and you had previously installed the development mode, you need to make a choice. Either you set up anonymous access so only authorized people can access the database, or you explicitly decide to grant everyone access, likely because you are already running in a secured environment.
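To make the two choices concrete, here is a rough sketch of what each might look like in Raven.Server.exe.config. The key names are the ones from the customer's file above. The `None` value for `Raven/AnonymousAccess` is my assumption about the most locked-down setting, so check the configuration documentation for your version before relying on it.

Locking the server down, so only authenticated users get in:

```xml
<appSettings>
  <!-- Assumed value: "None" is taken here to mean no anonymous access at all -->
  <add key="Raven/AnonymousAccess" value="None"/>
</appSettings>
```

Or explicitly accepting anonymous admin access on a licensed server:

```xml
<appSettings>
  <!-- Everyone is admin; only sensible if the network itself is already secured -->
  <add key="Raven/AnonymousAccess" value="Admin"/>
  <!-- Explicit opt-in: flips the "false" from the original file to "true" -->
  <add key="Raven/Licensing/AllowAdminAnonymousAccessForCommercialUse" value="true"/>
</appSettings>
```

Either way, the point is that the decision is written down in the config file, rather than inherited by accident from a development install.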

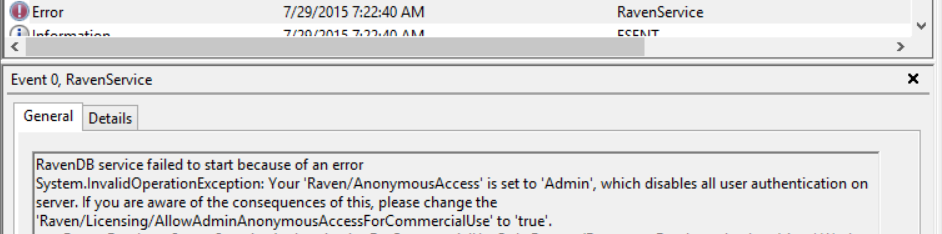

Error reporting from services is a bit hard, because there is no good way to send error messages to the service manager. But in the event log, we can see the actual error with the full details.

More posts in "Production postmortem" series:

- (29 Jul 2015) The evil licensing code

Comments

If so many customers trip over this and do it wrong, could it be that your approach needs to be thought over?

Daniel,

Where did you get "many customers" tripping over this?

In the time we have had this feature, it caused one support issue.

You could do Environment.Exit(1) during startup, so the message would be less benign and would show "The service failed to start", hopefully triggering the user to check the event log.
