Production postmortem: The case of 99.99% percentile

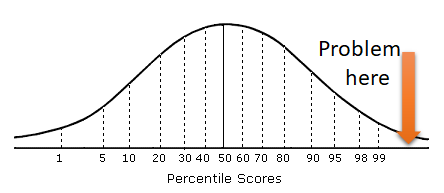

A customer called us with a problem. For the most part, RavenDB was very well behaved, but they were worried about the 99.99th percentile of request duration. While the 99.9th percentile was excellent (a few milliseconds), the 99.99th was measured in seconds.
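To see how the two percentiles can diverge this sharply, here is a minimal sketch in Python. The workload numbers are made up for illustration (they are not the customer's data), and `percentile` is a hypothetical helper using the simple nearest-rank method, not anything RavenDB ships.

```python
import math

def percentile(durations_ms, p):
    """Nearest-rank percentile of a list of request durations (in ms)."""
    if not durations_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(durations_ms)
    # Rank of the sample that covers p percent of all samples (1-based).
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[min(rank, len(ordered)) - 1]

# Hypothetical workload: 100,000 requests at 2 ms each,
# except 50 requests (0.05%) that got stuck behind a 3 second pause.
durations = [2.0] * 99_950 + [3000.0] * 50

p999 = percentile(durations, 99.9)
p9999 = percentile(durations, 99.99)
print(f"99.9th: {p999} ms, 99.99th: {p9999} ms")
```

With these assumed numbers, only one request in two thousand is slow, which is too rare to move the 99.9th percentile but more than enough to dominate the 99.99th.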

Usually, this points to one particularly slow request, and we can handle it by figuring out what is slow about that request and fixing it. A common cause is a request that returns a lot of unnecessary data, such as large full documents, when it needs just a few fields.

In this case, however, our metrics told us that the problem was pretty widespread. There wasn’t one particular slow request. Rather, at what appeared to be random times, certain requests would be slow. We also realized that it wasn’t a particular kind of request that was slow, but all requests within a given time period.
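One way to tell "a slow request" apart from "everything is slow at the same time" is to bucket requests by time and look for windows where most requests, across different endpoints, were slow. The following is a rough sketch of that idea, not the tooling we actually used. The tuple layout, the thresholds, and the `slow_windows` name are all assumptions for illustration.

```python
from collections import defaultdict

def slow_windows(requests, window_s=1.0, slow_ms=1000.0, min_fraction=0.8):
    """Return start times (seconds) of windows in which most requests were slow.

    requests: iterable of (timestamp_s, endpoint, duration_ms) tuples.
    A window is flagged only if more than one distinct endpoint was slow,
    which points at a process-wide stall rather than one expensive request.
    """
    buckets = defaultdict(list)
    for timestamp_s, endpoint, duration_ms in requests:
        buckets[int(timestamp_s // window_s)].append((endpoint, duration_ms))

    flagged = []
    for index in sorted(buckets):
        entries = buckets[index]
        slow = [endpoint for endpoint, duration_ms in entries if duration_ms >= slow_ms]
        if len(slow) / len(entries) >= min_fraction and len(set(slow)) > 1:
            flagged.append(index * window_s)
    return flagged

# Hypothetical log: one isolated slow request in the first second,
# then three different endpoints all stalling during the sixth second.
sample = [
    (0.1, "/docs", 2.0), (0.4, "/queries", 3.0), (0.9, "/docs", 2500.0),
    (5.1, "/docs", 2100.0), (5.2, "/queries", 1900.0), (5.7, "/bulk", 2300.0),
]
print(slow_windows(sample))
```

A lone slow request does not trip the check, while a window in which unrelated endpoints all stall does. That second pattern is the one that suggests looking at the process as a whole rather than at any single request.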

That hinted quite strongly at the problem: it was very likely a GC issue that caused a long pause. We still had to do some work to rule out other factors, such as I/O or noisy neighbors, but we narrowed it down to GC as the root cause.

The problem in this case was that the customer was running multiple databases in the same RavenDB process, and each of them was fairly large. In total, the RavenDB process was using around 60 GB of managed memory. At that size, anything that triggers a GC can cause a significant pause and impact operations.

The solution in this case was to break that into multiple separate processes, one for each database. In this manner, we no longer had a single managed heap that the GC had to traverse. Each heap was much smaller, and the GC pauses were both much shorter and spaced much further apart.

Comments

Maybe this is a good testcase for Project Snowflake https://www.microsoft.com/en-us/research/publication/project-snowflake-non-blocking-safe-manual-memory-management-net/#

It would be interesting to hear what those gigs of RAM are composed of, in terms of both short- and long-lived objects. I know some of this might be private knowledge but I would imagine you're careful to use as much RAM as possible and use it to the greatest effect.

Do the various flavours of GC help at all with this? Server vs Workstation. Concurrent or not. I suspect you're using server and concurrent but am still curious if you've tried doing regular GC compaction anyway, and if you've tried asking it to do the large object heap or not at the same time.

Pop,

We are looking at Snowflake very seriously.

It is something that could really help us control what is going on, and that is GOOD.

Rik,

We are assuming that the split processes are using roughly the same amount of memory, actually, or probably even a bit more. The saving here is that when a GC runs, it has a lot less to do and doesn't impact everything at once.

Ian,

We are already running in server mode, so I don't think it would matter too much. And we aren't compacting the LOH at all.
