Production postmortem: The allocating query

A customer was experiencing large memory spikes in some cases, and we were looking into the allocation patterns of some of the queries that were involved. One of the things that popped up was a query that allocated just under 30GB of managed memory during its processing.

Let me repeat that, because it bears repeating. That query allocated 30(!) GB(!) during its execution. Now, that doesn’t mean that it was consuming 30 GB at any one time; that is just the total of the allocations involved. Most of that memory was immediately discarded during the operation. But 30 GB of garbage to clean up puts a lot of pressure on the system. We took a closer look at the offending query. It looked something like this:

from index 'Notifications/RoutingAndPriority'

where startsWith(Route, $routeKeyPrefix)

order by Priority desc

That does not seem like a query that should be all that expensive. But details matter, so we dove into this. For this particular query, the routes are hierarchical structures that are unique for each message. Something like:

- notifications/traffic/new-york-city/67a81019-941b-4d04-a0db-0559ed45343c

- notifications/emergency/las-vegas/0a8e18fb-563b-4b6a-8e93-e10e08239656

And the queries that were generated were using the city & topic to filter the information that they were interested in.

The customer in question had a lot of notifications going on at all times. And each one of their Routes was unique. Internally, RavenDB uses Lucene (currently) to handle searches, and Lucene uses an inverted index to execute queries.

The usual way to think about it is like this:

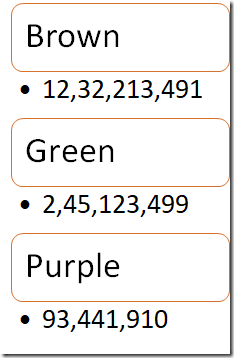

We have a list of terms (Brown, Green & Purple) and each of them has a list of the matching documents that contain the particular term.
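To make the structure concrete, here is a minimal C# sketch of an inverted index. It is a toy in-memory model, not how Lucene stores its data; the `InvertedIndex` class, the `Build` method and the sample documents are all made up for illustration.

```csharp
using System;
using System.Collections.Generic;

// Toy documents (made-up data): document id -> the terms it contains.
var documents = new Dictionary<int, string[]>
{
    [1] = new[] { "Brown", "Green" },
    [2] = new[] { "Green" },
    [3] = new[] { "Purple", "Brown" },
};

var index = InvertedIndex.Build(documents);

// Prints:
//   Brown: 1, 3
//   Green: 1, 2
//   Purple: 3
foreach (var (term, docIds) in index)
    Console.WriteLine($"{term}: {string.Join(", ", docIds)}");

// Hypothetical helper, for illustration only.
static class InvertedIndex
{
    // Maps each term to the sorted list of ids of the documents containing it.
    public static SortedDictionary<string, List<int>> Build(
        IReadOnlyDictionary<int, string[]> documents)
    {
        var index = new SortedDictionary<string, List<int>>(StringComparer.Ordinal);
        foreach (var (docId, terms) in documents)
        {
            foreach (var term in terms)
            {
                if (!index.TryGetValue(term, out var postings))
                    index[term] = postings = new List<int>();
                postings.Add(docId);
            }
        }

        // Keep each postings list ordered by document id.
        foreach (var postings in index.Values)
            postings.Sort();

        return index;
    }
}
```

The terms are kept sorted, which is what makes a prefix scan cheap to start: all the terms sharing a prefix sit next to each other.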

Issuing a prefix query is then easy: scan all the terms that match the prefix and return their results. This is indeed what Lucene does. However, while doing so, it runs a nested loop: for each term that matches the prefix, it walks the list of documents that contain that term.

Pay close attention to what is actually happening here. There are two enumerators that we work with: one for the terms of the field and one for the documents of a specific term.
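The shape of that loop, with its two enumerators, can be sketched in C# roughly as follows. This is a toy model over a sorted dictionary and deliberately does not use the Lucene API; `PrefixQuery.Run` and the shortened route ids are hypothetical.

```csharp
using System;
using System.Collections.Generic;

// Toy inverted index for a single field: term -> ids of matching documents.
// The route suffixes are shortened, made-up ids.
var terms = new SortedDictionary<string, int[]>(StringComparer.Ordinal)
{
    ["notifications/emergency/las-vegas/0a8e18fb"] = new[] { 2 },
    ["notifications/traffic/new-york-city/67a81019"] = new[] { 1 },
    ["notifications/traffic/new-york-city/9f3c2d10"] = new[] { 3 },
};

var (matches, termsVisited) =
    PrefixQuery.Run(terms, "notifications/traffic/new-york-city/");

// Prints: 2 documents matched, 2 terms visited
Console.WriteLine($"{matches.Count} documents matched, {termsVisited} terms visited");

// Hypothetical helper, for illustration only.
static class PrefixQuery
{
    public static (List<int> Matches, int TermsVisited) Run(
        SortedDictionary<string, int[]> index, string prefix)
    {
        var matches = new List<int>();
        var termsVisited = 0;

        // Outer enumerator: walks the terms of the field in sorted order.
        foreach (var entry in index)
        {
            // Per the post, Lucene allocates a new string for each term at
            // this step. In this toy model the key is already a string.
            string term = entry.Key;

            if (string.CompareOrdinal(term, prefix) < 0)
                continue; // before the prefix range (a real index would seek)
            if (!term.StartsWith(prefix, StringComparison.Ordinal))
                break;    // terms are sorted, so nothing later can match

            termsVisited++;

            // Inner enumerator: walks the documents of this one term.
            foreach (var docId in entry.Value)
                matches.Add(docId);
        }

        return (matches, termsVisited);
    }
}
```

Note that the outer loop runs once per matching term, so with unique routes it runs once per document.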

All of this is perfectly reasonable, but there is an issue. What happens when you have a lot of unique values? Well, then Lucene will have a lot of iterations of the loop. In this case, each term has just a single match, and Lucene is pretty good at optimizing search by specific term.

The actual problem is that Lucene allocates a string instance for each term. If we have 30 million notifications for New York’s traffic, that means that we’ll allocate 30 million strings during the processing of the query. We aren’t retaining these strings, mind. They’ll be cleaned up by the GC quickly enough, but that is an additional cost that we don’t actually want.
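To get a feel for that cost, here is a small C# sketch that materializes one short-lived string per term and measures how much the current thread allocated while doing so. It does not reproduce Lucene’s internals or the 30 GB figure; `termCount` is an arbitrary, scaled-down number, and decoding the term from UTF-8 bytes is only an assumed stand-in for whatever the real term enumerator does.

```csharp
using System;
using System.Text;

// Arbitrary, scaled-down stand-in for the 30 million terms in the text.
const int termCount = 100_000;

// One route, held as raw bytes, the way a term might sit in an index file.
byte[] raw = Encoding.UTF8.GetBytes(
    "notifications/traffic/new-york-city/67a81019-941b-4d04-a0db-0559ed45343c");

long before = GC.GetAllocatedBytesForCurrentThread();
long totalChars = 0;

for (int i = 0; i < termCount; i++)
{
    // A fresh string per term, used once and then dropped for the GC.
    string term = Encoding.UTF8.GetString(raw);
    totalChars += term.Length;
}

long allocated = GC.GetAllocatedBytesForCurrentThread() - before;

Console.WriteLine($"{termCount:N0} terms, {totalChars:N0} chars in total");
Console.WriteLine($"{allocated / (1024.0 * 1024.0):F1} MB allocated");
Console.WriteLine($"~{allocated / termCount} bytes per term");
```

None of those strings survive the loop, which matches the situation described above: nothing is retained, but the GC still has to deal with every one of them.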

Luckily, in this case, there is a much simpler solution. Given that the pattern of the route is known, we can skip the unique portion of the route. That means that in our index, we’ll do something similar to:

Route = doc.Route.Substring(0, doc.Route.LastIndexOf('/') + 1)

Once that is done, the number of unique terms that the query has to go through becomes negligible. There are no more allocations galore to observe, and overall system performance is much improved.
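As a quick sanity check of the effect, the following C# sketch applies the same expression to a few sample routes and counts the distinct terms before and after. The `RouteTerms` helper and the second New York route are made up for illustration.

```csharp
using System;
using System.Linq;

var routes = new[]
{
    "notifications/traffic/new-york-city/67a81019-941b-4d04-a0db-0559ed45343c",
    "notifications/traffic/new-york-city/11111111-2222-3333-4444-555555555555", // made-up id
    "notifications/emergency/las-vegas/0a8e18fb-563b-4b6a-8e93-e10e08239656",
};

var indexed = routes.Select(RouteTerms.StripUniqueSuffix).ToList();

// Prints:
//   distinct terms before: 3
//   distinct terms after:  2
Console.WriteLine($"distinct terms before: {routes.Distinct().Count()}");
Console.WriteLine($"distinct terms after:  {indexed.Distinct().Count()}");

// Hypothetical helper, for illustration only.
static class RouteTerms
{
    // Same expression as in the index: keep everything up to and
    // including the last '/', dropping the unique id that follows it.
    public static string StripUniqueSuffix(string route) =>
        route.Substring(0, route.LastIndexOf('/') + 1);
}
```

Because the trailing slash is kept, a startsWith() on a prefix such as notifications/traffic/new-york-city/ still matches the indexed value; it just has far fewer terms to walk.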

We looked into whether there is something that we can do with Lucene to avoid this allocation issue, but it is endemic to the way the API works. The longer term plan is to fix that completely, of course. We are making great strides there already.

In short, if you are doing startsWith() queries or similar, pay attention to the number of unique terms that you have to go through. A simple optimization on the index like the one above can pay off handsomely.

