Making code fasterThe interview question

Interview questions are always tough to design. On the one hand, you need to create something that will not be trivial to do, and on the other hand, you have a pretty much hard time limit to a reasonable solution. For example, while implementing a linked list is something that I would expect anyone to be able to do in an interview, implementing a binary tree (including the balancing), is probably not going to be feasible.

Interview tasks (that candidate can do at home) are somewhat easier, because you don’t have the same time constraints, but at the same time, if you ask for something that takes a week to write, candidates will skip the question and the position entirely. Another issue here is that if you ask a candidate to send a binary tree as a interview task, they are going to google –> copy & paste –> send, and you learn absolutely nothing*.

* Oh, sometimes you learn quite a lot, if a candidate cannot do that, they are pretty much disqualified themselves, but we could do that more easily with Fizz Buzz, after all.

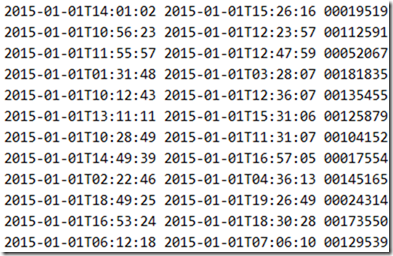

So I came up with the following question, we have the following file (the full data set is 276 MB), that contains the entry / exit log to a parking lot.

The first value is the entry time, the second is the exit time and the third is the car id.

Details about this file: This is UTF8 text file with space separated values using Windows line ending.

What we need to do is to find out how much time a car spent in the lot based on this file. I started out by writing the following code:

You can find the full file here. The only additional stuff is that we measure just how much this cost us.

And this code process the 276MB file in 30 seconds, using a peak working set of 850 MB and allocating a total of 7.6 GB of memory.

I’m pretty sure that we can do better. And that is the task we give to candidates.

This has the nice advantage that this is a pretty small and compact problem, but to improve upon it you actually need to understand what is going on under the covers.

I’ll discuss possible optimizations for this next week.

More posts in "Making code faster" series:

- (24 Nov 2016) Micro optimizations and parallel work

- (23 Nov 2016) Specialization make it faster still

- (22 Nov 2016) That pesky dictionary

- (21 Nov 2016) Streamlining the output

- (18 Nov 2016) Pulling out the profiler

- (17 Nov 2016) I like my performance unsafely

- (16 Nov 2016) Going down the I/O chute

- (15 Nov 2016) Starting from scratch

- (14 Nov 2016) The obvious costs

- (11 Nov 2016) The interview question

Comments

Few points:

*instead of using linq, use a dictionary to hold the sum per id, if (and only if) the dictionary is growing too large you could use files calc the sum per id

// Ryan

Ryan,

What do you mean by the last one about dictionary and files?

Am i missing something?

ReadLine (record);

carDurations[record.carId] += record.timeTo - record.timeFrom;

repeat until EOF;

saveToFile(carDurations);

Of course we can optimise data reading, allocating strings for one line, but i don't get where are 7gb :)

Rytis,

That isn't 7 GB memory used, that is 7 GB memory allocated

Using lambdas for properties should have no performance impact, since the IL compiles to the same as if the property was declared without a lambda.

Read a line at a time and keep a Dictionary<int, int> /<car id, time in ms>/ and update dictionary accordingly, fast and easy!

You shouldn't read whole file at a time.

Read it line by line :)

Hmm, the markdown didn't work?

Obviously the way to go is to use a dictionary, but if there are a lot of unique ids, you could write intermediate sums to a few files, merge the files until you have one file.

But that in it self is probably a whole different domain problem altogether ...

// Ryan

@ Jason, the properties are accessed multiple times, each time you will split a string resulting in small strings.

Each time allocating memory that you are going to throw away ...

// Ryan

replacing File.ReadAllLines with File.ReadLines

String.Split with substring

and using AsParallel and Dictionary<long,long> for results reduces

memory usage from 832MB to 0.39MB and

time from 45 seconds to 12 seconds

How much time is given to candidate to do it on interview ?

it really depends what are your limits, if you want to do small memory usage, you would have to read the file twice. if you want performance assuming you don't have memory limit for the dictionary - just hold them by dictionary id and add the sums.

Read the file in binary mode, records are fixed size, so you can read each line into the same byte array. Manually parse each record from the byte array taking advantage of ASCII table: digit = byte - 48 (digits in UTF8 are encoded like in ASCII). Use a dictionary<int, long> for aggregating:

First thing I would try and measure is change to File.ReadLines.

Second to change all Record props to get; private set; and set the props in the Record ctor.

Where can I find the 276MB file?

@Ryan Ahh, right - I wasn't talking about the implementation of the property itself (or how they are used), simply that using a lambda to declare it results in the same IL as not using one, in response to this comment:

Initially, when I read that comment, it seemed that lambda syntax was being singled out as an issue. I appreciate now that you meant the usage of the properties, potentially in multiple calls, could be a perf problem that needs looking into.

I'm not sure which order .NET uses to parse dates with

DateTime.Parse, but as the date format seems to be fixed, it should be faster to useDateTime.ParseExactinstead of having .NET guess the correct format.Apart from the fact that the timestamps and car id should probably not be parsed every time the property is accessed, it is most likely even worse that

_line.Split(' ')is run on every getter. :)Since the content size is fixed, use a stream reader and read only the text needed instead of relying on ReadAllLines or ReadLines. That will remove a lot of string allocations from read lines and splits.

My gut says the DateTime.Parse calls are likely to cost more CPU time than string allocations.

While we are doing a lot of allocations, I would by now expect I/O to be dominating in the profiler.

Regarding the use of a fixed-size buffer: the file is specified to be UTF-8, not necessarily ASCII. It's probably reasonable to assume that it contains no non-ASCII characters, but I'd still want clarification on that point before assuming I could handle it as such!

Tomasz,

Typically, 10 - 60 minutes if done on site, and a few days if at home.

The quality of the solution has to match, though

Jesús,

That is a possibility, and explicitly why the file is designed in such a manner.

However, you'll probably be surprised to learn that at this point, dictionary accesses are 50%+ of your time

Jesus,

https://drive.google.com/file/d/0B-GYDT6Flp-saDFrdXQzQVRFZlE/view?usp=sharing

Is it possible to get the 276 MB file in question?

Arseny,

See the comment,

https://drive.google.com/file/d/0B-GYDT6Flp-saDFrdXQzQVRFZlE/view?usp=sharing

About 45MB max memory usage and 20 seconds or so under the profiler...

So I had some spare time tonight and since I've been optimizing code all week I thought I give it a try.

This is how the given code performed on my laptop (Dell M6700 i7-3940XM), I took the best of three runs:

Code from blog: "Took: 38.051 ms and allocated 9.661.701 kb with peak working set of 1.167.448 kb"

Code from the 7-Zip File: "Took: 33.425 ms and allocated 4.587.464 kb with peak working set of 1.260.084 kb"

So here is my code. I went a little crazy. It's neither very readable nor maintainable, but you did not ask for pretty, you asked for fast and memory efficient:

Here are the numbers: "Took: 1.300 ms and allocated 65.706 kb with peak working set of 33.080 kb"

So compared to the blog version it runs about 29,5 times faster, allocates 0.7% of the memory and has 3% of the peak working set.

Maybe I should give some explanations:

Nice question! Here's my solution: https://gist.github.com/zeux/999654a6ce2a32e219a2ed0f1f0fa935 (sorry, I don't work in .NET any more...)

It clocks at around 185 ms (=> 1.5 GB/s). It should be possible to make it faster but unfortunately VS 15 Preview 5 doesn't seem to have a functioning profiler and I've already spent an hour on this so I'll leave it as is :)

mktime() is surprisingly slow. Based on Michael's results .NET's DateTime is actually much better - if I remove the same-day optimization my code takes 2.8 sec.

Record validation is compiled out since it's in an assertion; compiling it in reduces the time to 320 ms. Validation code could be optimized as well.

P.S. mmap is actually pretty useless here - it saves about 20 ms based on my measurements. Not really worth it but I left it there because why not.

Adam,

At a guess, you'll find that quite a lot of your performance is going on string alloc & parsing.

But a lot of that is also going to the dictionary

Michael,

You can probably reduce the time further by removing the new DateTime calls, they are expensive.

And you can write the date time without generating a string each time

Arseny,

Nice, I wasn't away that you could do overloading based on the size of the array, but this make the code a pleasure to read.

Note that in .NET, a lot of the performance goes to actually writing this out as string, though. So I'll probably need to optimize that as well

Arseny,

However, note that your code has a major difference in behavior.

You are assuming that the number of ids is small. And in fact, the higher id value in the sample file is 203,220.

That means that your array is under 1 MB in size, and you save all the dictionary lookups.

However, if you consider the size of the id, it has 8 chars, so it can be a max of 99,999,999, in which case you'll be using 400 MB of RAM or so.

Doable, but quite expensive.

Looks interesting but I cannot find the time to try.

Will it make a lot of difference if you check if the date is identical on byte level (which it probably is a lot of the times in a parking garage), and then compare the time manually on byte level?

This will probably save a lot of DateTime allocation

Arseny,

Oh, and you output the time in seconds, rather than the TimeSpan format (that actually matter a lot for perf) :-)

Paul,

That is an interesting idea, I was able to take that and optimize things even further, thanks

This thread became very interesting! , I'm sure there are even more optimization such as write c++ external dll and include asm functions so all parsing and dictionary costs can be even more efficient and with less cpu instructions.

In this case , many of the costs are going to the dictionary's lookups so maybe a smarter dictionary implementation can same some more

Very interesting!

Another assumption, we can get the file size and compute how many lines there are, so do the smart dictionary allocations before and with all the required size, this might give another boost

Well the first question should be what the performance goals are, in terms of wall time, memory usage and cpu load. But given the question we could assume that we want the best possible performance in all these aspects balanced with priority to wall time first, memory usage second and cpu load last.

We have fixed size, fixed format records as input: two timestamps with seconds resolution and an 8 digit id with the alphabet '0'..'9'.

Requested output is an unordered sequential access collection with entries consisting of:

I would:

Dictionary<uint, uint>.It is an interesting enough question. I may give this a go.

Oren,

Re max id size - yeah. Standard hash map is significantly slower and I wasn't up for writing a custom one (which would still be slower of course, but not as much). 400 MB is not too much, and if you preallocate 400 MB the speed doesn't tank dramatically (goes to ~250 ms or so). Obviously you will need 400 MB if the car ids are dense and occupy the entire range - in fact, my solution in this case will occupy far less memory than any other, because for any dictionary you'll pay a very hefty price - OTOH 4b for key, 4b for value, 8b for "next" pointer assuming chained hashing, 8b for the pointer in the bucket array, 8b for the allocation overhead (not on all systems) - so 4x to 6x what I have. Even with a dense hash table it'll still be 2x-3x what I have. So my solution is better for the worst case, which is the case you should be optimizing for :)

Re: timespan output format - fair point. I was pretty surprised that the output portion does not seem to take any meaningful time in my case - I'm used to printf being slow. As I said I don't have a working profiler which limits my ability to analyze this code :( anyway, I think this is fixable without changing the resulting time that much because printf basically has to do roughly the same operations when formatting the number that you'd need to do here, it just does it in decimal as opposed to base 60. I'll update the code to match the output format and we'll see what the impact is.

Ah, wait, I am not counting the time it takes to output :( with printf it's actually 230 ms. Damn it.

Updated gist (https://gist.github.com/zeux/999654a6ce2a32e219a2ed0f1f0fa935) with new output code and new validation code - 175 ms now with both output and validation enabled (validation doesn't check that your time format is precisely right, but it does check all separators and digits for sanity).

@Arseny, this is quite impressive! I think you optimized almost everything

Ok, picking off where I left, I optimized my solution a bit further.

12. Loose the struct and go for a Dictionary<int,long>, pretty obvious

13. Loose the BinaryReader and BinaryWriter and read and write directly to the filestream

14. If StartDate and EndDate are the same, compute the Ticks directly using

We are under a second now: Took: 896 ms and allocated 60.807 kb with peak working set of 29.616 kB Not bad. But let's not stop yet.

15. When writing the output, compute the timespan string ourselves (ripped straight from TimeSpanFormatter)

16. Use DateTime just when the dates have different year (just about 900 cases).

Now the allocated memory is down to 21.914 kB.

It's time for the gloves to come off. Dictionary<,> has to go.

17. We are storing the values in a LinkList<long[1024][1024]>. Array indices are computed with >> and &. Long[1024] are allocated on the go as needed. Output is written straight from the data (no Enumerator)

18. Some finishing touches: Unroll the Loop when parsing the id, remove some ProcessInput function and move it's contents to the inner read loop

And here are the numbers: Took: 442 ms and allocated 1.681 kb with peak working set of 13.528 kB (net462, .net core is a little faster)

I'm now 86x faster than the original solution, allocating just 0,02% of memory and have a peak working set that just 1% of the original.

And still i'm using no unsafe code. I know I could loose the LinkedList<> for the given data set, but right now the solution is correct, fast and memory efficient for any well-formed Input file, even if it is 10x the size, so removing it would be kind of cheating.

Thing's tried that did not pay off:

PS: Bringing C++ to a C# fight. So unfair! ;-)

Source code: http://pastebin.com/jHvYNDBc

This was a quite interesting assignment. I did not reach the optimization levels some of the others in this post but I got down to 3 993 kb with peak working set of 13 320 kb. For anyone interested my code is at gist.

Some people seem to roll their own date calculations but that feels quite risky to me. There are so many things to consider such as leap years, daylight savings time (depending on country), leap seconds and even events such as Russia getting rid of daylight savings time.

Anders, I thought about DST and I believe there are two issues with considering it:

Michael, nice! This actually matches my past experience pretty well - my rule of thumb from the days of working with .NET was that tuned .NET code is roughly 3x slower than tuned C++ code for compute-bound problems, assuming .NET code does not use unsafe. I was thinking of a two-level array structure, guess I'll go ahead and do that as well.

https://gist.github.com/zeux/90a49b85c8cfdf04ffa5489ec8916271 - 135 ms. Two-level array on its own makes no difference for the time, but allows a nicer structure for multi-threading; this is using 4 threads on a 2-core HT system (same time as 2 threads, really).

@Ayende. The dictionary can be replaced by a 800Mb array of longs, it would be faster but it would require more memory:

I think an array of int's would be enough

@Anders: You are absolutely right regarding the date calculations. It's really problematic, that's why didn't include it in my first version. However with the given data set there is no difference between using DateTime or calculating it directly, so I mainly was interested in how fast I could make it. At least I adjusted for leap years and used DateTime for cases when the start is in the old year and the end is in the new year to account for leap seconds. I would not do such an optimization in production code unless I know it's safe. Let's just pretend it's UTC and they forgot or decided to leave out the Z in the ISO 8061 format. ;-)

@Arseny: That's a really great solution! I might try your multi-threading approach if I find the time. I tried multi-threading before, but your approach is much better. I guess I was thinking to complicated.

There is one more thing I'd like to share. I tried using unsafe code to do a poor man's variant of SIMD. I pinned the memory for my read buffer and then obtained a ulong* pointer to do some calculations. First I convert the ASCII digits to numbers (-'0'), then I convert the Id to an Int32. Please note that the constants for substractions and the Int32 conversion AND masks are adjusted for the little-endianness of Intel CPUs. This operation makes the code run a little faster, but it's allocates more memory (because of pinned memory?) and peak working set is higher.

Oren,

How can I obtain a copy of that 267Mb data file?

With some unsafe code (see the approach I outlined earlier) and a significant amount of validity checking. It looks like performance might not be too far from a c++ implementation (@Arseny) and not much faster than optimized c# code that does not require "unsafe" constructs (@Michael). Numbers for the code in this gist:

So the Reference version is 155 times slower, allocates 2450 times more memory and has a 4.29 times higher peak working set.

Using the "FlexArray" (basically a single level trie, or "jagged array" using the first 12 bits for its first level) performs significantly better than a regular pre-allocated fixed size array

uint[100000000]or aDictionary<uint,uint>.I would expect that a proper cache conscious trie implementation would improve this even more, because (I think) we are seeing primarily processor cache effects making "FlexArray" faster.

I also expect performance improvements from @Michael's "bit hacking magic" above. It is similar to the approach I used in an earlier "Ayende Challenge" w.r.t. Etag parsing (see https://ayende.com/blog/169796/excerpts-from-the-ravendb-performance-team-report-etags-and-evil-code-part-i).

@alex: Great solution! I ran it on my computer and it was a little bit faster with about 225ms. But I took a page out of your book and improved my solution and ended up with 157ms. If you want to know how, check the next blog entry (The obvious costs).

on a consumer grade SSD, only read this file from disk will take about 0.5 sec (550 MB/sec / 276MB). so you need to have a RAM disk to make it faster.

you should be able to parallelize it if you have a read block of 64K (or so) and process it in one thread (record size is 50 bytes) . So you have one thread which is reading and storing what read into kind of a queue ( disruptor pattern, non blocking ) and others reading 64K blocks from that queue and processing it in own thread data storage - again non blocking. Once threads are done, you combine results from each thread into final result. this assumes you can read as fast as you can process data.

DNF,

Because we are running this multiple times, the typical scenario is that the file is in the FS cache, so it isn't subject to the I/O limitation

@Ayende: I knew that. The point was that IO may impact tests/processing and depending how it impacts you will need to change strategy for parsing. The other point that it should be possible to have parallel algorithm which is faster than single threaded if you can avoid blocking/sharing data between threads, since others were getting opposite results. With the cache or RAM disk you can probably open file for read in each thread, and seek to position (increment position by read block size 64K or more, seek, read, process, in each thread) which can the only thing shared across threads. Obviously you don't need more threads than you have CPUs.

The cost of performing a reasonable amount of validation of the input (input values that are in range of their respective domains and formats that match expectation), for the solution I posted above is around 50 ms, i.e. around 20% of total run time. I would still consider that well worth it.

Do you allow candidates to browse the internet when working on this question?

Dan,

Yes, of course

I took this log file as an exercise in my ongoing learning of F#. The language doesn't matter, but I was shocked by this huge perf gain just due to the overall algorithm. The problem was to return the time span for a given id/date. Here's how the exercise progressed:

FizzBuzz can get pretty interesting sometimes!

http://joelgrus.com/2016/05/23/fizz-buzz-in-tensorflow/

Comment preview