The Guts n’ Glory of Database Internals: Managing records

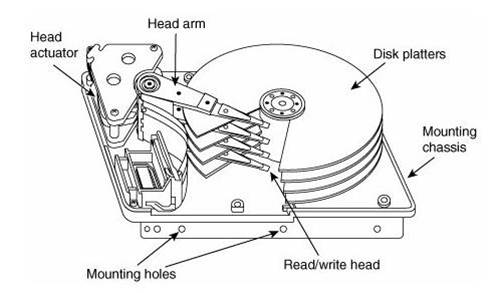

One of the things that a database has to deal with is the fact that the actual physical data is stored on something like this:

Well, that isn’t quite true today (we have HDD, SSD, NVM, etc.), but all of them have roughly the same external interface. You have a series of blocks of various sizes (typically from 512 bytes to 4KB) that you can fill with some data, and that is pretty much it. That doesn’t sound too bad, right?

Well, consider the case of a simple text file, and the need to add a line at the beginning of the file. You can’t ask the hard disk or the file system to do that. You have to rewrite the entire file, first writing the new line, then writing the previous content of the file. This is obvious when you look at the basic file system API.

You can open the file, seek to a particular location, and write there. There is no way for you to say fappend() in a particular position, or something like that. Note that I’m using the C API definition because this limitation is pretty much shared by any API in any language that you care to name. Oh, you can fake it, which is pretty much what all IDEs do, but that breaks down when you start working on large files.
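The same limitation is easy to see from Python, whose file objects follow the same open/seek/write model. This is only a minimal sketch: the helper names `overwrite_at_start` and `prepend_line` and the `.tmp` suffix are made up for illustration, and reading the whole file into memory is exactly the kind of faking that stops working on large files.

```python
import os


def overwrite_at_start(path, line):
    """Seek to offset 0 and write. This overwrites bytes; it does not insert."""
    with open(path, "r+b") as f:
        f.seek(0)
        f.write(line)


def prepend_line(path, line):
    """Prepend by rewriting: the new line first, then the previous content."""
    with open(path, "rb") as f:
        old_content = f.read()  # whole file in memory; only sensible for small files
    tmp_path = path + ".tmp"  # hypothetical temp file name
    with open(tmp_path, "wb") as f:
        f.write(line)
        f.write(old_content)
    os.replace(tmp_path, path)  # swap the rewritten copy into place
```

Writing at offset 0 clobbers whatever was there, so the only way to get the new line in front is to write everything again.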

Databases routinely deal with large files. A small database today is at least a GB or so, and typical database sizes are usually in the hundreds of GB or multiple TB. If we need to update an entry, we can’t just start moving things around. That is why a pretty large part of managing a database is about how you structure the data on disk so you can change it without having to shuffle everything else around.

There are typically two ways to do that: append only and pages.

We’ll start with the append only model, because it is conceptually simpler. Instead of worrying about allocation of data on disk, and how to manage that, we’ll simply always write at the end of the file. That way, we can let the file system worry about where to find the new space for our data. This has the advantage of being pretty simple, and it is also a great help when you are worrying about consistency and durability (a subject for another post). The obvious problem is that you end up with a lot of wasted space, especially if you have a commonly modified record. Each time that it changes, you write it to the end of the file, but the older version still exists. This requires you to do occasional merges to get rid of the older data.
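To make the trade-off concrete, here is a minimal sketch of an append only store in Python. Everything in it is an assumption made for illustration: the class name `AppendOnlyStore`, the record layout (two little-endian 32-bit lengths followed by key and value), the in-memory index, and the `.merge` temp file. It ignores durability and concurrency entirely.

```python
import os
import struct

HEADER = struct.Struct("<II")  # assumed layout: key length, value length


def encode(key, value):
    return HEADER.pack(len(key), len(value)) + key + value


class AppendOnlyStore:
    def __init__(self, path):
        self.path = path
        self.index = {}  # key -> (offset of value, length of value), latest version only
        self.file = open(path, "a+b")  # append mode: every write lands at the end

    def put(self, key, value):
        self.file.seek(0, os.SEEK_END)
        offset = self.file.tell()
        self.file.write(encode(key, value))
        self.file.flush()
        self.index[key] = (offset + HEADER.size + len(key), len(value))

    def get(self, key):
        offset, length = self.index[key]
        self.file.seek(offset)
        return self.file.read(length)

    def size(self):
        self.file.seek(0, os.SEEK_END)
        return self.file.tell()

    def merge(self):
        """Copy only the latest version of each record into a fresh file."""
        tmp_path = self.path + ".merge"  # hypothetical temp file name
        new_index = {}
        with open(tmp_path, "wb") as out:
            for key in self.index:
                value = self.get(key)
                offset = out.tell()
                out.write(encode(key, value))
                new_index[key] = (offset + HEADER.size + len(key), len(value))
        self.file.close()
        os.replace(tmp_path, self.path)
        self.file = open(self.path, "a+b")
        self.index = new_index
```

Updating the same key twice leaves both versions in the file until `merge()` runs, which is the wasted space described above.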

It should be noted that the choice of the algorithm for storing the data and the choice of how to actually manage the data are typically pretty closely tied together. Most LSM solutions will use some form of append only mode (indeed, that is practically a requirement), while most databases using trees have a tendency to use paging (more on that later).

A good example of why mixing and matching the two doesn’t really work is the problem of the CouchDB file format. CouchDB uses append only mode for crash recovery, but it uses B+Trees as its data structure. That leads to some interesting problems. To start with, every change must modify the full height of the tree. As the database grows, the amount of data that you need to write on every change also grows. Another issue that database authors need to think about is the hot path for the file system cache. Modern databases will try to lean on what the operating system can give them, and by always writing to the end of the file, you keep filling the file system cache with new data, so a lot of the old data (which might be useful) ends up being kicked out, eventually forcing you to do more (expensive) I/O.
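A rough back-of-the-envelope sketch of why the write cost grows with the database: if every change rewrites one node per level, the bytes appended per change scale with the height of the tree. The fanout of 100 and the 4KB node size below are assumed numbers picked for illustration, not CouchDB’s actual parameters.

```python
def tree_height(entries, fanout):
    """Smallest number of levels whose capacity (fanout ** levels) holds all entries."""
    height = 1
    capacity = fanout
    while capacity < entries:
        capacity *= fanout
        height += 1
    return height


def bytes_appended_per_change(entries, fanout=100, node_size=4096):
    """Assumes one rewritten node per level, from the leaf up to the root."""
    return tree_height(entries, fanout) * node_size
```

Under these assumptions, a tree with a million entries appends three nodes per change, and a tree with a billion entries appends five.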

The other alternative is that instead of writing at the end of the file, we’ll divide the file into evenly sized pieces. Those are typically called pages, and they are 4KB to 4MB in size on most databases. Note that the actual size matters quite a lot in how you work with them. But for now, we’ll deal strictly with 4KB pages, because that is easiest. In this mode, whenever we need to modify some piece of data, we can modify its page as a single operation (seek to that page position in the file, overwrite the whole page). Since B+Trees are naturally paged, this makes it a very easy way to work with them, except when you have a record whose size exceeds the size of the page.
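The "seek to that page position, overwrite the whole page" operation can be sketched in a few lines of Python. The function names and the zero padding are assumptions for illustration; a real database would have its own page layout.

```python
PAGE_SIZE = 4096  # 4KB pages, as in the text


def write_page(f, page_number, data):
    """Overwrite one whole page in place, padding the data to the page size."""
    if len(data) > PAGE_SIZE:
        raise ValueError("data does not fit in a single page")
    f.seek(page_number * PAGE_SIZE)
    f.write(data.ljust(PAGE_SIZE, b"\x00"))


def read_page(f, page_number):
    f.seek(page_number * PAGE_SIZE)
    return f.read(PAGE_SIZE)
```

Because every page lives at `page_number * PAGE_SIZE`, updating page 1 touches neither page 0 nor page 2, and the file does not grow.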

In this case, you allocate as many pages as you need to fit the record, and typically call this overflow. In relational databases, those would be TEXT and BLOB columns, for example. They aren’t stored directly inside the B+Tree; instead, they are stored on the side. That additional hop is also why they tend to be more expensive than an embedded value inside the record.
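"As many pages as you need" is just a ceiling division. A small sketch, assuming 4KB pages and hypothetical helper names:

```python
PAGE_SIZE = 4096


def overflow_page_count(record_size):
    """Number of whole pages needed to hold the record (ceiling division)."""
    return (record_size + PAGE_SIZE - 1) // PAGE_SIZE


def split_into_overflow_pages(record):
    """Cut the record into page-sized chunks; the last one may be partial."""
    return [record[i:i + PAGE_SIZE] for i in range(0, len(record), PAGE_SIZE)]
```

A 10,000 byte value needs three pages under these assumptions, with the last page only partly used.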

The good thing about pages is that assuming that you are using the same pages all the time, you can teach the file system cache where your hot spots are, and it will try to keep them in memory as much as possible. The problem with managing pages yourself is that you also need to manage free space. If someone went ahead and deleted a bunch of records and freed a whole bunch of pages, you now need to be aware that those are free, and when you next need to allocate a page, you want to allocate it from the free list, not from the end of the file.
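The free space bookkeeping can be sketched as a free list that is consulted before growing the file. `PageAllocator` is a hypothetical name, and the list lives only in memory here; a real database would also have to persist it.

```python
class PageAllocator:
    def __init__(self):
        self.next_page = 0    # first page number past the current end of the file
        self.free_pages = []  # pages released by deletes, available for reuse

    def allocate(self):
        """Prefer a freed page; only grow the file when none is available."""
        if self.free_pages:
            return self.free_pages.pop()
        page = self.next_page
        self.next_page += 1
        return page

    def free(self, page_number):
        self.free_pages.append(page_number)
```

After allocating pages 0, 1 and 2 and freeing page 1, the next allocation returns page 1 rather than page 3.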

Using pages also allows you to do some interesting tricks. For example, you can pre-allocate the file, which gives the file system a better opportunity to hand you a contiguous segment of space on the disk, so you reduce file system fragmentation. Note that so far I’m ignoring concurrency and durability entirely; those are topics for another post.
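A hedged sketch of pre-allocation in Python: `os.posix_fallocate` asks the file system to reserve the space up front where it is available, and the sketch falls back to `truncate`, which only extends the file’s size and may leave it sparse. The `preallocate` helper is a made-up name, and whether the space ends up contiguous is up to the file system.

```python
import os

PAGE_SIZE = 4096


def preallocate(path, page_count):
    """Grow the file to page_count pages, reserving the space when the OS allows it."""
    size = page_count * PAGE_SIZE
    with open(path, "a+b") as f:
        if os.fstat(f.fileno()).st_size >= size:
            return  # already big enough; never shrink
        try:
            os.posix_fallocate(f.fileno(), 0, size)
        except (AttributeError, OSError):
            # Not available on this platform or file system: just extend the size.
            f.truncate(size)
```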

But there is something that we need to talk about with pages, and that is what happens when you have large pages. If a typical page is 4KB in size, then just writing the whole page out whenever any value in it changes is reasonable. But if your page size is much larger (for example, 2MB), that becomes quite expensive, and not something that you want to do. Instead, you typically write the changes to the page to a journal file (another topic that I’ll cover in the future) and keep the changes to that page in memory. Whenever a certain threshold is reached, you rearrange the whole page with all of the required changes (amortizing the cost of the write across many changes) and then write it out once.
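The amortization idea can be sketched with plain in-memory stand-ins. In this toy, `journal` is a list standing in for the journal file, `on_disk` is a dict standing in for the large page as last written, and the threshold of four changes is an arbitrary assumption; none of these names come from a real database.

```python
class BufferedLargePage:
    def __init__(self, flush_threshold=4):
        self.flush_threshold = flush_threshold
        self.on_disk = {}   # stand-in for the page as last written to the data file
        self.pending = {}   # changes kept in memory since the last page write
        self.journal = []   # stand-in for the journal file
        self.changes_since_write = 0
        self.page_writes = 0

    def put(self, key, value):
        self.journal.append((key, value))  # small write to the journal
        self.pending[key] = value
        self.changes_since_write += 1
        if self.changes_since_write >= self.flush_threshold:
            self.write_page()

    def get(self, key):
        if key in self.pending:
            return self.pending[key]
        return self.on_disk.get(key)

    def write_page(self):
        """Apply all pending changes and write the large page out once."""
        self.on_disk.update(self.pending)
        self.pending.clear()
        self.changes_since_write = 0
        self.page_writes += 1
```

With a threshold of four, eight changes cost eight small journal entries but only two full page writes.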

As you can imagine, this sort of thing has a major impact on the design of the database. If your page sizes are small (4KB to 32KB, let us say), you will handle things very differently than if your page size is much larger.

More posts in "The Guts n’ Glory of Database Internals" series:

- (08 Aug 2016) Early lock release

- (05 Aug 2016) Merging transactions

- (03 Aug 2016) Log shipping and point in time recovery

- (02 Aug 2016) What goes inside the transaction journal

- (18 Jul 2016) What the disk can do for you

- (15 Jul 2016) The curse of old age…

- (14 Jul 2016) Backup, restore and the environment…

- (11 Jul 2016) The communication protocol

- (08 Jul 2016) The enemy of thy database is…

- (07 Jul 2016) Writing to a data file

- (06 Jul 2016) Getting durable, faster

- (01 Jul 2016) Durability in the real world

- (30 Jun 2016) Understanding durability with hard disks

- (29 Jun 2016) Managing concurrency

- (28 Jun 2016) Managing records

- (16 Jun 2016) Seeing the forest for the trees

- (14 Jun 2016) B+Tree

- (09 Jun 2016) The LSM option

- (08 Jun 2016) Searching information and file format

- (07 Jun 2016) Persisting information
