I talk a lot about journals, and how to make sure they are durable and safe. What I haven’t talked about is what you put in the journal. Carsten reminded me of this lack, and I want to thank him for that.

The first thing we need to understand is what the journal is supposed to do. The whole point of the journal is to make sure that if you had some sort of error (including power loss), you can use the information in the journal to recover up to the same point you were at before the failure. More formally, in order to maintain ACID, we need to ensure that any transactions we committed (and that clients therefore rely on) are there, and any ongoing transactions have been rolled back properly. The journal is the key to that, but how?

A lot of this depends on the database engine itself, and that has an impact on the file format you use in the journal. The way you work with the journal also has a lot to do with the internals of the database.

Let us take a relational database as an example. We want to insert a new row into a table. The size of the row is usually pretty small, a few dozen to a few hundred bytes. So it’s cool, but how does it play into the journal? Well, before the database can actually modify the data, it needs to write its intent to do so in the journal, and flush it. Only then can it proceed, knowing that there is a stable record in the case of an error.

One way of doing this is to write the following record into the journal file:

{ position: 1234, value: [binary] }

After it is flushed, we can go ahead and modify the file itself. If we crash, during recovery we’ll see that we have this entry in the journal, and we’ll write it again to the same place in the data file. Either this will be a no-op, because it was previously applied, or we’ll re-apply the change. At any rate, as an external observer, the fact that the journal entry exists ensures that on recovery, the value will be the same. Hence, the fact the entry was written and flushed to the journal ensures that the transaction change was committed.
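To make the idea concrete, here is a minimal sketch of such a journal in Python. The on-disk layout (an 8-byte position, a 4-byte length, then the value) and the names `append_entry` and `recover` are my own assumptions for illustration, not the format of any particular engine. Note that replaying the same entry twice leaves the data file in the same state, which is what makes recovery safe to repeat.

```python
import os
import struct

# Hypothetical record layout: position (8 bytes), value length (4 bytes), value.
HEADER = struct.Struct("<QI")

def append_entry(journal, position, value):
    """Append one {position, value} record and force it to stable storage."""
    journal.write(HEADER.pack(position, len(value)) + value)
    journal.flush()              # push Python's buffer to the OS
    os.fsync(journal.fileno())   # ask the OS to push it to the device

def recover(journal_path, data_path):
    """Replay every complete journal entry into the data file."""
    with open(journal_path, "rb") as journal, open(data_path, "r+b") as data:
        while True:
            header = journal.read(HEADER.size)
            if len(header) < HEADER.size:
                break  # end of journal, or a torn header
            position, length = HEADER.unpack(header)
            value = journal.read(length)
            if len(value) < length:
                break  # torn entry: it was never fully written
            data.seek(position)
            data.write(value)  # a no-op if the change was already applied
        data.flush()
        os.fsync(data.fileno())
```

The write path would call `append_entry` first and only then touch the data file; `recover` runs on startup.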

So this is the high level overview. The devil is in the details. The simplest journal file just records binary data and position, so we can write it out to the data file on recovery. But while that works, it limits the database engine in what it can do. If you have multiple concurrent transactions, all of which are generating entries to the log, you don’t want to hold their entries back and write them to the journal only on transaction commit. You want each entry to be appended to the log as soon as it is generated, and you want the flushes to be shared between transactions (so you can amortize the cost).

But this means that you are writing uncommitted changes to the journal file. So each of your entries also carries a transaction number, and your transaction commit is another entry in the journal, one that orders all operations from that particular transaction to be applied. At this point, you usually have different actors inside the database engine. You have an actor that is responsible for writing to the journal file, and it typically merges multiple entries from multiple concurrent transactions to allow the maximum level of performance. Consider the implications of the following journal actor implementation:

All pending entries to the journal are actually written using buffered I/O, so they are very fast. However, there are a few things to notice here.

- We keep a record of the hash (CRC, MD5, etc) of all the writes.

- When we get a transaction commit entry, we write it to the disk, and then write the hash for all the entries that were written since the last transaction commit.

- We flush to disk, ensuring that we are actually persisting on spinning rust.

- We let the caller know the transaction has been safely journaled to disk.
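The steps above could be sketched roughly like this. It is a single-threaded illustration under my own assumptions: the record header (kind, transaction id, payload length), the choice of CRC32, and the `JournalWriter` name are all hypothetical, and a real actor would sit behind a queue fed by concurrent transactions.

```python
import os
import struct
import zlib

ENTRY, COMMIT = 1, 2
HEADER = struct.Struct("<BQI")  # kind, transaction id, payload length

class JournalWriter:
    def __init__(self, path):
        self._file = open(path, "ab")  # buffered I/O by default
        self._crc = 0                  # running CRC since the last commit

    def _write(self, kind, tx_id, payload=b""):
        record = HEADER.pack(kind, tx_id, len(payload)) + payload
        self._file.write(record)
        self._crc = zlib.crc32(record, self._crc)

    def append(self, tx_id, payload):
        """Buffered write; the caller does not wait for the disk."""
        self._write(ENTRY, tx_id, payload)

    def commit(self, tx_id):
        """Write the commit record and the CRC, then flush to stable storage."""
        self._write(COMMIT, tx_id)  # the commit record is covered by the CRC too
        self._file.write(struct.pack("<I", self._crc))
        self._crc = 0
        self._file.flush()
        os.fsync(self._file.fileno())
        # Only after fsync returns may the caller be told the commit is durable.

    def close(self):
        self._file.close()
```

A transaction calls `append` for each change and `commit` once at the end; only `commit` blocks on the disk.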

This design has a lot of tiny implications that are not immediately obvious. For example, whenever we send a journal entry to be written, we don’t need to wait for it; we can proceed immediately to the next change in the transaction. And because the writes are buffered, even the I/O on the journal actor is very cheap.

However, when we send a commit transaction entry, we do a few more things. Writing the hash of all the entries that were written since the last transaction commit allows us to verify that all of those entries were written successfully. If there was a power failure while we were writing the transaction, the hash will be missing or will not match, so we would realize that this transaction isn’t committed, and that this is where the log ends.
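Here is a sketch of what the recovery side of that check might look like. It assumes a hypothetical layout in which each group of records ends with a commit record followed by a 4-byte CRC32 of the whole group; the `record` and `scan` names are mine. The scan stops at the first group that is torn or fails the check, and treats that point as the end of the log.

```python
import struct
import zlib

ENTRY, COMMIT = 1, 2
HEADER = struct.Struct("<BQI")  # kind, transaction id, payload length
CRC = struct.Struct("<I")

def record(kind, tx_id, payload=b""):
    """Encode one journal record (hypothetical layout)."""
    return HEADER.pack(kind, tx_id, len(payload)) + payload

def scan(journal):
    """Return (committed, valid_end): tx id -> payloads, and where the log ends."""
    pending, committed = {}, {}
    offset = valid_end = 0
    crc = 0
    while offset + HEADER.size <= len(journal):
        kind, tx_id, length = HEADER.unpack_from(journal, offset)
        end = offset + HEADER.size + length
        if kind not in (ENTRY, COMMIT) or end > len(journal):
            break  # garbage or a torn record
        crc = zlib.crc32(journal[offset:end], crc)
        if kind == ENTRY:
            pending.setdefault(tx_id, []).append(journal[offset + HEADER.size:end])
            offset = end
            continue
        # A commit record must be followed by a CRC that matches the group.
        if end + CRC.size > len(journal):
            break
        (stored,) = CRC.unpack_from(journal, end)
        if stored != crc:
            break  # the group was not fully written: the log ends here
        committed[tx_id] = pending.pop(tx_id, [])
        offset = valid_end = end + CRC.size
        crc = 0
    return committed, valid_end
```

Entries of transactions that never reach a verified commit stay in `pending` and are simply never applied.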

And while I don’t need to wait for the journal entry to be flushed to disk, I do have to wait for the transaction itself to be written to disk before I let the user know that it has been committed. This type of structure also has the advantage of transactions sharing the load. If we have a long transaction that does something, its journal entries will be flushed to disk along with the other committing transactions. When we need to commit the long transaction, the amount of work we’ll actually have to do is a lot less.

The structure of the journal entry can range from the simple mode of recording what binary data to modify where, to entries that actually have meaning for the database engine (SET [column id]=[value]). The latter is more complex, but it gives you a more compact representation for journal entries, which means that you can pack more of them into the same space, and I/O is at a premium.

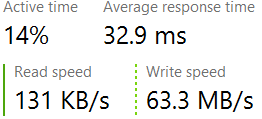

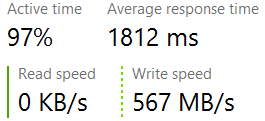

For the technical reasons mentioned in previous posts (alignment, performance, etc.), we typically write only on page boundaries (in multiples of 4KB), so we need to consider whether it is actually worth our time to write things now, or wait a bit and see if there is additional data coming that can complete the target boundary.
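The arithmetic behind that trade-off is simple enough to show. This is just an illustration with a 4KB page; the helper names are my own.

```python
PAGE_SIZE = 4096

def padded_size(length, page_size=PAGE_SIZE):
    """Round length up to the next multiple of page_size."""
    return -(-length // page_size) * page_size

def pad_to_page(buffer, page_size=PAGE_SIZE):
    """Zero-pad a buffer so it ends exactly on a page boundary."""
    return buffer + b"\0" * (padded_size(len(buffer), page_size) - len(buffer))

def wasted_bytes(length, page_size=PAGE_SIZE):
    """How many padding bytes a write of this length would carry."""
    return padded_size(length, page_size) - length
```

A 300-byte entry written on its own drags 3,796 bytes of padding along with it, which is why waiting briefly for more entries to fill the page can pay off.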

What I described so far is one particular approach to handling that. It is used by databases such as PostgreSQL, Cassandra, etc. But not every database works in quite this manner. In the case of something like LevelDB, the journal is written one transaction at a time, under the lock, and in multiples of 32KB. The values in the journal are operations to perform (add / delete from the database), and that is pretty much it. It can afford to do that because all of its data files are immutable. PostgreSQL uses 8KB multiples and stores in the journal only the data it needs to re-apply the transaction. This is probably because of the MVCC structure it has.

SQL Server, on the other hand, stores both redo and undo information. It makes sense if you look at the journal actor above. Whenever we write an entry to the journal file, we don’t yet know if the transaction will be committed. So we store the information we need to redo it (if it was committed) and to undo it (if it wasn’t). Recovery is a bit more complex, and you can read about the algorithm used most frequently (at least as the base): ARIES.
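As a rough illustration of why both kinds of information are needed, here is a heavily simplified redo/undo recovery over an in-memory log. This is not ARIES and not how SQL Server lays out its log; the `Update` and `Commit` record types are hypothetical, and the sketch assumes the before and after images have the same length.

```python
from dataclasses import dataclass

@dataclass
class Update:
    tx_id: int
    position: int
    before: bytes  # undo information
    after: bytes   # redo information

@dataclass
class Commit:
    tx_id: int

def recover(log, data: bytearray):
    """Bring data to a state with all committed changes and no uncommitted ones."""
    committed = {r.tx_id for r in log if isinstance(r, Commit)}
    # Redo pass: repeat every logged update in log order.
    for r in log:
        if isinstance(r, Update):
            data[r.position:r.position + len(r.after)] = r.after
    # Undo pass: roll back uncommitted transactions, newest change first.
    for r in reversed(log):
        if isinstance(r, Update) and r.tx_id not in committed:
            data[r.position:r.position + len(r.before)] = r.before
    return data
```

The redo pass covers committed changes that never reached the data file; the undo pass covers uncommitted changes that did.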

With Voron, however, we chose to go another way. Each transaction keeps track of all the pages it modified. When the transaction commits, we take all of those pages and compress them (to reduce the amount of I/O we have to do), compute a hash of the compressed data, and write it on a 4KB boundary. Recovery then becomes trivial. Run through the journal file, and verify the hash of one transaction at a time. Once the hash checks out, we know that the transaction is valid, so we can decompress the dirty pages and write them directly to the data file. Instead of handling an operations log, we handle full pages. This means we don’t actually care what modifications ran on that page, we just care about its state.
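The shape of this approach can be sketched in a few lines of Python. To be clear, this is not Voron’s actual journal format: the header layout, the use of zlib and SHA-256, and the function names are all assumptions I picked to keep the example self-contained.

```python
import hashlib
import struct
import zlib

PAGE_SIZE = 4096
# Hypothetical header: tx id, page count, compressed length, SHA-256 digest.
HEADER = struct.Struct("<QII32s")

def encode_transaction(tx_id, dirty_pages):
    """dirty_pages maps page number -> PAGE_SIZE bytes of final page state."""
    raw = b"".join(struct.pack("<Q", n) + dirty_pages[n] for n in sorted(dirty_pages))
    compressed = zlib.compress(raw)
    digest = hashlib.sha256(compressed).digest()
    block = HEADER.pack(tx_id, len(dirty_pages), len(compressed), digest) + compressed
    return block + b"\0" * (-len(block) % PAGE_SIZE)  # pad to a 4KB boundary

def recover(journal, data: bytearray):
    """Apply every valid transaction; return the id of the last one applied.

    Assumes data is already large enough to hold every page in the journal.
    """
    offset, last_tx = 0, None
    while offset + HEADER.size <= len(journal):
        tx_id, count, length, digest = HEADER.unpack_from(journal, offset)
        start = offset + HEADER.size
        compressed = journal[start:start + length]
        if (count == 0 or len(compressed) < length
                or hashlib.sha256(compressed).digest() != digest):
            break  # torn or corrupt transaction: the journal ends here
        raw = zlib.decompress(compressed)
        for i in range(count):
            base = i * (8 + PAGE_SIZE)
            (page_number,) = struct.unpack_from("<Q", raw, base)
            page = raw[base + 8:base + 8 + PAGE_SIZE]
            data[page_number * PAGE_SIZE:(page_number + 1) * PAGE_SIZE] = page
        last_tx = tx_id
        block_len = HEADER.size + length
        offset += block_len + (-block_len % PAGE_SIZE)
    return last_tx
```

Notice that nothing in `recover` knows what was changed inside a page. It only copies whole pages back, which is the layering benefit described next.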

It makes adding new facilities to Voron very simple. Because there is very strict layering throughout, changing the way we do something has no impact on the journaling / ACID layer.

![image[3] image[3]](https://ayende.com/blog/Images/Open-Live-Writer/The-Guts-n-Glory-of-Database-Internals-L_256E/image[3]_thumb.png)

With all the focus on writing to disk and ensuring the high speed of the database, I almost missed a very important aspect of databases, how do we actually communicate with the outside world.
