This isn’t actually about a production system, per se. This is about a performance regression we noticed in a scenario for 4.0. We recently started doing structured performance testing as part of our continuous integration system.

This isn’t actually about a production system, per se. This is about a performance regression we noticed in a scenario for 4.0. We recently started doing structured performance testing as part of our continuous integration system.

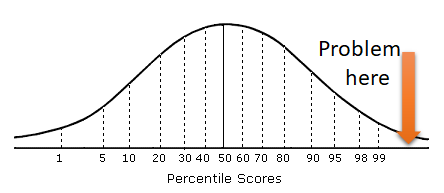

There goes the sad trombone sound, because what that told us is that at some point, we had a major performance regression. To give you that in numbers, we used to be around 50,000 writes per second (transactional, fully ACID, hit the disk, data is safe) and we dropped to around 35,000 writes per second. Fluctuations in performance tests are always annoying, but that big a drop couldn’t be explained by just random load pattern causing some variance in the results.

This was consistent and major difference between the previous record. Now, to explain things, the last firm numbers we had about this performance test was from Dec 2016. Part of the release process for 4.0 calls for a structured and repeatable performance testing, and this is exactly the kind of thing that it is meant to catch. So I’m happy we caught it, not so happy that we had to go through about 7 months of changes to figure out what exactly drove our performance down.

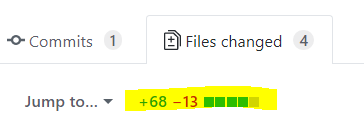

The really annoying thing is that we put a lot of effort into improving performance, and we couldn’t figure out what went wrong there. Luckily, we could scan the history and track our performance over time. I’m extremely over simplifying here, of course. In practice this meant running bisect on all the changes in the past 7 – 8 months and running benchmarks on each point. And performance is usually not something as cut and dried as a pass / fail test.

Eventually we found the commit, and it was small, self contained and had a huge impact on our performance. The code in question?

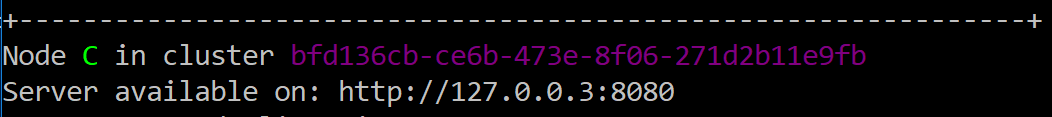

ThreadPool.SetMinThreads(MinThreads, MinThreads);

This change was part of ironically, a performance run that focused on increasing the responsiveness of the server under a specific load. More specifically, it handled the case where under a harsh load we would run into thread starvation. The problem with this load is that this specific load can only ever happen in our test suite. At some point we had run up to 80 parallel tests, each of which might spawn multiple servers. The problem is that we run them all a single process, so we run into a lot of sharing between the different tests.

That was actually quite intentional, because it allowed us to see how the code behaves in a bad environment. It exposed a lot of subtle issues, but eventually it got very tiring to have tests fail because there just weren’t enough threads to run them fast enough. So we set this value to a high enough number to not have to worry about this.

The problem is that we set it in the server, not in the tests. And that led to the performance issue. But the question is why would this setting cause such a big drop? Well, the actual difference in performance went from 20 μs to taking 28.5 μs, which isn’t that much when you think about it. The reason why this happened is a bit complex, and require understanding several different aspect of the scenario under test.

In this particular performance scenario, we are testing what happens when a lot of clients are writing to the database all at once. Basically, this is a 100% small writes scenario. Out aim here is to get sustained throughput and latency throughout the scenario. Given that we ensure that every write to RavenDB actually hit the disk and is transactional, that means that every single request needs to physically hit the disk. If we tried doing that for each individual request, that would lead to about 100 requests a second on a good disk, if that.

In order to avoid the cost of having to go to the physical disk, we do a lot of batching. Basically, we read the request from the network, do some basic processing and then send it to a queue where it will hit the disk along with all the other concurrent requests. We make heavy use of async to allow us to consume as little system resources as possible. This means that at any given point, we have a lot of requests that are in flight, waiting for the disk to acknowledge the write. This is pretty much the perfect system for high throughput server, because we don’t have to hold up a thread.

So why would increasing the amount of minimum threads hurt us so much?

To answer that we need to understand what it is exactly that SetMinThreads control. The .NET thread pool is a lazy one, even if you told it that the minimum number of threads is 1000, it will do nothing with this value. Instead, it will just accept work and distribute it among its threads. So far, so good. The problem starts when we have more work at hand then threads to process it. At this point, the thread pool need to make a decision, it can either wait for one of the existing threads to become available of it can create a new thread to handle the new load.

This is when SetMinThreads come into play. Up until the point the thread pool created enough threads to exceed the min count, whenever there is more work in the pool then there are threads, a new thread will be created. If the minimum number of threads has been reached, the thread pool will wait a bit before adding new threads. This allow the existing threads time to finish whatever they were doing and take work from the thread pool. The idea is that given a fairly constant workload, the thread pool will eventually adjust to the right amount of threads to ensure that there isn’t any work that is sitting in the queue for too long.

With the SetMinThreads change, however, we increased the minimum number of threads significantly, and that caused the thread pool to create a lot more threads then it would otherwise create. Because our workload is perfect for the kind of tasks that the thread pool is meant to handle (short, do something and schedule more work then yield) it is actually very rare that the thread pool would need to create an additional thread, since a thread will be available soon enough.

This is not an accident, we very careful set out to build it in this fashion, of course.

The problem is that with the high number of minimum threads, it was very easy to get the thread pool into a situation where it had more work then threads. Even though we would have a free thread in just a moment, the thread pool would create a new thread at this point (because we told it to do so).

That lead to a lot of threads being created, and that lead to a lot of context switches and thread contentions as the threads are fighting over processing the incoming requests. In effect, that would take a significant amount of the actual compute time that we have for processing the request. By removing the SetMinThreads calls, we reduced the number of threads, and were able to get much higher performance.