RavenDB 5.3 New Features: Elasticsearch ETL

RavenDB tries to be a good neighbor in your systems. RavenDB is typically used in polyglot solutions, and we are often brought in to existing ecosystems. One of the things we do to make RavenDB easier to adopt is to provide a full suite of built-in tools for pushing data to other destinations.

For example, you can define an ETL process that will push document changes from RavenDB (potentially transforming & filtering them) to a relational database, another RavenDB instance, a data lake / OLAP system and much more.

In RavenDB 5.3 we have added Elasticsearch as an ETL target for RavenDB. If you are familiar with RavenDB ETL processes, the behavior is pretty much what you would expect. You select which collections you want to push to Elasticsearch, you provide a script that filters and transforms the data, and then you are done. From that point on, it is RavenDB’s responsibility to keep the Elasticsearch target up to date with any changes that are happening inside of RavenDB.

I’ll discuss the exact details on how to make it work shortly, but first I want to talk a bit about the usage scenario for this. Elasticsearch, just like RavenDB, is using Lucene behind the scenes to implement indexes. Unlike RavenDB, however, Elasticsearch is all about… well, searching. In that context, there is a pretty big overlap between RavenDB and Elasticsearch. In fact, one of the primary reasons we see people selecting RavenDB is that they no longer need to maintain multiple environments (one to store the data and an Elasticsearch cluster for searching on it). RavenDB is able to handle both needs in a single, highly integrated and performant package.

The most common scenario for Elasticsearch ETL is when you already have an existing investment in Elasticsearch. RavenDB will integrate naturally into your environment, without requiring any significant changes. That lets you start running queries, Kibana dashboards, etc. on your RavenDB documents.

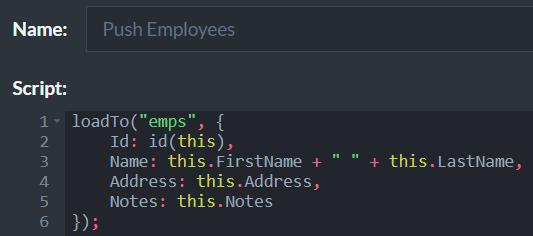

Here is the transformation script:

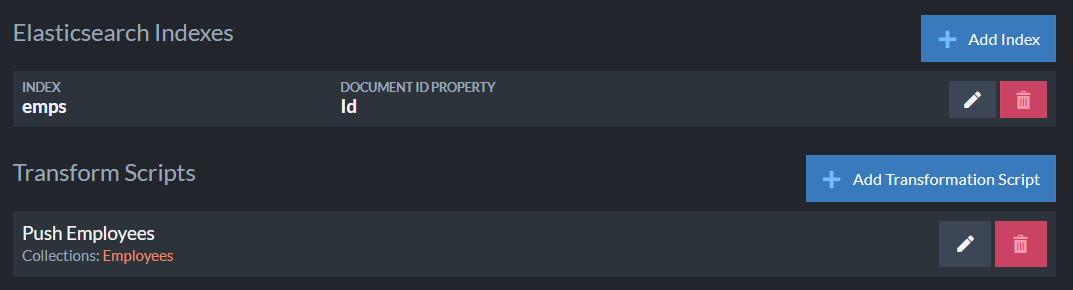

And the configuration telling RavenDB where to go:

You can push multiple collections to multiple Elasticsearch indexes. It is important to note that you must include the RavenDB document Id as a property in the script and also set it in the destination index configuration. If the Elasticsearch index doesn't already exist, RavenDB will create it for you on the fly.
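To make the filter, transform and "include the document Id" requirement concrete, here is a minimal sketch in Python. It is only a model of what a transformation does conceptually, not RavenDB's actual script syntax (the real script is written in the RavenDB Studio). The property name `DocumentId`, the function `transform_order` and the sample order fields are all assumptions made up for illustration.

```python
from typing import Optional

# Hypothetical name of the property that carries the RavenDB document Id.
# The same name would be set as the Id property in the destination index configuration.
DOCUMENT_ID_PROPERTY = "DocumentId"


def transform_order(doc_id: str, doc: dict) -> Optional[dict]:
    """Model of an ETL transformation: filter, reshape, and attach the document Id.

    Returns None when the document is filtered out and should not be sent.
    """
    # Filter: skip orders that have no lines (an illustrative rule only).
    lines = doc.get("Lines", [])
    if not lines:
        return None

    # Transform: send only the fields we want to search on,
    # and always include the source document Id as a property.
    return {
        DOCUMENT_ID_PROPERTY: doc_id,
        "Company": doc.get("Company"),
        "OrderedAt": doc.get("OrderedAt"),
        "TotalPrice": sum(l["PricePerUnit"] * l["Quantity"] for l in lines),
    }


order = {
    "Company": "companies/1-A",
    "OrderedAt": "2021-11-17T00:00:00Z",
    "Lines": [
        {"PricePerUnit": 10.0, "Quantity": 2},
        {"PricePerUnit": 5.0, "Quantity": 1},
    ],
}
result = transform_order("orders/1-A", order)
empty = transform_order("orders/2-A", {"Company": "companies/2-A", "Lines": []})
```

The point of carrying the Id as a regular property is that the target side needs a stable key to tie each indexed entry back to the RavenDB document it came from, so later updates and deletes can replace or remove the right entries.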

This is… pretty much it. The actual feature is fully fledged, of course. You get monitoring and tracking, it will run in high availability mode and will be assigned an owner node in the cluster, etc. If there is a failure on the Elasticsearch side, there is no data loss. RavenDB will wait for the target to come back up and then push all the data that was changed in the meantime. The ETL process is an online process, which means that you can expect to see changes in RavenDB reflected in the Elasticsearch index within a few milliseconds of the transaction commit.
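The "no data loss on failure" behavior can be pictured with a small toy model. This is a simplified sketch, assuming an ordered change log and a "last sent" position; it is not a description of RavenDB's internals, and every name in it (`FakeTarget`, `EtlModel`, `run_once`) is hypothetical.

```python
class TargetDown(Exception):
    """Raised by the fake target while it is unavailable."""


class FakeTarget:
    """Stand-in for an Elasticsearch index: a dict keyed by document Id."""

    def __init__(self):
        self.available = True
        self.indexed = {}

    def push(self, doc_id, body):
        if not self.available:
            raise TargetDown()
        self.indexed[doc_id] = body


class EtlModel:
    """Toy ETL process that remembers how far it got and resumes from there."""

    def __init__(self, target):
        self.target = target
        self.changes = []   # ordered log of (doc_id, body) changes
        self.last_sent = 0  # number of changes already delivered

    def record_change(self, doc_id, body):
        self.changes.append((doc_id, body))

    def run_once(self):
        """Deliver pending changes in order; on failure, keep the position and stop."""
        while self.last_sent < len(self.changes):
            doc_id, body = self.changes[self.last_sent]
            try:
                self.target.push(doc_id, body)
            except TargetDown:
                return False  # nothing is dropped, we retry from the same position
            self.last_sent += 1
        return True


target = FakeTarget()
etl = EtlModel(target)

etl.record_change("orders/1-A", {"Total": 10})
etl.run_once()

# The target goes down while documents keep changing.
target.available = False
etl.record_change("orders/2-A", {"Total": 20})
etl.record_change("orders/1-A", {"Total": 15})
ok_while_down = etl.run_once()

# The target comes back and the process catches up.
target.available = True
ok_after_recovery = etl.run_once()
```

Because the position only advances after a successful push, an outage just delays delivery: once the target is reachable again, everything that changed in the meantime is sent.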

This feature is available in the Professional and Enterprise editions of RavenDB and will be included in the RavenDB 5.3 release, scheduled for mid-November.

More posts in "RavenDB 5.3 New Features" series:

- (26 Nov 2021) Revisions includes

- (25 Nov 2021) JSON Patch

- (24 Nov 2021) Studio & Query improvements

- (23 Nov 2021) TCP Compression

- (18 Nov 2021) Experimental PostgreSQL wire protocol

- (17 Nov 2021) Elasticsearch ETL

- (15 Nov 2021) Incremental time series & implementing lambda based accounting

- (12 Nov 2021) Incremental time series

- (11 Nov 2021) Concurrent Subscriptions & Serial operations

- (10 Nov 2021) Concurrent subscriptions
