Performance optimizations in production

Date range queries can be quite expensive for RavenDB. Consider the following query:

    from index 'Users/Search'
    where search(DisplayName, "Oren") and CreationDate between "2008-10-13T07:18:01.623" and "2018-10-13T07:18:01.623"
    include timings()

The root issue is that this is a compound query: we use full text search on the left, but then need to match its results against the date range on the right. The way Lucene works, we have to compute the set of all the documents that match the date range. If there are a lot of documents in that range, we have to scan through a lot of values.
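To make that cost concrete, here is a toy Python model of evaluating such a compound query. It is not Lucene or RavenDB code: the data layout and the names `range_matches` and `compound_query` are hypothetical, made up purely for illustration. It shows that the date clause does work proportional to the number of documents in the range, even when the text clause matches only a handful of them.

```python
from bisect import bisect_left, bisect_right
from datetime import datetime, timedelta


def range_matches(sorted_dates, low, high):
    """Collect the doc ids whose date falls in [low, high].

    sorted_dates is a list of (iso_date, doc_id) pairs sorted by date,
    a simplified stand-in for the ordered values of a date field.
    Returns the matching ids and how many entries were visited.
    """
    start = bisect_left(sorted_dates, (low, -1))
    end = bisect_right(sorted_dates, (high, float("inf")))
    matches = {doc_id for _, doc_id in sorted_dates[start:end]}
    return matches, end - start


def compound_query(text_matches, sorted_dates, low, high):
    """Intersect the full text matches with the full date range set."""
    date_matches, visited = range_matches(sorted_dates, low, high)
    return text_matches & date_matches, visited


# Hypothetical data: 5,000 users, one created per day starting 2008-01-01.
base = datetime(2008, 1, 1)
sorted_dates = [((base + timedelta(days=i)).isoformat(), i) for i in range(5000)]
text_matches = {10, 2500, 4999}  # pretend these matched search(DisplayName, "Oren")

result, visited = compound_query(
    text_matches,
    sorted_dates,
    "2008-10-13T07:18:01.623",
    "2018-10-13T07:18:01.623",
)
print(result, visited)
```

Only one of the three text matches survives the intersection, yet the range clause still had to walk through thousands of entries to build its side of the query.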

We spent a lot of time and effort optimizing date queries in RavenDB. Such issues also heavily influenced the design of our next-gen indexing capabilities (but more on that when it matures enough to discuss).

One of the primary design principles of RavenDB is that it learns from previous usage, and we realized that date ranges in queries are likely to repeat often. So we take advantage of that. The details are a bit complex and require that you understand how Lucene stores its data in immutable segments. We are able to analyze queries on repeating date ranges and remember them, so next time we use the same type of date range, we’ll already have the set of matching documents ready.
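The general shape of that idea can be sketched in a few lines of Python. This is an illustrative model only, not RavenDB's actual implementation: `Segment`, `RangeCache`, and the choice of cache key are all assumptions. Because a segment never changes after it is written, a result computed for a given segment and range stays valid for as long as that segment exists, so repeating the same range only requires scanning segments that were added since.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """An immutable batch of indexed documents (hypothetical model)."""
    segment_id: int
    dates: tuple  # (iso_date, doc_id) pairs


class RangeCache:
    """Remembers, per segment, which documents matched a given date range."""

    def __init__(self):
        self._cache = {}  # (segment_id, low, high) -> frozenset of doc ids
        self.scans = 0    # number of segments we actually had to scan

    def query(self, segments, low, high):
        result = set()
        for seg in segments:
            key = (seg.segment_id, low, high)
            if key not in self._cache:
                # First time this range is seen for this segment: scan it once.
                self.scans += 1
                self._cache[key] = frozenset(
                    doc_id for date, doc_id in seg.dates if low <= date <= high
                )
            result |= self._cache[key]
        return result


segments = [
    Segment(1, (("2009-01-01", 1), ("2019-01-01", 2))),
    Segment(2, (("2010-06-15", 3),)),
]
cache = RangeCache()
first = cache.query(segments, "2008-10-13", "2018-10-13")

# New documents arrive in a new segment; the old segments are untouched.
segments.append(Segment(3, (("2012-03-04", 4),)))
second = cache.query(segments, "2008-10-13", "2018-10-13")
print(first, second, cache.scans)
```

In this sketch the second query scans only the newly added segment and reuses the remembered results for the other two. A real implementation would also need to evict entries when segments are merged away, which is omitted here.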

That feature was deployed to address a specific customer scenario, where they run a lot of wide date range queries, and it had a big impact there.

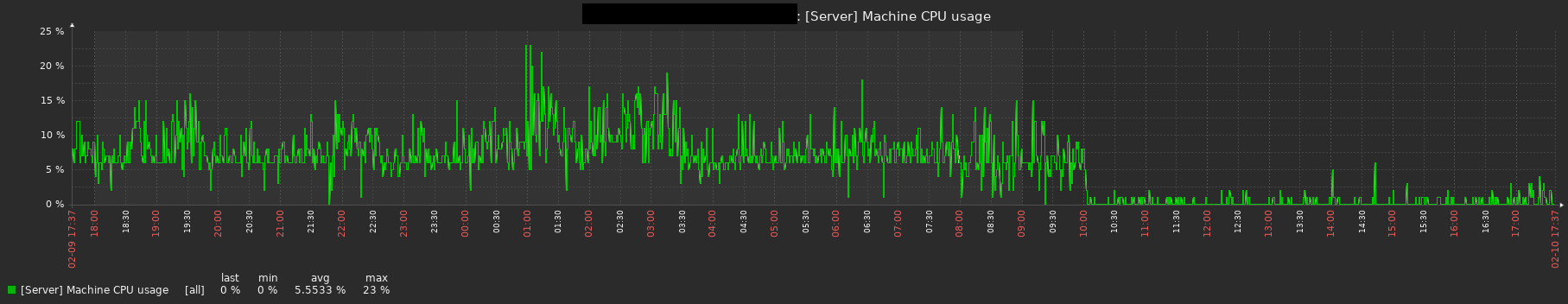

Last week we ran into some funny metrics for a completely different customer, with a very different scenario. You can probably tell at what point they moved to the updated version of RavenDB and were able to take advantage of this feature:

The really nice thing about this, from my perspective, is that none of us even considered the impact that feature would have for this scenario. They upgraded to the latest version to get access to the new features, and this is just sitting in the background, pushing their CPU utilization to near zero.

That’s the kind of news that I love to get.
