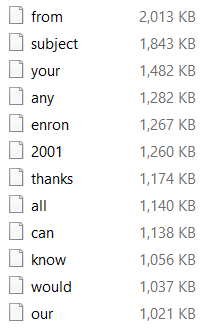

In the previous posts in this series, I explored how to generate a full text index on top of the Enron data set. In particular, we looked at (rudimentary) analysis of text in the first post and at posting lists (the list of matching documents for a specific term) in the second one. It occurred to me that we need a much better understanding of the requirements that we have from posting lists in general, so let’s look at them, shall we?

- Add to the list (increasing numbers only).

- Iterate the list (all, or from starting point).

- Reduce disk space and memory utilization as much as possible.

The fact that I want to be able to add to the list is interesting. The typical use case in full text search is to generate the full blown posting list from scratch every time. The typical model is to use an LSM (Log-Structured Merge) approach and take advantage of the fact that we are dealing with sorted lists to merge them cheaply.
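To illustrate why sorted lists merge cheaply, here is a minimal sketch in Python. The function name is hypothetical and this is not how any particular search engine implements it; it only shows that two sorted posting lists can be combined in a single linear pass, without re-sorting.

```python
import heapq

def merge_posting_lists(*lists):
    """Merge already-sorted lists of document ids into one sorted list,
    dropping ids that appear in more than one input."""
    merged = []
    for doc_id in heapq.merge(*lists):
        # heapq.merge yields values in ascending order, so duplicates are adjacent.
        if not merged or merged[-1] != doc_id:
            merged.append(doc_id)
    return merged

older = [1, 4, 9, 12]
newer = [4, 10, 12, 15]
print(merge_posting_lists(older, newer))
```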

Iterating the list is something you’ll frequently do, to find all the matches or to merge two separate lists. Here is the kind of API that I initially had in mind:

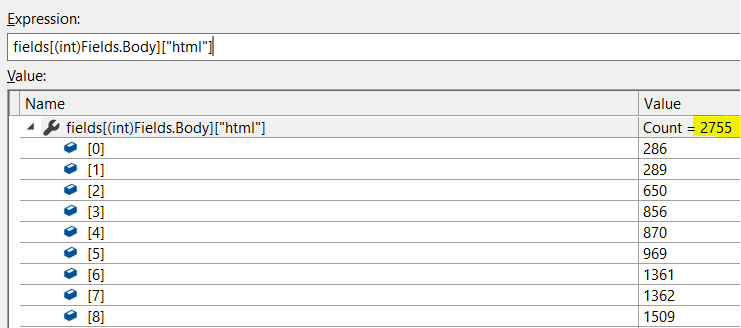
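For readers who cannot see the image, a rough Python approximation of the two operations from the requirements list (add, iterate from a starting point) might look like the following. The class and method names are my own assumptions, and the backing store is a plain in-memory list rather than anything compressed or persistent.

```python
from bisect import bisect_left

class PostingList:
    """Naive in-memory stand-in for a posting list (hypothetical names)."""

    def __init__(self):
        self._ids = []

    def add(self, doc_id):
        # Only increasing numbers are accepted, per the requirements.
        if self._ids and doc_id <= self._ids[-1]:
            raise ValueError("document ids must be strictly increasing")
        self._ids.append(doc_id)

    def iterate(self, start=0):
        # Yield every id greater than or equal to `start`, in order.
        pos = bisect_left(self._ids, start)
        for i in range(pos, len(self._ids)):
            yield self._ids[i]
```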

As you can see, there isn’t much there, which is intentional. I initially thought about using this as the baseline for a couple of test implementations using StreamVByte, FastPFor, and Gorilla compression. The problem is that we need to balance the compression ratio against the cost of actually going over the list. Given that my test cases showed a big benefit for using Roaring Bitmaps, I decided to look at them first and see what I can get out of them.

Roaring Bitmaps are a way to (efficiently) store a set of bits, and they are very widely used in the industry. The default implementation is also entirely unsuitable for my purposes, mostly because it makes use of managed memory, and a hard requirement that I have placed on this series is that I want to be able to use persistent memory. In other words, I want to be able to write the data out, then be able to do everything on top of memory mapped data, without having to parse it.

Roaring Bitmaps work in the following manner. Each 64K range of integers gets its own segment, which takes at most 8KB. Given that I’m using Voron as a persistence library, these numbers don’t work for my needs. Voron uses an 8KB page size, so we’ll drop these numbers by half. Each range will cover 32K integers and take a maximum of 4KB of disk space. This allows me to store it much more efficiently inside of Voron. Each segment, in turn, has a type. The types can be either:

- Array – if the number of set bits in the segment is less than 2048, the data will use a simple sorted array implementation, with each value taking 2 bytes.

- Bitmap – if the number of set bits in the segment is between 2048 and 30,720, the segment will use a total of 4096 bytes and be a standard bitmap.

- Reversed array – if the number of set bits in the segment is higher than 30,720, we’ll store in the segment the unset bits as a sorted array.
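The thresholds above can be checked with a small Python sketch. The function and constant names are assumptions of mine; the numbers come straight from the list. Note that every type fits within the 4KB budget: an array holds fewer than 2048 values at 2 bytes each, a bitmap is 32K bits, and a reversed array holds fewer than 2048 unset positions.

```python
SEGMENT_RANGE = 32 * 1024   # integers covered by one segment
ARRAY_LIMIT = 2048          # below this, use a sorted array
REVERSED_LIMIT = 30 * 1024  # 30,720; above this, store the unset bits

def segment_type(set_bits):
    """Pick the segment representation from the number of set bits."""
    if set_bits < ARRAY_LIMIT:
        return "array"
    if set_bits <= REVERSED_LIMIT:
        return "bitmap"
    return "reversed_array"

def encoded_size(set_bits):
    """Bytes needed to store a segment with the given number of set bits."""
    kind = segment_type(set_bits)
    if kind == "array":
        return set_bits * 2                  # 2 bytes per set bit
    if kind == "bitmap":
        return SEGMENT_RANGE // 8            # fixed 4096 bytes
    return (SEGMENT_RANGE - set_bits) * 2    # 2 bytes per unset bit
```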

This gives us quite a few advantages:

- It is straightforward to build this incrementally (remember that we only ever add items at the end).

- It is quite efficient in terms of space, for both sparse and dense segments.

- It is cheap (computationally) to work with and process.

- It is very simple to use from a memory mapped file without having to parse / create managed objects.
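As a sketch of that last point, the following Python functions answer membership queries directly against a raw byte buffer, with no intermediate objects built from the segment. The layout is assumed for illustration only (little-endian 16-bit values in array segments, least significant bit first in bitmap segments); the actual on-disk format is not specified in this post. The `buf` argument can be a `bytes`, `memoryview`, or `mmap` object.

```python
import struct

def bitmap_contains(buf, segment_offset, bit):
    """Test a single bit in a bitmap segment stored at segment_offset."""
    byte = buf[segment_offset + (bit >> 3)]
    return (byte >> (bit & 7)) & 1 == 1

def array_contains(buf, segment_offset, count, value):
    """Binary search a sorted array segment of `count` 16-bit values."""
    lo, hi = 0, count
    while lo < hi:
        mid = (lo + hi) // 2
        (current,) = struct.unpack_from("<H", buf, segment_offset + mid * 2)
        if current < value:
            lo = mid + 1
        else:
            hi = mid
    if lo == count:
        return False
    (current,) = struct.unpack_from("<H", buf, segment_offset + lo * 2)
    return current == value
```

For a reversed array segment, the same `array_contains` search would apply, with the result negated, since the array holds the unset bits.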

The one thing that we still need to take into account is how to deal with the segment metadata. How do we know which segment belongs to which range? In order to handle that, we’ll define a small metadata struct for each segment.

The idea is that we need to store two important pieces of information: the start location (which is always going to be a multiple of 32K) and the number of set bits (which has a maximum of 32K). Therefore, we can pack both of them into a single int64. The struct is merely there for convenience.
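One possible packing, sketched in Python, is shown below. The bit layout is my assumption, not necessarily the one used by the struct described above: because the start is a multiple of 32K, only `start >> 15` needs to be kept, and the count (0 to 32,768 inclusive) needs 16 bits.

```python
SEGMENT_SHIFT = 15                   # 2**15 == 32K integers per segment
COUNT_BITS = 16                      # enough for counts 0..32768 inclusive
COUNT_MASK = (1 << COUNT_BITS) - 1

def pack_metadata(start, set_bits):
    """Pack a segment's start and set-bit count into one integer."""
    if start < 0 or start % (1 << SEGMENT_SHIFT) != 0:
        raise ValueError("start must be a non-negative multiple of 32K")
    if not 0 <= set_bits <= (1 << SEGMENT_SHIFT):
        raise ValueError("set_bits must be between 0 and 32K")
    return ((start >> SEGMENT_SHIFT) << COUNT_BITS) | set_bits

def unpack_metadata(packed):
    """Recover (start, set_bits) from a packed value."""
    start = (packed >> COUNT_BITS) << SEGMENT_SHIFT
    return start, packed & COUNT_MASK
```

With the start in the high bits, packed values sort in the same order as the ranges they describe, which keeps a sorted metadata array searchable.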

In other words, in addition to the segments with the actual set bits, we are also going to have an array of all the segments’ metadata. In practice, we’ll also need another value here, the actual location of the segment’s data, but that is merely another int64 per segment, so that is still very reasonable.
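To show how such a metadata array might be used, here is a hedged Python sketch that finds the data location for the segment covering a given value. It assumes two parallel, hypothetical arrays: `starts` (sorted segment start values) and `locations` (where each segment's data lives). Ranges with no set bits simply have no entry.

```python
from bisect import bisect_right

SEGMENT_SIZE = 32 * 1024

def find_segment(starts, locations, value):
    """Return the data location of the segment covering `value`,
    or None if no segment exists for that range."""
    target = value - (value % SEGMENT_SIZE)  # start of the covering range
    i = bisect_right(starts, target) - 1
    if i >= 0 and starts[i] == target:
        return locations[i]
    return None

starts = [0, 65536, 98304]
locations = [8192, 16384, 24576]
print(find_segment(starts, locations, 70000))
```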

As this is currently a mere exercise, I’m going to skip actually building the implementation, but it seems like it should be a fairly straightforward approach. I might do another post about how to actually implement this feature on Voron, because it is interesting. But I think that this is already long enough.

We still have another aspect to consider. So far, we talked only about the posting lists, but we also need to discuss the terms. But that is a topic for the next post in the series.