The Red Alert Sleeper Agent Bug

Today I started out like most recent days, I was working on improving performance and running benchmarks. I made a small change in how we handle file allocation and mapping inside Voron. This is the kind of change that should have no observable effects. And indeed, except for making us run faster, everything worked.

Except that later today I merged some stuff from a colleague and suddenly I started getting invalid memory accesses. After quickly blaming my colleague for the issue, we eventually figured out that it was my change that caused it.

Unfortunately, the problem was a lot more serious than it immediately appeared. It wasn’t just that I needed to fix my code, what was happening there was that I made a certain situation (a new file mapping, and thus, exercising the cleanup routine) a lot more frequent. Which is all well and good, except that this is something that will happen routinely in Voron anyway, it just means that this is now much more likely.

And the problem is that we couldn’t for the life of us figure out why it was failing. Oh, we quickly figured out that we are accessing memory that has been unmapped, but how? The Voron codebase is really careful about such things, and we have quite enough production usage to know that this doesn’t really happen. But again, it might just be a sleeper…

The real problem wasn’t actually with the access violation, that was pretty obvious and would have come to our immediate attention. The problem was that the error looked like the Page Translation Table had a race condition. In this case, because we are much more eager about cleanup, it was obvious that we are accessing old information, but without this to trigger our attention, the fear was that we are actually racy, and that the Page Translation Table will serve incorrect information.

That means that Voron would violate its consistency rule, we’ll effectively be returning random garbage to the user and… Bad Things to Follow.

At various points during the day, we had five different people working on it in three continents, because it is that kind of bug. And we couldn’t figure it out. We traced the code that did that every which way. It is old code, that has been worked upon repeatedly, and it has been stable for years. And none of us could figure out what was going on. Theories ranging from cosmic rays to the wrath of Murphy has been thrown out.

Something was very rotten in Voron. Okay, after all of this exposition, let me explain what was going on. We started out with a Page Table that looked like this:

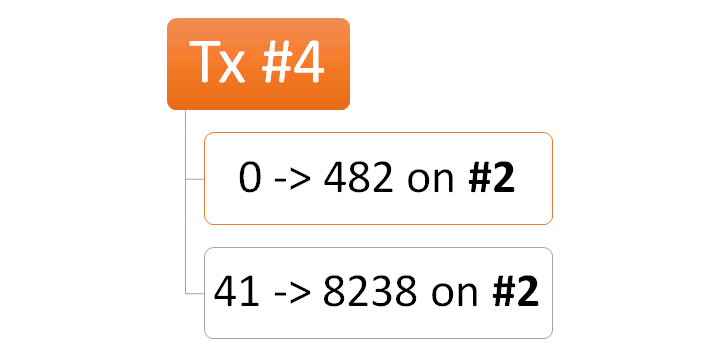

So the first number is the page number, and the second is the page number inside the scratch file (#1 or #2, above).

Basically, this means that when Transaction #3 looks asks for Page #0, it will actually get Page #238 from scratch #1. And when Transaction #4 asks for the same page, it will get it from Page #482 on scratch #2. If you got lost with the numbers, don’t worry, we did too.

The problem was… the failing issue was in transaction #5, and the problem was that it was access page #412 on scratch #1. And due to my change, we actually closed scratch #1. The problem is that we couldn’t figure out how this thing could actually happen. Crazy stuff. We tried reproducing this in all sort of crazy ways, but it would only fail on the most trivial of tests, and very unpredictably. And then we finally figured it out. Basically by tracing everything, putting locks on everything that moved or looked like it would move it I kicked it and plain head against wall rinse & repeat.

Eventually we focused on what happened around the location of the error. It always happened during a query, that was very consistent, when it happened. And finally we figured it out. We now use Lucene indexes stored inside Voron, and Lucene has some funky ideas about how it should be able to access the data. So we have to put a Voron transaction around the whole thing. And we have to flow the same transaction across multiple Lucene index input instances. So we put the transaction inside a thread local variable. And the query method is async.

I think that you can figure out what happened from here, right? When the async machinery jumped us threads, we would end up with a totally foreign transaction, our old transaction would be gone, and all of the carefully thought out premises that we have for transaction scope went out the window. Much cursing was to be heard.

So we did a quick fix and changed the ThreadLocal<Transaction> to be an AsyncLocal<Transaction>, so it would flow through the async calls. And then we run the tests, confident it would solve it. But it didn’t, in fact, we got the exact same error, in the exact same place, and we went back to head butting the wall to see who is smarter.

And then I realized that we were doing something else there. Lucene has the notion of cloning an input, which allows for multi threaded usage of an input. When we do that, we check if we are in the old transaction scope and can reuse previous work, or if we need to do the initialization for a new transaction. The problem was that we were doing this check by id.

Now, two transactions with the same id will always show the same data, period. However, how they do it is very different. Let us take a look at the Page Table diagram above. It shows that Page #412 is located on scratch #2 in position #8327. Now, we have a flushing background process that will take the data from the scratch file and move it to the data file. So the new Page Table will look like this:

Note that because the data on the data file in position #412 and scratch #2 in position #8327 is the same, that doesn’t actually matter. Except that when you have started in one transaction and started reading from scratch #2, then was bumped into another thread, and now are trying to keep on reading from the same place, only to end up blowing up entirely.

Once we have fixed this problem as well, all was well with the world. The sky wasn’t falling, and I was writing a blog post at midnight for relaxation.

Comments

Are you sure that the images are correct? image_16.png and image_19.png seem to be identical to me.

Carsten,

Thanks, I updated the post

I've been warning people in talks, workshops etc about ThreadLocal / ThreadStatic under async. Write up https://particular.net/blog/the-dangers-of-threadlocal

AsyncLocal is an option although I prefer http://www.planetgeek.ch/2016/04/26/avoid-threadstatic-threadlocal-and-asynclocal-float-the-state-instead/

Depending on the design that is not always achievable but most of the time it is. Yes it makes it more cumbersome because you need to "float" the state all over the place but still better than black magic ;)

Daniel,

That only works if you have very small amount of state, and code that you control completely.

In our case, we have to set a value, then call an external library which will then call our implementation of an interface the library implements.

For fun, the library will also freely clone and modify the instance we give it, and we need to handle it.

Comment preview