HotSpot Shell Game

One of the annoying things about performance work is that you sometimes feel like you have found the problem. You fix it, and another one pops out.

After the previous run of work, we have tackled two important perf issues:

- Immutable collections – which were replaced with more memory-intensive but hopefully faster safe collections.

- Transaction contention from background work – which we reduced and came close to eliminating.

Now that this is done, it is time to look at the code in the profiler again. Note that I am writing this while traveling on a train (on battery power), so you can pretty much ignore the actual numbers; what we want to look at are the percentages.

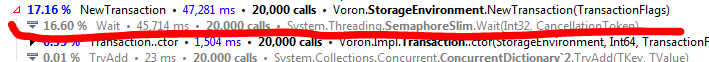

Let us look at the contention issue first. Here is the old code:

And the new one:

We still have some cost, but that appears to have been drastically cut down. I’ll call it good for now.

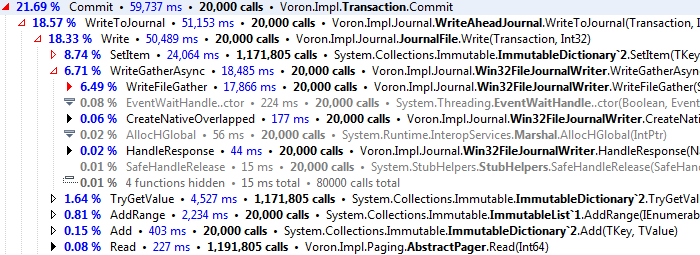

What about transaction commit? Here is the old code:

And here is the new one:

What we can see is that our change didn’t actually improve much of anything. In fact, we are worse off. We’ll probably go with a more complex system of chained dictionaries, rather than the plain copying that appears to be causing so much trouble.
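To make the difference between plain copying and chained dictionaries concrete, here is a minimal sketch in Python. It is not the code from this project: the `committed` page table and both `begin_*` helpers are hypothetical names, and `collections.ChainMap` merely stands in for whatever chaining scheme we end up with.

```python
from collections import ChainMap

# Hypothetical committed state: page number -> page contents.
committed = {n: f"page-{n}" for n in range(100_000)}

def begin_by_copying(state):
    # Plain copying: every new transaction pays O(len(state)) up front,
    # even if it only touches a handful of pages.
    return dict(state)

def begin_by_chaining(state):
    # Chaining: put an empty layer in front of the parent state.
    # Creating it is O(1); writes land in the new layer, and reads
    # fall through to the parent when the key is not found locally.
    return ChainMap({}, state)

tx = begin_by_chaining(committed)
tx[7] = "page-7-modified"        # stored in the transaction-local layer only

local_changes = tx.maps[0]       # just the pages this transaction touched
copied = begin_by_copying(committed)
```

The trade-off is that every lookup may have to walk the chain, so a scheme like this usually needs to merge layers back into the parent at some point to keep the chain short.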

However, there is something else to note now. Commit and NewTransaction together are less than 60% of the cost of the operation. The major cost is actually in adding an item to the in-memory tree:

In particular, it looks like finding the right page to place the item in is what is costing us so much. We added 2 million items (once sequentially, once randomly), which means that we need to do an O(log N) operation 2 million times. I don’t know if we can improve on the way it works for random writes, but we should be able to do something about how it behaves for sequential writes.
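One possible way to help sequential writes, sketched here in Python as an assumption rather than as what we will actually implement, is to check the last page before searching: if the new key is greater than everything already stored, it belongs at the end and the search can be skipped. `PagedIndex` is a toy structure invented for this illustration; it is not our tree.

```python
import bisect
import random

class PagedIndex:
    """Toy sorted index built from fixed-capacity pages (hypothetical)."""

    def __init__(self, page_size=64):
        self.page_size = page_size
        self.pages = [[]]               # each page is a sorted list of keys
        self.lows = [float("-inf")]     # lowest key each page is responsible for
        self.searches = 0               # inserts that had to search for a page
        self.fast_path = 0              # inserts that skipped the search

    def insert(self, key):
        last = self.pages[-1]
        # Fast path: the key sorts after everything we hold, so it goes
        # at the end of the last page (or onto a fresh page if that is full).
        if last and key > last[-1]:
            if len(last) >= self.page_size:
                self.pages.append([key])
                self.lows.append(key)
            else:
                last.append(key)
            self.fast_path += 1
            return
        # Slow path: search for the page that owns this key.
        self.searches += 1
        idx = bisect.bisect_right(self.lows, key) - 1
        page = self.pages[idx]
        bisect.insort(page, key)
        if len(page) > self.page_size:
            # Split an overfull page in half, keeping the pages ordered.
            mid = len(page) // 2
            right = page[mid:]
            del page[mid:]
            self.pages.insert(idx + 1, right)
            self.lows.insert(idx + 1, right[0])

    def keys(self):
        return [k for page in self.pages for k in page]

seq = PagedIndex()
for k in range(1000):
    seq.insert(k)

shuffled = list(range(1000))
random.Random(1).shuffle(shuffled)
rnd = PagedIndex()
for k in shuffled:
    rnd.insert(k)
```

With sequential keys, only the very first insert has to search; every later one takes the fast path. Random keys still pay for the search on most inserts, which matches the suspicion that the sequential case is the easier one to improve.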
