Reviewing Lightning memory-mapped database libraryWhat about free pages?

Okay, so in my last post (and last night, from my perspective), I wrote about the process in which LMDB maintains the B-Tree when it grows. But what about when it shrinks? And how does LMDB handle things like reusing space?

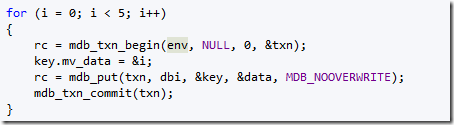

For that matter, we need to discuss another thing. As I understand this right now, LMDB is going to write a page once and only once .And when that is done, that page is now immutable. Updates to this page will result in a new page, and the old one will be marked for reuse. So you don’t actually have to worry about updating the page while others may be reading it. In other words, I would expect this code to use a lot of pages:

Actually, I would expect it to use two pages all the time. Alternating between the two after every transaction.

Indeed, following the code, it is easy to see that this magic happens during mdb_page_touch. We find (somehow, not sure how yet) a free page (or create a new one), and we mark the old one for reuse. That means that when you actually write to the data, you aren’t really writing to the old page, you are creating a new one. This drastically reduces a lot of complexity. However, it does mean that the db will be using more space, since every write transaction will use a minimum of one new page (and probably more, considering that there is the B-Tree to maintain). in fact, on average size dbs, I would be surprised if a single transaction could write less than 3 – 4 pages as a minimum. I’ll test the min number of pages per transaction in a bit, right now I want to focus on the implications of that decision.

Well, to start with, concurrency is easier. We aren’t writing to old data, so we have MVCC. The only thing we need to ensure is that we aren’t reusing a page before all of its transactions are complete. But it also has other implications, I haven’t confirmed my suspicions about transaction commits, but because we are writing to new locations, not modifying existing ones, this means that a crash midway would simply restore us to the previous state, and will not corrupt any data.

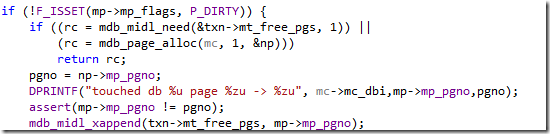

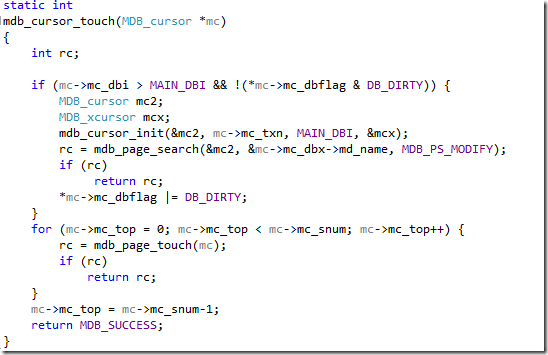

Since we don’t modify pages, it means that free pages aren’t just things that happen when we delete data, they happen all the time, so it would be interesting to see how LMDB handles them. Here is the relevant piece of the code:

So either find me a free page, or allocate a new one. Then add the existing page to the free list for the current transaction. A bit lower in the code we copy the data in the old page to the new one, and we are ready to go.

So, when we make updates, at least in this scenario, we are cycling between two pages, always allocating one and freeing the other, and on the next transaction we go the other way. This is really quite interesting, from the perspective of comparing that to other storage engines. CouchDB work in pretty much the same way, B-Tree with all the associated benefits. But CouchDB model is based on append only, and it cannot reuse the space in the file, which require compactions. LMDB model is much nicer in that regard. Since in both cases, in place updates are not allowed, there is a lot of wasted space that goes just for those updates. In LMDB’s case, that wasted space is a lot bigger, because it works in page sizes, and can reuse them. In CouchDB’s case, it doesn’t reuse space, so it doesn’t use pages (more space efficient this way).

Side note: C’s lack of native data structures beyond array is really annoying. You realize that in C, a Stack is a design pattern?!

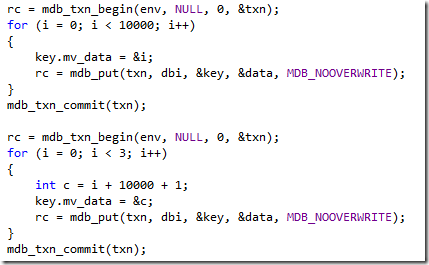

And about the minimum number of pages per transaction, let us see what is going on about that. So I wrote the following code:

This should generate a deep enough B-Tree to show what I want. And indeed, the action happens here:

Both the root node and the data node are modified, because of the cursor stack. It isn’t really a big deal, to be honest, since usually you would only need to update H pages (where H is the height of the tree) and H is very small for B-Trees.

But this leads to me to consider something else. Usually when talking about on disk structures, one of the more important things that you want to keep in mind is reducing seeks. But the B-Tree model that we have here would result in having pages pretty much all over the file. Now, in something like CouchDB, it doesn’t actually matter, because the file is append only, so you always end up scanning from the end of the file backward. But because LMDB is reusing pages, it is entirely possible for a root page to be in the middle of the file, the next level to be in the start and the actual data in the end.

Now, I am guessing that this isn’t actually an issue, because LMDB relies on the OS page cache to handle this, and that would take care of that. In practice, I expect, the branches of the tree are always going to be in memory, since they were the last written and the most accessed.

And that is enough for now. Next, I want to figure out how we return free pages to the system. In order to do that, I think that I’ll need to look at how we transactions are committed.

More posts in "Reviewing Lightning memory-mapped database library" series:

- (08 Aug 2013) MVCC

- (07 Aug 2013) What about free pages?

- (06 Aug 2013) Transactions & commits

- (05 Aug 2013) A thoughtful hiatus

- (02 Aug 2013) On page splits and other painful things

- (30 Jul 2013) On disk data

- (25 Jul 2013) Stepping through make everything easier

- (24 Jul 2013) going deeper

- (15 Jul 2013) Because, damn it!

- (12 Jul 2013) tries++

- (09 Jul 2013) Partial

Comments

Couldn't it happen that the new root page is written to disk before the new data pages are written? Then a power outage in between would cause inconsistency.

tobi: no. A transaction isn't committed until the meta page is updated. The meta page is not updated until after all data pages are synced. Anything written but not committed is ignored. LMDB is completely crash-proof.

Chu, for how long have you worked on LMDB?

What if the OS writes a page to disk without LMDB explicitly forcing a sync? That way a partially written page could be committed to disk (maybe just one word changed on it).

Tobi,

That doesn't matter, the header page hasn't been written yet. So on restart, we won't even care about that.

So I guess the page is only marked valid with the very last single-word write to its memory? Because only then a premature flushing to disk would do no harm.

Tobi,

Yeah, the header page contains the pointer to the trees, and that include the list of free space.

Until that is changed, nothing is commited, and the change is atomic.

Your comment that CouchDB makes more efficient use of space because it's not page-based is quite misleading. In an append-only btree that doesn't reuse space, within 20-30 transactions 99% of your space will consist of obsolete data. If you're not compacting continuously you will rapidly consume all of your available disk space. They think they've made a space/time tradeoff here, but it's no trade - you lose in write performance because your user writes always have to compete with the compaction writes. Same mistake as LSMs.

Howard,

I don't follow. Space comment wasn't in relation to couchdb.

And I agree WRT to compaction cost, but the question is really whatever you are under constant writes, or are do you have wait periods in which you can do background work.

Because if you can live with more space but faster response times, and more work during idle that, that actually work more nicely.

Ayende,

How do you explain this sentence, if not making a comparison about space between CouchDB and LMDB?

"In CouchDB’s case, it doesn’t reuse space, so it doesn’t use pages (more space efficient this way)."

Patrick,

Oh, I missed that one. You are correct, I was thinking about the first part of that paragraph.

Comment preview