One of the things that I noticed with the recent spate of work we have been doing is that we are doing things that we have already tried, and failed at. But suddenly we are far more successful. What is the difference?

Case in point, transaction merging and early lock release. Those links both go to our initial implementation that was written in 2013. That is four years ago. Yes, today I can tell you that transaction merging was able to give us a two orders of magnitude improvement and early lock release gave us a 45% boost in performance. But looking at the timeline, we rolled back early lock release in early 2014.

The complexity of the feature is certainly nontrivial, but the major point that led to its removal in 2014 was that it wasn’t worth it. That is, it didn’t pay enough to be worth the complexity it brought. When we sat down to design Voron for RavenDB 4.0, one of the first things that we sought to eliminate was transaction merging. We wanted Voron to be single threaded, by design.

And I still very much stand by those decisions. So how can I reconcile both statements? The core difference is where each feature lives, and what that means.

Transaction merging is no longer done by Voron. Instead, it is something that RavenDB does on top of Voron. But why?

When we had transaction merging in Voron, it meant that we had to submit transactional work to Voron in a format that it could understand. And Voron is a very low level library, so it doesn’t really understand much. This gave us a very small “vocabulary” to work with. More than that, it also meant that we had to deal with such features as explicit concurrency (at the Voron level, on top of the concurrency primitives exposed by RavenDB). Let us take the simplest example. We have two threads that want to write to the same document.

That means that we have to build the buffer we want to write in memory, then submit it to Voron with the right concurrency setting at the Voron level. This is after we have already checked the concurrency semantics at the RavenDB level, so we pay double to ensure that concurrency conflicts are properly handled at all levels. From the point of view of Voron, that meant merged transactions failing much more often (which kills performance) and much higher complexity overall when using it. Alongside that, we also had much higher memory usage, because we had to allocate buffers to hold the data we needed to write and then submit them to Voron, so the rate of allocations was much higher.

We still saw a performance improvement over not using it, but nothing as major as the two orders of magnitude that we see today. Another aspect of this is that when we built Voron, we built it to fit our existing architecture (which was built on top of Esent), so it reflects a lot of design decisions coming from there.

With RavenDB 4.0, we took a few giant steps back and decided to design the whole system as a single integrated piece. In fact, that meant that any attempt to do concurrency at the Voron level was abandoned. That meant that the moment you had a write transaction, you were safe from concurrency, and you didn’t have to worry about anyone modifying the data you were looking at. There was no need to allocate special buffers and hold them, because we are always writing directly to Voron, instead of buffering in memory.

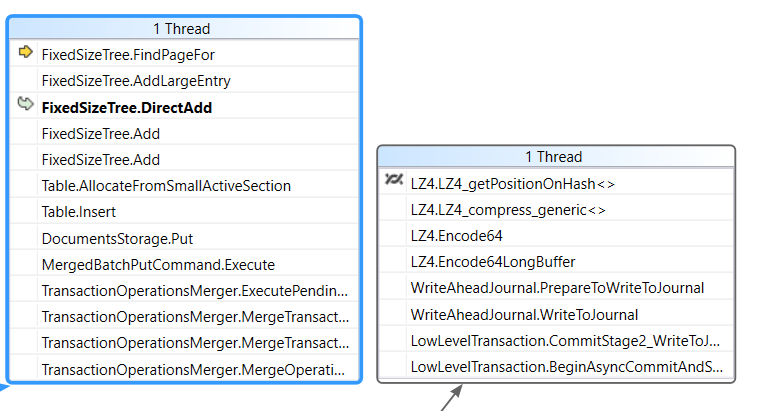

This was a dramatic simplification of the API and its usage, and it meant that the code is much more approachable and easier to understand, work with and make performant. Of course, it also meant that we had a serial lock, which is where the transaction merger became such a huge deal. But the point here is that this kind of transaction merging isn’t done at the Voron level, but at the RavenDB level, and instead of submitting primitive operations we can submit full-fledged work items, including logic. So writing a document is now done by the request thread parsing the document, preparing a MergedPutCommand class and submitting it to the transaction merger.

The transaction merger will then execute the command under a write transaction, and it will directly manipulate Voron. This means that we get both high concurrency and safety from concurrency issues at the same time. Early lock release plays into that as well. We had to modify Voron to allow that, but what we did was to build low level primitives that can be used by higher levels, without making assumptions on their usage.
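To make the shape of this concrete, here is a minimal sketch of the idea in Python rather than the actual RavenDB code. Everything in it is an assumption made for illustration: the `TransactionMerger` class, its `enqueue` method, the `max_batch` limit and the plain dictionary standing in for Voron are all hypothetical. Only the `MergedPutCommand` name comes from the text above, and its body here is invented. Request threads build a command and hand it over, and a single merger thread drains the queue and runs a whole batch of commands under one "write transaction".

```python
import queue
import threading
from concurrent.futures import Future


class MergedPutCommand:
    """Hypothetical work item: carries the data and the logic to apply it."""

    def __init__(self, doc_id, document):
        self.doc_id = doc_id
        self.document = document
        self.done = Future()  # completed once the batch has been committed

    def execute(self, store):
        # Runs on the merger thread, so no other writer can interfere.
        store[self.doc_id] = self.document


class TransactionMerger:
    """Hypothetical merger: one thread, one write transaction per batch."""

    def __init__(self, store, max_batch=128):
        self.store = store          # a dict standing in for the storage engine
        self.max_batch = max_batch
        self.commits = 0            # how many merged transactions were committed
        self._queue = queue.Queue()
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def enqueue(self, command):
        self._queue.put(command)
        return command.done

    def close(self):
        self._queue.put(None)       # sentinel: stop after the current batch
        self._thread.join()

    def _run(self):
        while True:
            first = self._queue.get()
            if first is None:
                return
            batch, stop = [first], False
            # Merge whatever else is already waiting into the same transaction.
            while len(batch) < self.max_batch:
                try:
                    command = self._queue.get_nowait()
                except queue.Empty:
                    break
                if command is None:
                    stop = True
                    break
                batch.append(command)
            for command in batch:   # the single "write transaction"
                command.execute(self.store)
            self.commits += 1       # one commit for the whole batch
            for command in batch:
                command.done.set_result(True)
            if stop:
                return
```

A request thread would call something like `merger.enqueue(MergedPutCommand("users/1", {"name": "Oren"})).result()` and block until its batch is committed. The sketch ignores failures inside `execute`, where a real system would have to roll back the batch and report the error to the callers.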

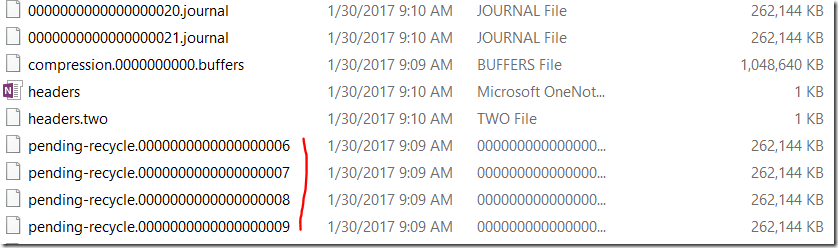

On the Voron side of things, we just have the notion of async commit (with a list of requirements that happen to exactly fit what is going on in the transaction merging portion of RavenDB), and the actual transaction lock handoff / early lock release is handled at a higher layer, with a lot more information about the system.
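The split between a low level async commit primitive and a higher level policy can be sketched roughly as follows. This is an illustration under stated assumptions, not Voron's API: the `Storage` class, `begin_async_commit`, `run_merger` and the simulated flush delay are all hypothetical names and behavior. The storage layer only knows how to start a flush and return a handle, while the layer above decides to start working on the next batch while the previous flush is still in flight, and to acknowledge a batch only once it is durable.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class Storage:
    """Hypothetical stand-in for the storage engine."""

    def __init__(self, flush_seconds=0.01):
        self.write_lock = threading.Lock()
        self.data = {}
        self.durable = []  # ids of batches whose flush has finished
        self.flush_seconds = flush_seconds
        self._io = ThreadPoolExecutor(max_workers=1)  # flushes run in order

    def begin_async_commit(self, batch_id):
        # Low level primitive: start the flush and return immediately.
        return self._io.submit(self._flush, batch_id)

    def _flush(self, batch_id):
        time.sleep(self.flush_seconds)  # simulated disk I/O
        self.durable.append(batch_id)
        return batch_id

    def close(self):
        self._io.shutdown(wait=True)


def run_merger(storage, batches):
    """Higher layer policy: overlap batch N+1's work with batch N's flush."""
    acknowledged = []
    pending = None
    for batch_id, writes in enumerate(batches):
        with storage.write_lock:
            # In-memory work for this batch; the previous flush may still run.
            storage.data.update(writes)
        if pending is not None:
            # Callers of the previous batch hear back only once it is durable.
            acknowledged.append(pending.result())
        pending = storage.begin_async_commit(batch_id)
    if pending is not None:
        acknowledged.append(pending.result())
    return acknowledged
```

Calling `run_merger(storage, [{"a": 1}, {"b": 2}])` applies each batch in turn and returns the batch ids in the order they became durable. The storage class makes no assumption about who calls it or why, which is the point: the decision about when to overlap work with I/O lives with the caller.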

The idea of transaction merging is deeply rooted in the design of RavenDB 4.0, and it isn’t something that we can (or want to) change. About 98% of all write work in RavenDB will always go through the transaction merger. That means that just releasing the lock isn’t really going to do much for us. The transaction merger thread will be busy waiting for the I/O to complete and then start a new transaction (re-acquiring the lock), so there isn’t actually any benefit here.
