RavenDB’s core philosophy is that It Just Works and that means that we try very hard to get things right. Conversely, that means that we are also trying to make it hard to do the wrong thing. Basically, we want to push you hard into the pit of success.


Part of that approach is what we call the governors: a set of features that detect and abort known bad behavioral patterns. I have already talked about Unbounded Result Sets, and I recently ran into this post, which shows how nasty, and how invisible, that problem can be.

Another governor we have in place is the session’s maximum request limit. A session is meant to be a scope: it has a very short duration and is typically used for a single request, processing a single message, etc. It is supposed to live only as long as the business transaction. Because the session is scoped, we can reason that a single session that is making a lot of database operations is probably doing something pretty bad.

For example, it might be calling the database in a loop. Those kinds of issues can be truly insidious. Let us look at the following code (taken from here):

This kind of thing is a silent performance killer. No one is likely to notice that it is happening, and it will silently increase the number of database operations that your application makes, leading to increased DB load, higher page load times and all sorts of problems associated with them.
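To make the pattern concrete, here is a minimal sketch of what "calling the database in a loop" looks like next to a batched alternative. `CountingDatabase`, `load` and `load_many` are hypothetical stand-ins invented for this illustration (they are not the RavenDB client API); all the class does is count round trips.

```python
class CountingDatabase:
    """Hypothetical in-memory stand-in for a remote database that counts round trips."""

    def __init__(self, documents):
        self._documents = documents
        self.requests = 0

    def load(self, doc_id):
        # One document, one round trip.
        self.requests += 1
        return self._documents[doc_id]

    def load_many(self, doc_ids):
        # Many documents, still a single round trip.
        self.requests += 1
        return [self._documents[doc_id] for doc_id in doc_ids]


def build_database():
    documents = {f"customers/{i}": {"name": f"Customer {i}"} for i in range(100)}
    documents.update(
        {f"orders/{i}": {"customer": f"customers/{i}", "total": 10 * i} for i in range(100)}
    )
    return CountingDatabase(documents)


order_ids = [f"orders/{i}" for i in range(100)]

# Chatty version: one request for the orders, then one more request per order.
chatty = build_database()
orders = chatty.load_many(order_ids)
chatty_names = [chatty.load(order["customer"])["name"] for order in orders]

# Batched version: two requests in total, no matter how many orders there are.
batched = build_database()
orders = batched.load_many(order_ids)
customers = batched.load_many([order["customer"] for order in orders])
batched_names = [customer["name"] for customer in customers]

print(chatty.requests, batched.requests)
```

Both versions produce the same list of names. The only difference is the number of round trips, which is exactly why the problem is so easy to miss.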

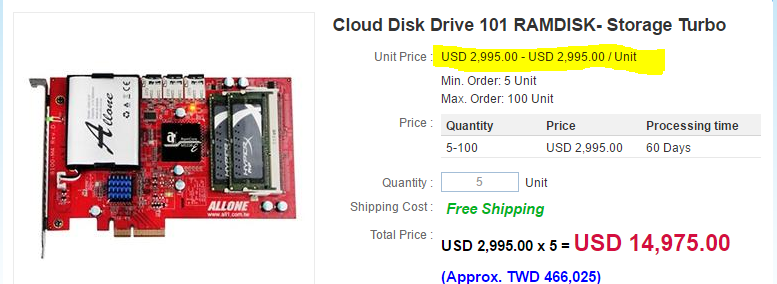

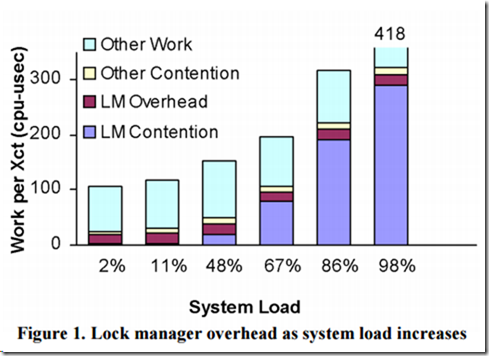

In one particular case, I saw a single page load generate 17,000 queries to the database. The software in question grew over time, and people assumed that this was just what it took to run the software. Their database server was a true monster (this was about a decade ago), with dedicated RAM disks, a high CPU count and a truly ridiculous amount of memory. Just to explain, we are talking about something like this:

Only this was a decade ago, and it had quite a bit of space. Now, this kind of beast can do 500K IOPS (I’m drooling just thinking about it), but it is damn expensive. To put things in perspective, I spent several weeks at that company working on this particular problem, and the cost of those weeks of work didn’t even cover the cost of the drive on that machine.

And on that monster, we were seeing page load times in the tens of seconds, and extremely high system load. I was able to bring it down to about 70 queries per page load, and their database server has pretty much idled ever since (IIRC, they turned that machine into a VM host for all the rest of their software, actually).

This is something that can bite.

To avoid that, we have the maximum number of requests per session, which will abort an excessive amount of database chatter. This has two important effects:

- It follows the principle of “better let one bad request die rather than take down the entire application”.

- It puts a budget on the number of calls that you can make.
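The idea behind both effects can be sketched in a few lines. This is only an illustration of the mechanism, not RavenDB's implementation: `Session`, `RequestLimitExceeded` and `handle_request` are hypothetical names, and the default budget of 30 is simply the value this sketch picks.

```python
class RequestLimitExceeded(Exception):
    """Raised when a session goes over its request budget (hypothetical name)."""


class Session:
    """Hypothetical short-lived session that counts every remote call it makes."""

    def __init__(self, max_requests=30):
        self.max_requests = max_requests
        self.request_count = 0

    def _register_request(self):
        # Count first, then check: the call that exceeds the budget is the one that fails.
        self.request_count += 1
        if self.request_count > self.max_requests:
            raise RequestLimitExceeded(
                f"session exceeded its budget of {self.max_requests} requests"
            )

    def load(self, doc_id):
        self._register_request()
        return {"id": doc_id}  # stand-in for a real network call


def handle_request(doc_ids, max_requests=30):
    """One session per business transaction; a chatty request fails alone."""
    session = Session(max_requests)
    try:
        return [session.load(doc_id) for doc_id in doc_ids]
    except RequestLimitExceeded:
        return None  # this one request dies, the application keeps running


well_behaved = handle_request([f"users/{i}" for i in range(5)])
chatty = handle_request([f"users/{i}" for i in range(100)])
relaxed = handle_request([f"users/{i}" for i in range(100)], max_requests=200)
```

Here `well_behaved` gets its five documents, `chatty` is aborted once it goes past the budget, and `relaxed` shows the same workload succeeding when the caller deliberately raises the limit.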

Now, that budget is actually really interesting. Because we have it, we need to think about how to reduce the number of database calls needed to process a request. That led to a whole bunch of features: Lazy requests, Includes and Transformers, to name just a few.

That had a positive unintended consequence. RavenDB is fast, really fast, but it is also typically deployed as a network database. That means that each database call actually goes over the network, and we all remember our fallacies, right?

In our profiling, we found that most often, the real cost in a RavenDB application was the back & forth chatter with the database. Reducing the number of requests we make to the server has an immediate benefit. And RavenDB allows you to do that by pipelining requests with Lazy, predicting requests with Includes or running the whole thing on the server side with Transformers.
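As a rough illustration of the pipelining idea, the sketch below queues several loads and sends them to the server in one round trip the first time any value is needed. `LazySession`, `Lazy`, `load_lazily` and `fake_server` are hypothetical names made up for this example; they only mimic the general shape of lazy requests and are not the RavenDB client API.

```python
class Lazy:
    """Placeholder for a document that has been requested but not fetched yet."""

    def __init__(self, session, doc_id):
        self._session = session
        self.doc_id = doc_id
        self._value = None
        self._loaded = False

    def resolve(self, value):
        self._value = value
        self._loaded = True

    @property
    def value(self):
        # The first access flushes every pending request in a single round trip.
        if not self._loaded:
            self._session.execute_pending()
        return self._value


class LazySession:
    """Hypothetical session that batches queued loads into one server call."""

    def __init__(self, server):
        self._server = server  # callable: list of ids -> list of documents
        self._pending = []
        self.requests = 0

    def load_lazily(self, doc_id):
        lazy = Lazy(self, doc_id)
        self._pending.append(lazy)
        return lazy

    def execute_pending(self):
        if not self._pending:
            return
        pending, self._pending = self._pending, []
        self.requests += 1  # one round trip for the whole batch
        results = self._server([lazy.doc_id for lazy in pending])
        for lazy, result in zip(pending, results):
            lazy.resolve(result)


def fake_server(doc_ids):
    # Stand-in for the remote database.
    return [{"id": doc_id} for doc_id in doc_ids]


session = LazySession(fake_server)
user = session.load_lazily("users/1")
cart = session.load_lazily("carts/1")
prefs = session.load_lazily("preferences/1")

first = user.value  # triggers the single round trip for all three loads
print(session.requests, cart.value["id"], prefs.value["id"])
```

Three logical loads cost one request against the session's budget, which is the kind of saving the features above are aiming for.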

And, like all governors, you can control it. RavenDB allows you to decide what the limit should be (on that particular session or globally), based on your actual needs and environment.

We spent some time recently looking into a lot of our old design decisions. Some of them make very little sense today (JSON vs. blittable is a good example), but made perfect sense at the time, and were essential to actually getting the product out.
