One accusation that has been leveled at me often is that I keep writing my own implementation of Xyz (where Xyz is just about anything). The main problem is that I can go overboard with that, but for the most part, I think that I have managed to strike the right balance. Wherever possible, I reuse existing code, but when I run into problems that are easier to solve by creating my own solution, I go with that.

A case in point is the JSON parser inside RavenDB. From the get go, I used Newtonsoft.Json.dll. There wasn’t much to think of, this is the default implementation from my point of view. And indeed, it has been an extremely fine choice. It is a rich library, it is available for .NET 3.5, 4.0 & Silverlight and it meant that I had opened up a lot of extensibility for RavenDB users.

Overall, I am very happy. Except… there was just one problem: with large JSON documents, the library showed some performance issues. In particular, a 3 MB JSON file took almost half a second to parse. That was… annoying. Admittedly, most documents tend to be smaller than that, but it also reflected on overall performance when batching, querying, etc. When you are querying, you are also building large JSON documents (a single document that contains a list of results, for example), so that problem was quite pervasive for us.

I set out to profile things, and discovered that the actual cost wasn’t in the JSON parsing itself, that part was quite efficient. The costly part was actually in building the JSON DOM (JObject, JArray, etc). When people usually think about JSON serialization performance, they generally think about the perf from and to .NET objects. The overriding cost in that sort of serialization is actually how fast you can call the setters on the objects. Indeed, when looking at perf metrics on the subject, most of the comparisons were concentrated on that aspect almost exclusively.

That makes sense, since for the most part, that is how people use it. But for RavenDB, we are using the JSON DOM for pretty much everything. This is how we are representing a document, after all, and that idea is pretty central to a document database.

Before setting out to write our own, I looked at other options.

ServiceStack.Json - that one was problematic for three reasons:

- It wasn’t nearly as rich in terms of API and functionality.

- It was focused purely on serializing to and from .NET objects, with no JSON DOM support.

- The only input format it had was a string.

The last one deserves a bit of explanation. We cannot afford to use a JSON implementation that accepts a string as input, because that JSON object we are reading may be arbitrarily large. Using a string means that we have to allocate all of that information up front. Using a stream, however, means that we can allocate far less information and reduce our overall memory consumption.
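To make the string-versus-stream point concrete, here is a minimal sketch. It is not code from any of the libraries mentioned; `JsonDepthScanner` and `MaxDepth` are hypothetical names I made up for illustration. It computes the maximum nesting depth of a JSON text by pulling characters from a `TextReader` one at a time, so the memory it needs does not grow with the size of the document, which is exactly what reading the whole thing into a string first cannot give you.

```csharp
using System;
using System.IO;

public static class JsonDepthScanner
{
    // Returns the deepest level of object/array nesting in the JSON text.
    // Assumes well-formed JSON; this is a scanner, not a validator.
    public static int MaxDepth(TextReader reader)
    {
        int depth = 0, max = 0;
        bool inString = false, escaped = false;
        int c;
        while ((c = reader.Read()) != -1)
        {
            char ch = (char)c;
            if (inString)
            {
                // Brackets inside string literals must not affect the depth.
                if (escaped) escaped = false;          // char after a backslash is literal
                else if (ch == '\\') escaped = true;
                else if (ch == '"') inString = false;
                continue;
            }
            if (ch == '"') inString = true;
            else if (ch == '{' || ch == '[') max = Math.Max(max, ++depth);
            else if (ch == '}' || ch == ']') depth--;
        }
        return max;
    }
}
```

Passing `new StreamReader(File.OpenRead(path))` scans a file of any size with only the reader's small internal buffer in memory, while the string-based equivalent has to allocate the full text before it can look at the first character.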

System.Json – that one had one major problem:

- Only available on Silverlight, FAIL!

I literally didn’t even bother to try anything else with it. The other libraries we looked at had the same or similar issues; mostly, the problem was that no JSON DOM was available.

That sucked. I was seriously not looking forward to writing my own JSON parser, especially since I would have to add all the bells & whistles of Newtonsoft.Json. :-(

Wait, I can hear you say, the project is open source, why not just fix the performance problem? Well, we have looked into that as well.

The actual problem is pretty much at the core of how the JSON DOM is implemented in the library. All of the JSON DOM types are basically linked lists, and all operations on the DOM are O(N). With large documents, that really starts to hurt. We looked into what it would take to modify that, but it turned out that it would have to be either a breaking change (which pretty much killed the notion that it would be accepted by the project) or a very expensive change. That is especially true since the JSON DOM is quite rich in functionality (from dynamic support to INotifyPropertyChanged to serialization to… well, you get the point).
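As a rough illustration of why that hurts, here is a simplified sketch. It is not Newtonsoft.Json's actual internals, and `LinkedProperties` is a made-up name. It stores properties in a linked list and counts how many nodes a lookup by name has to visit.

```csharp
using System.Collections.Generic;

// Properties chained together; finding one means walking the chain: O(N).
public class LinkedProperties
{
    private readonly LinkedList<KeyValuePair<string, string>> items =
        new LinkedList<KeyValuePair<string, string>>();

    // Running total of nodes touched by lookups, to make the cost visible.
    public int NodesVisited { get; private set; }

    public void Add(string name, string value)
    {
        items.AddLast(new KeyValuePair<string, string>(name, value));
    }

    public bool TryGetValue(string name, out string value)
    {
        foreach (var pair in items)
        {
            NodesVisited++;
            if (pair.Key == name)
            {
                value = pair.Value;
                return true;
            }
        }
        value = null;
        return false;
    }
}
```

With 1,000 properties, fetching the last one touches all 1,000 nodes. A `Dictionary<string, string>` answers the same question with a hash lookup, and the cost of that lookup does not depend on how many properties the object has.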

Then I thought about something else: can we create our own JSON DOM, but rely on Newtonsoft.Json to fill it up for us? As it turned out, we could! So we basically took the existing JSON DOM and stripped out everything that we weren’t using. Then we changed the linked list support to a List and Dictionary, wrote a few adapters (RavenJTokenReader, etc) and we were off to the races. We were able to utilize quite a large fraction of the things that Newtonsoft.Json already did, we resolved the performance problem and didn’t have to implement nearly as much as I feared we would.
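The split between "someone else parses, we own the DOM" can be sketched roughly like this. Everything here is an assumption for illustration: `Token`, `TokenKind` and `DomBuilder` are hypothetical stand-ins for the events a JSON reader produces, not the real Raven.Json or Newtonsoft.Json types. Objects become a `Dictionary<string, object>` and arrays become a `List<object>`.

```csharp
using System;
using System.Collections.Generic;

public enum TokenKind { StartObject, EndObject, StartArray, EndArray, PropertyName, Value }

// Hypothetical reader event: a kind plus an optional payload.
public struct Token
{
    public TokenKind Kind;
    public object Value;   // property name or scalar value; null for structural tokens
    public Token(TokenKind kind, object value = null) { Kind = kind; Value = value; }
}

public static class DomBuilder
{
    // Consumes the tokens of exactly one JSON value and returns it as a
    // Dictionary<string, object> (object), a List<object> (array) or a scalar.
    public static object Read(IEnumerator<Token> tokens)
    {
        if (!tokens.MoveNext())
            throw new InvalidOperationException("Unexpected end of tokens");
        return ReadCurrent(tokens);
    }

    private static object ReadCurrent(IEnumerator<Token> tokens)
    {
        var token = tokens.Current;
        switch (token.Kind)
        {
            case TokenKind.StartObject:
                var obj = new Dictionary<string, object>();
                while (tokens.MoveNext() && tokens.Current.Kind != TokenKind.EndObject)
                {
                    var name = (string)tokens.Current.Value;  // expected: a PropertyName token
                    obj[name] = Read(tokens);                 // advance to and read the value
                }
                return obj;
            case TokenKind.StartArray:
                var list = new List<object>();
                while (tokens.MoveNext() && tokens.Current.Kind != TokenKind.EndArray)
                    list.Add(ReadCurrent(tokens));
                return list;
            case TokenKind.Value:
                return token.Value;
            default:
                throw new InvalidOperationException("Unexpected token: " + token.Kind);
        }
    }
}
```

The builder never looks at raw text, so the tokenizer behind it can be swapped freely. That is the property that lets an existing parser keep doing the hard work while the DOM underneath changes.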

Phew!

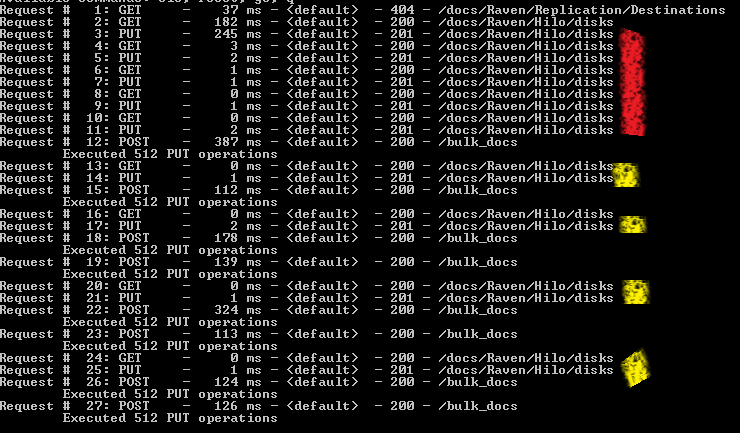

Now, let us look at the actual performance results. This is using a 3 MB JSON file:

- Newtonsoft Json.NET - Reading took 413 ms

- Using Raven.Json - Reading took 140 ms

That is roughly three times faster (413 / 140 ≈ 2.95), which is quite an improvement, even if I say so myself :-)

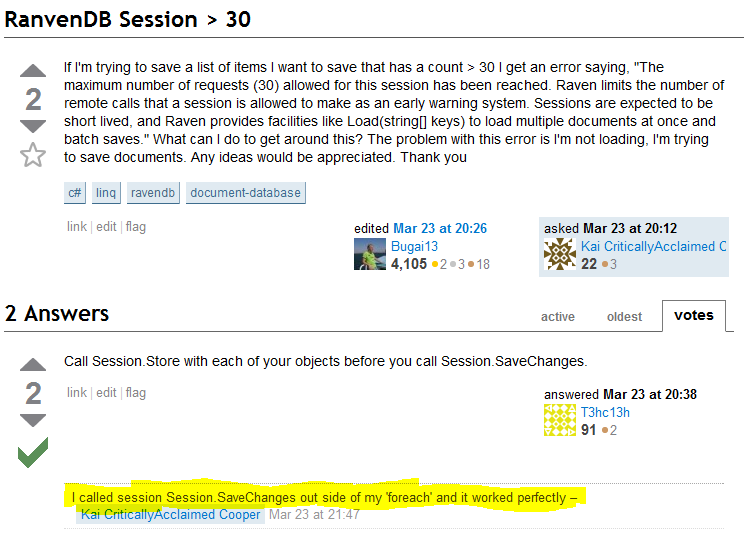

The next stage was actually quite interesting, because it was unique to how we are using the JSON DOM in RavenDB. In order to save the parsing cost (which, even when optimized, is still significant), we cache the parsed DOM in memory. The problem with caching mutable information is that you have to return a clone of the information, and not the actual information (because then it would be mutated by the caller, corrupting the cached copy).

Newtonsoft.Json supports object cloning, which is excellent. Except for one problem. Cloning is also an O(N) operation. With Raven.Json, the cost is somewhat lower. But the main problem is that we still need to copy the entire large object.
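Here is a minimal sketch of that clone-on-read pattern, using a flat `Dictionary<string, string>` as a stand-in for a document. `CloningCache` is a hypothetical name, not a RavenDB type. It protects the cached copy, but every read pays for a full copy.

```csharp
using System.Collections.Generic;

public class CloningCache
{
    private readonly Dictionary<string, Dictionary<string, string>> cache =
        new Dictionary<string, Dictionary<string, string>>();

    public void Put(string id, Dictionary<string, string> document)
    {
        // Copy on the way in, so later changes by the caller don't leak into the cache.
        cache[id] = new Dictionary<string, string>(document);
    }

    // Copies every entry on each read: safe, but O(N) in the document size.
    public Dictionary<string, string> Get(string id)
    {
        return new Dictionary<string, string>(cache[id]);
    }
}
```

A caller can change the returned dictionary freely and the next `Get` still sees the original values. The price is that a document with N entries costs N copies per read, even if the caller only wanted to look at one field.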

In order to resolve this exact issue, we introduced a feature called snapshots to the mix. Any object can be turned into a snapshot. A snapshot is basically a read-only version of the object, which we then wrap in another object that provides local mutability while preserving the immutable state of the parent object.

It is much easier to explain in code, actually:

    public void Add(string key, RavenJToken value)
    {
        if (isSnapshot)
            throw new InvalidOperationException("Cannot modify a snapshot, this is probably a bug");

        if (ContainsKey(key))
            throw new ArgumentException("An item with the same key has already been added: " + key);

        LocalChanges[key] = value; // we can't use Add, because LocalChanges may contain a DeletedMarker
    }

    public bool TryGetValue(string key, out RavenJToken value)
    {
        value = null;
        RavenJToken unsafeVal;
        if (LocalChanges != null && LocalChanges.TryGetValue(key, out unsafeVal))
        {
            if (unsafeVal == DeletedMarker)
                return false;

            value = unsafeVal;
            return true;
        }

        if (parentSnapshot == null || !parentSnapshot.TryGetValue(key, out unsafeVal) || unsafeVal == DeletedMarker)
            return false;

        value = unsafeVal;
        return true;
    }

If the value is in the local changes, we use that; otherwise, if the value is in the parent snapshot, we use that. We have the notion of local deletes, but that is about it. All changes happen to the LocalChanges.

What this means, in turn, is that for caching scenarios, we can very easily and effectively create a cheap copy of the item without having to copy all of its contents. Whereas cloning the 3 MB JSON object in Newtonsoft.Json can take over 100 ms, we can create a snapshot (it involves a clone, so the first time is actually expensive, around the same cost as in Newtonsoft.Json) and from the moment we have a snapshot, we can generate children of the snapshot at virtually no cost.
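To show the whole idea in one place, here is a self-contained sketch of the pattern. It is a simplification under my own assumptions, not the actual Raven.Json code: `SnapshotDictionary` and its method names are chosen for illustration, values are plain `object`s, and freezing happens in place instead of via the initial clone mentioned above.

```csharp
using System;
using System.Collections.Generic;

public class SnapshotDictionary
{
    // Sentinel stored in localChanges to hide a key that exists in the parent.
    private static readonly object DeletedMarker = new object();

    private readonly SnapshotDictionary parent;   // read-only base; null for a root
    private readonly Dictionary<string, object> localChanges = new Dictionary<string, object>();
    private bool isSnapshot;

    public SnapshotDictionary() { }
    private SnapshotDictionary(SnapshotDictionary parent) { this.parent = parent; }

    public bool TryGetValue(string key, out object value)
    {
        value = null;
        object found;
        if (localChanges.TryGetValue(key, out found))
        {
            if (found == DeletedMarker) return false;  // reference comparison: deleted locally
            value = found;
            return true;
        }
        if (parent == null || !parent.TryGetValue(key, out found))
            return false;
        value = found;
        return true;
    }

    public void Set(string key, object value)
    {
        EnsureWritable();
        localChanges[key] = value;
    }

    public void Remove(string key)
    {
        EnsureWritable();
        if (parent != null) localChanges[key] = DeletedMarker;  // hide the parent's value
        else localChanges.Remove(key);
    }

    // Freezes this instance so it can be shared safely between readers.
    public void EnsureSnapshot() { isSnapshot = true; }

    // O(1): the child starts empty and reads through to this snapshot.
    public SnapshotDictionary CreateSnapshot()
    {
        if (!isSnapshot)
            throw new InvalidOperationException("Call EnsureSnapshot() before creating children");
        return new SnapshotDictionary(this);
    }

    private void EnsureWritable()
    {
        if (isSnapshot)
            throw new InvalidOperationException("Cannot modify a snapshot, this is probably a bug");
    }
}
```

A cache would keep the frozen instance and hand out `CreateSnapshot()` results. Each caller gets something that behaves like a private copy, yet only the keys that caller actually changes are ever stored.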

Overall, I am quite satisfied with it.

Oh, and in our test runs, for large documents, we got a 100% performance improvement from this single change.