Paul Barriere has a video up of a presentation about Rhino ETL:

ETL stands for Extract, Transform, Load. For example, you receive files or other data from vendors or other third parties which you need to manipulate in some way and then insert into your own database. Rhino ETL is an open source C# package that I have used for dozens of production processes quite successfully. By using C# for your ETL tasks you can create testable, reusable components more easily than with tools like SSIS and DTS.

It is good to see more information available on Rhino ETL.

I found this ebook very informative. I was very happy to learn that I had at least thought about most of the things that the book recommends, but I wish I had read it two years ago.

If you are an ISV, I highly recommend it, because it is not just some dry academic reading. It contains examples that you can easily relate to, probably examples that you are already familiar with.

Yesterday I gave a visual explanation about map/reduce, and the question came up about how to handle computing navigation instructions using map/reduce. That made it clear that while (I hope) what map/reduce is might be clearer, what it is for is not.

Map/reduce is a technique aimed at solving a very simple problem: you have a lot of data and you want to go through it in parallel, probably on multiple machines. The whole idea of the concept is that you can crunch through massive data sets in a realistic time frame. In order for map/reduce to be useful, you need several things:

- The calculation that you need to run is one that can be composed. That is, you can run the calculation on a subset of the data, and merge it with the result of another subset.

- Most aggregation / statistical functions allow this, in one form or another.

- The final result is smaller than the initial data set.

- The calculation has no dependencies on external input, only on the dataset being processed.

- Your dataset size is big enough that splitting it up for independent computations will not hurt overall performance.
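To make the first condition concrete, here is a minimal Python sketch of a composable calculation. The names `partial`, `merge` and `average` are made up for illustration. An average is computed as (sum, count) pairs, so the result for one subset can be merged with the result for another:

```python
from functools import reduce

def partial(chunk):
    """Summarise one subset of the data as a (sum, count) pair."""
    return (sum(chunk), len(chunk))

def merge(a, b):
    """Combine two partial results; the order of merging does not matter."""
    return (a[0] + b[0], a[1] + b[1])

def average(chunks):
    """Run the calculation per chunk, then merge the partial results."""
    total, count = reduce(merge, (partial(c) for c in chunks))
    return total / count

data = list(range(1, 101))
# Pretend that each chunk lives on a different machine.
chunks = [data[i:i + 25] for i in range(0, len(data), 25)]
result = average(chunks)
```

Note that the partial result is also far smaller than the chunk it summarises, which is the third condition in the list.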

Now, given those assumptions, you can create a map/reduce job, and submit it to a cluster of machines that would execute it. I am ignoring data locality and failover to make the explanation simple, although they do make for interesting wrinkles in the implementation.

Map/reduce is not applicable, however, in scenarios where the dataset alone is not sufficient to perform the operation. In the case of the navigation computation example, you can’t really handle this via map/reduce because you lack key data points (the starting and ending points). Trying to compute paths from all points to all other points is probably a losing proposition, unless you have a very small graph. The same applies if you have a 1:1 mapping between input and output. Oh, map/reduce will still work, but the resulting output is probably going to be too big to be really useful. It also means that you have a simple parallel problem, not a map/reduce sort of problem.

If you need fresh results, map/reduce isn’t applicable either. It is inherently a batch operation, not an online one. Trying to invoke a map/reduce operation for a user request is going to be very expensive, and not something that you really want to do.

Another set of problems that you can’t really apply map/reduce to are recursive problems, Fibonacci being the most well known among them. You cannot apply map/reduce to Fibonacci for the simple reason that you need the previous values before you can compute the current one. That means that you can’t break it apart into sub computations that can be run independently.

If your data size is small enough to fit on a single machine, it is probably going to be faster to process it as a single reduce(map(data)) operation than to go through the entire map/reduce process (which requires synchronization). In the end, map/reduce is just a simple paradigm for gaining concurrency, and as such it is subject to the benefits and limitations of all parallel programs. The most basic one of them is Amdahl's law.
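For reference, Amdahl's law in its usual form is shown here. The symbols are my own choice: $p$ is the fraction of the work that can run in parallel, and $n$ is the number of machines.

$$S(n) = \frac{1}{(1 - p) + \frac{p}{n}}$$

As $n$ grows, the speedup approaches $1 / (1 - p)$. If, say, 10% of the job is synchronization that cannot be parallelized ($p = 0.9$), no cluster, however large, will give you more than a 10x speedup.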

Map/reduce is a very hot topic, but you need to realize what it is for. It isn’t some magic formula from Google to make things run faster, it is just Select and GroupBy, run over a distributed network.

Map/Reduce is a term commonly thrown about these days. In essence, it is just a way to take a big task and divide it into discrete tasks that can be done in parallel. A common use case for Map/Reduce is in document databases, which is why I found myself thinking deeply about this.

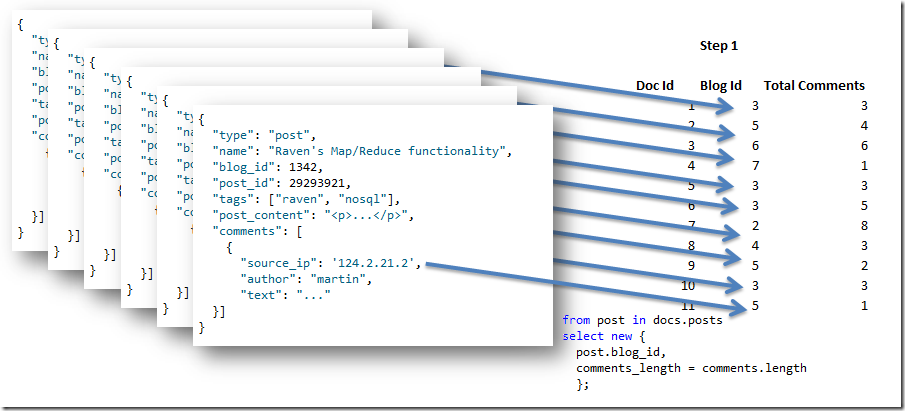

Let us say that we have a set of documents with the following form:

    {
      "type": "post",
      "name": "Raven's Map/Reduce functionality",
      "blog_id": 1342,
      "post_id": 29293921,
      "tags": ["raven", "nosql"],
      "post_content": "<p>...</p>",
      "comments": [
        {
          "source_ip": "124.2.21.2",
          "author": "martin",
          "text": "..."
        }
      ]
    }

And we want to answer a question over more than a single document. That sort of operation requires us to use aggregation, and over large amounts of data, that is best done using Map/Reduce, to split the work.

Map / Reduce is just a pair of functions, operating over a list of data. In C#, LINQ actually gives us a great chance to do things in a way that makes it very easy to understand and work with. Let us say that we want to be able to get a count of comments per blog. We can do that using the following Map / Reduce queries:

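    // Map: project each post to its blog id and comment count
    from post in docs.posts
    select new { post.blog_id, comments_length = post.comments.length };

    // Reduce: sum the comment counts per blog id
    from agg in results
    group agg by agg.blog_id into g
    select new { blog_id = g.Key, comments_length = g.Sum(x => x.comments_length) };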

There are a couple of things to note here:

- The first query is the map query, it maps the input document into the final format.

- The second query is the reduce query, it operates over a set of results and produces an answer.

- Note that the reduce query must return its result in the same format that it received it, why will be explained shortly.

- The first value in the result is the key, which is what we are aggregating on (think the group by clause in SQL).
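For readers who do not use LINQ, the same pair of functions can be sketched in plain Python. This is only an illustration of the idea, not how any particular document database runs it, and the function names `map_posts` and `reduce_counts` are hypothetical:

```python
from collections import defaultdict

def map_posts(docs):
    """Map: project each post document to the shape the reduce step works on."""
    return [{"blog_id": d["blog_id"], "comments_length": len(d["comments"])}
            for d in docs if d.get("type") == "post"]

def reduce_counts(results):
    """Reduce: sum comments_length per blog_id; output has the same shape as the input."""
    totals = defaultdict(int)
    for r in results:
        totals[r["blog_id"]] += r["comments_length"]
    return [{"blog_id": k, "comments_length": v} for k, v in sorted(totals.items())]

# Made-up sample documents, trimmed to the fields the queries use.
docs = [
    {"type": "post", "blog_id": 1342, "comments": [{"author": "martin"}]},
    {"type": "post", "blog_id": 1342, "comments": [{}, {}]},
    {"type": "post", "blog_id": 7, "comments": []},
]
mapped = map_posts(docs)
reduced = reduce_counts(mapped)
```

Because the reduce output has the same shape as its input, feeding `reduced` back into `reduce_counts` is valid and returns the same rows.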

Let us see how this works, we start by applying the map query to the set of documents that we have, producing this output:

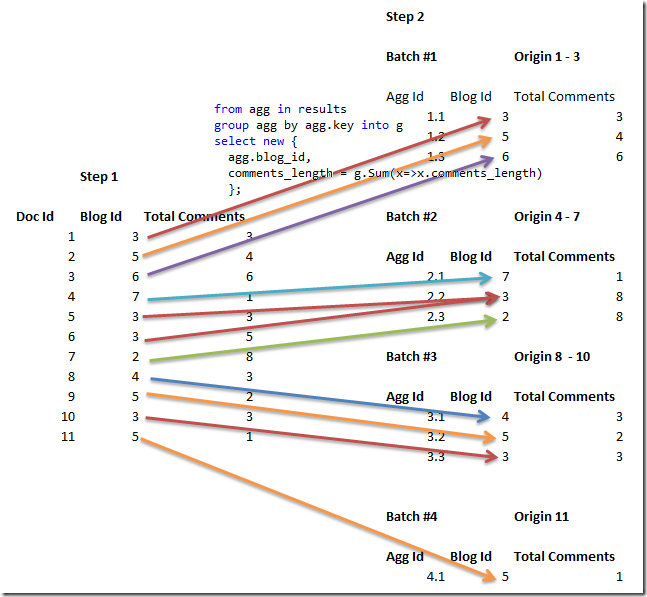

The next step is to start reducing the results, in real Map/Reduce algorithms, we partition the original input, and work toward the final result. In this case, imagine that the output of the first step was divided into groups of 3 (so 4 groups overall), and then the reduce query was applied to it, giving us:

You can see why it was called reduce, for every batch, we apply a sum by blog_id to get a new Total Comments value. We started with 11 rows, and we ended up with just 10. That is where it gets interesting, because we are still not done, we can still reduce the data further.

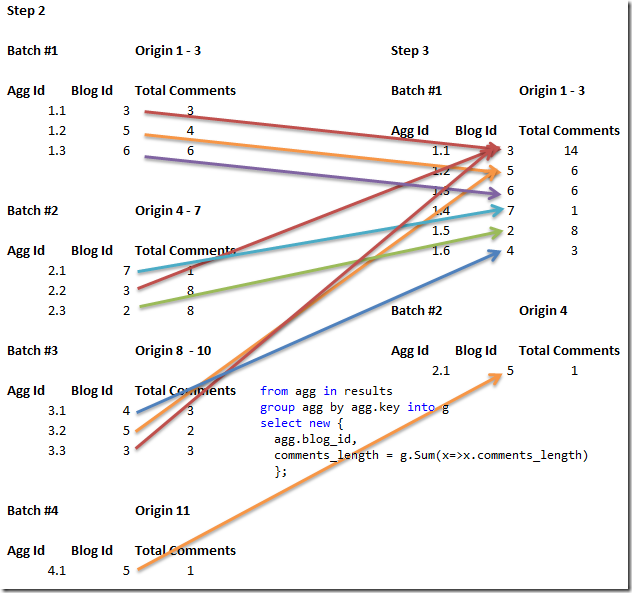

This is what we do in the third step, reducing the data further still. That is why the input & output format of the reduce query must match: we will feed the output of several of the reduce queries as the input of a new one. You can also see that now we moved from having 10 rows to having just 7.
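The repeated reduction can be sketched in a few lines of Python. This is a simplified model with made-up data, in which rows are `(blog_id, comment_count)` tuples and `reduce_in_batches` is a hypothetical helper. It keeps reducing batches of 3 until a pass no longer shrinks the data, then does one last reduce over what is left:

```python
from collections import defaultdict

def reduce_counts(rows):
    """Sum the counts per blog_id; input and output are both (blog_id, count) rows."""
    totals = defaultdict(int)
    for blog_id, count in rows:
        totals[blog_id] += count
    return sorted(totals.items())

def reduce_in_batches(rows, batch_size=3):
    """Reduce fixed-size batches repeatedly, feeding each pass's output into the next."""
    while True:
        batches = [rows[i:i + batch_size] for i in range(0, len(rows), batch_size)]
        reduced = [row for batch in batches for row in reduce_counts(batch)]
        if len(reduced) == len(rows):
            # No batch shrank; one final reduce merges keys split across batches.
            return reduce_counts(reduced)
        rows = reduced

# 11 made-up mapped rows, in the spirit of the walkthrough above.
rows = [(1, 2), (1, 1), (2, 4), (1, 3), (3, 1), (3, 2),
        (2, 1), (2, 2), (4, 5), (4, 1), (1, 1)]
final = reduce_in_batches(rows)
```

The batched result is the same as reducing everything in one go, which is exactly what the matching input and output formats buy us.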

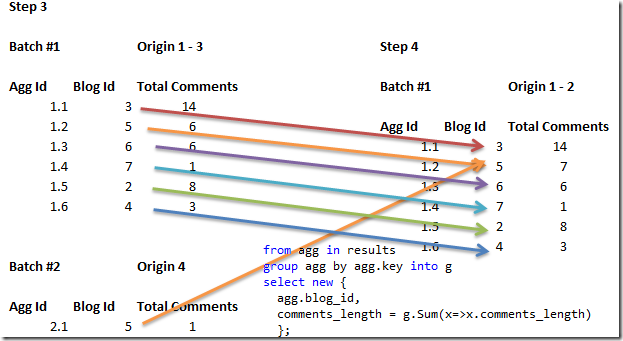

And the final step is:

And now we are done, we can't reduce the data any further because all the keys are unique.

There is another interesting property of Map / Reduce, let us say that I just added a comment to a post, that would obviously invalidate the results of the query, right?

Well, yes, but not all of them. Assuming that I added a comment to the post whose id is 10, what would I need to do to recalculate the right result?

- Map Doc #10 again

- Reduce Step 2, Batch #3 again

- Reduce Step 3, Batch #1 again

- Reduce Step 4

What is important is that I did not have to touch quite a bit of the data, making the recalculation effort far cheaper than it would be otherwise.
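Here is a rough Python sketch of that kind of partial recalculation. It is a simplification under my own assumptions: there are only two reduce levels instead of the steps in the walkthrough, and the `IncrementalCount` class and its fields are hypothetical names. The per-batch results are kept around, so changing one document only re-maps that document, re-reduces its batch, and re-runs the final reduce:

```python
from collections import defaultdict

def map_doc(doc):
    """Map a single post to a (blog_id, comment_count) row."""
    return (doc["blog_id"], len(doc["comments"]))

def reduce_counts(rows):
    """Sum the counts per blog_id."""
    totals = defaultdict(int)
    for blog_id, count in rows:
        totals[blog_id] += count
    return sorted(totals.items())

class IncrementalCount:
    def __init__(self, docs, batch_size=3):
        self.batch_size = batch_size
        self.mapped = [map_doc(d) for d in docs]
        # Cached result of reducing each batch of mapped rows.
        self.batches = [reduce_counts(self.mapped[i:i + batch_size])
                        for i in range(0, len(self.mapped), batch_size)]
        self.result = self._final()

    def _final(self):
        return reduce_counts([row for batch in self.batches for row in batch])

    def update(self, index, doc):
        """Re-map one document and redo only the reductions that depend on it."""
        self.mapped[index] = map_doc(doc)
        b = index // self.batch_size
        start = b * self.batch_size
        self.batches[b] = reduce_counts(self.mapped[start:start + self.batch_size])
        self.result = self._final()

# Made-up documents: post i belongs to blog i % 3 and has i comments.
docs = [{"blog_id": i % 3, "comments": ["c"] * i} for i in range(9)]
counts = IncrementalCount(docs)
```

Calling `counts.update(4, new_doc)` leaves the cached results for the other batches untouched.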

And that is (more or less) the notion of Map / Reduce.

A symbol server allows you to debug into code that you don’t have on your machine by querying a symbol server and a source server for the details.

SymbolSource.org has added support for NHibernate, and you can download an unofficial build of the current trunk which can be used with the symbol server here.

Moving on, all of NHibernate’s releases will be tracked on Symbol Source, so even if you don’t have the source available, you can just hit F11 and debug into NHibernate’s source.

There was a question recently in the NHibernate mailing list from a guy wanting to learn how to write database agnostic code from the NHibernate source code.

While I suppose that this is possible, I can’t really think of a worse way to learn how to write database agnostic code than reading an OR/M’s code. The reason for that is quite simple: an OR/M isn’t just about one thing, it is about doing a lot of things and bringing them together into a single whole. Yes, most OR/Ms are database agnostic, but trying to figure out the principles of that from the code base is going to be very hard.

That leads to an even more interesting problem: it is very hard for a beginner to actually learn something useful from a professional. That is true in any field, of course. In software, the problem is that most pros would simply skip whole steps that beginners are taught are crucial. It isn’t from neglect; they are going through those steps, just not at a conscious level.

Very often, I’ll come up with a design, and it is only when I need to justify it to someone else that I actually realize the finer points of what has actually gone through my own head (another reason that having a blog is useful).

I think that there is a reason that we have names like code smells, you can immediately sense a problem in a smelly codebase, although it may take you a while to actually articulate it.

Lucene is a document indexing engine, that is its sole goal, and it does so beautifully. The interesting bit about using Lucene is that it probably wouldn’t be your main data store, but it is likely to be an important piece of your architecture.

The major shift in thinking with Lucene is that while indexing is relatively expensive, querying is free (well, not really, but you get my drift). Compare that to a relational database, where it is usually the inserts that are cheap, but queries are usually what cause us issues. RDBMS are also very good at giving us different views on top of our existing data, but the more you want from them, the more they have to do. We hit that query performance limit again. And we haven’t started talking about locking, transactions or concurrency yet.

Lucene doesn’t know how to do things you didn’t set it up to do. But what it does do, it does very fast.

Add to that the fact that in most applications, reads happen far more often than writes, and you get a different constraint system. Because queries are expensive on the RDBMS, we try to make few of them, and we try to make a single query do most of the work. That isn’t necessarily the best strategy, but it is a very common one.

With Lucene, it is cheap to query, so it makes a lot more sense to perform several individual queries and process their results together to get the final result that you need. It may require somewhat more work (although there are things like Solr that would do it for you), but it results in far faster system performance overall.

In addition to that, since the Lucene index is important, but can always be re-created from source data (it may take some time, though), it doesn’t require all the ceremony associated with DB servers. Instead of buying an expensive server, get a few cheap ones. Lucene scales easily, after all. And since you only use Lucene for indexing, your actual DB querying pattern shifts. Instead of making complex queries in the database, you make them in Lucene, and you only hit the DB with queries by primary key, which are the fastest possible way to get the data.
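The shape of that pattern can be sketched with a toy in-memory inverted index in Python. To be clear, this is not Lucene's API. The `db` dict stands in for the database, `build_index` and `search` are hypothetical helpers, and the sample rows are made up. The point is only the division of labour: the index answers the query with primary keys, and the database is hit by key alone.

```python
from collections import defaultdict

# Stand-in for the database: rows addressed by primary key only.
db = {
    1: {"title": "Raven's Map/Reduce functionality", "tags": ["raven", "nosql"]},
    2: {"title": "Lucene as a query engine", "tags": ["lucene"]},
    3: {"title": "NoSQL and Lucene", "tags": ["nosql", "lucene"]},
}

def build_index(rows):
    """Expensive step, done up front: map each term to the primary keys containing it."""
    index = defaultdict(set)
    for pk, row in rows.items():
        for term in row["title"].lower().split() + row["tags"]:
            index[term].add(pk)
    return index

def search(index, *terms):
    """Cheap step: several small lookups, combined in application code."""
    hits = [index.get(t.lower(), set()) for t in terms]
    return sorted(set.intersection(*hits)) if hits else []

index = build_index(db)
ids = search(index, "nosql", "lucene")   # the complex query goes to the index
posts = [db[pk] for pk in ids]           # the database only sees primary key lookups
```

Since the index is derived entirely from `db`, losing it costs a rebuild, not data.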

In effect, you outsourced your queries from the expensive machines to the cheap ones, and for a change, you actually got better performance overall.

One of the annoying things about the Hibernate port of the profiler was that JDBC didn’t provide us with the parameter values.

Eric has just fixed that, and the fix is now live:

Enjoy…