Trusting the benchmark

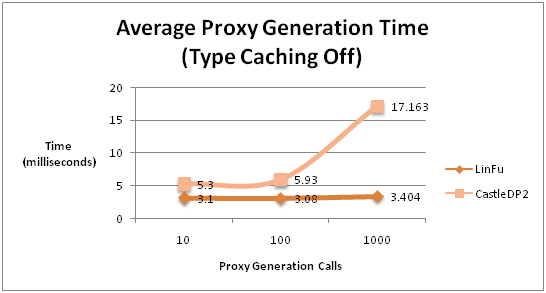

I was scanning this article when I noticed this bit: a benchmark showing superior performance to another dynamic proxy implementation.

I should mention in advance that I am impressed. I have built a dynamic proxy from scratch, and I am an active contributor to DP2 (and to DP1, when it was relevant). That is not something that you approach easily. It is hard, damn hard. And the edge cases will kill you there.

But there are two problems that I have with this benchmark. They are mostly universal for benchmarks, actually.

First, it tests an unrealistic scenario. You never use a proxy generation framework with caching turned off. Well, not unless you want out-of-memory exceptions.

Second, it tests the wrong thing. DP2's focus is not on generation efficiency (it is important, but not the main focus). The main focus is on correctness (DP2 produces verifiable code) and runtime performance. You only generate a proxy type once, which means that if you can do more work in the generation phase to make the proxy run faster at runtime, you do it.
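To make the generate-once point concrete, here is a rough sketch in Java rather than .NET, using the JDK's built-in `java.lang.reflect.Proxy` instead of DP2 or LinFu. It is an illustration of the idea, not a reproduction of either library's behavior. The names `ProxyTimingSketch`, `Greeter`, and `createProxy` are made up for this example. It separates the three costs a benchmark can end up measuring: the first proxy creation (which includes generating the proxy class), a second creation (which reuses the cached class), and the actual calls through the proxy. The timing is deliberately naive, with no warm-up and no JIT control, so treat the numbers it prints as a rough indication only.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.concurrent.atomic.AtomicLong;

public class ProxyTimingSketch {
    /** Hypothetical interface, used only for this illustration. */
    public interface Greeter {
        String greet(String name);
    }

    /** Creates a proxy that counts each call and forwards it to the target. */
    public static Greeter createProxy(Greeter target, AtomicLong calls) {
        InvocationHandler handler = (proxy, method, args) -> {
            calls.incrementAndGet();
            return method.invoke(target, args);
        };
        return (Greeter) Proxy.newProxyInstance(
                Greeter.class.getClassLoader(),
                new Class<?>[] { Greeter.class },
                handler);
    }

    public static void main(String[] args) {
        Greeter target = name -> "hello " + name;
        AtomicLong calls = new AtomicLong();

        long t0 = System.nanoTime();
        Greeter first = createProxy(target, calls);   // includes proxy class generation
        long t1 = System.nanoTime();
        Greeter second = createProxy(target, calls);  // reuses the cached proxy class
        long t2 = System.nanoTime();

        int iterations = 1_000_000;
        for (int i = 0; i < iterations; i++) {
            first.greet("world");                     // the cost paid on every call
        }
        long t3 = System.nanoTime();

        System.out.println("same proxy class: " + (first.getClass() == second.getClass()));
        System.out.println("first creation (ns):  " + (t1 - t0));
        System.out.println("second creation (ns): " + (t2 - t1));
        System.out.println("avg call (ns):        " + (t3 - t2) / (double) iterations);
        System.out.println("calls intercepted:    " + calls.get());
    }
}
```

The JDK caches the generated proxy class per class loader and interface set, so both proxies share one class. A benchmark that turns caching off measures only the first of the three numbers, which is the one a long-running application pays least often.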

Again, it is entirely possible that the LinFu proxies are faster at runtime as well, but I have no idea, and I don't intend to test that. Benchmarks are meaningless most of the time. You need to test for performance yourself, in your own scenario.

Comments

Any benchmarking of such a small component of a system in isolation is fairly futile beyond checking you don't have bottlenecks or concurrency issues.

Benchmarking for real performance should be done on a real implementation, and then the offending components can be dealt with in situ, which is when IoC becomes so powerful.

Thanks for the comments, Ayende. Actually I'm more impressed with what you did with Castle DP2--I don't think I would have had the patience to generate an entire AST to abstract myself away from generating the IL myself--and believe me, that takes far more patience than just having to deal with the IL directly. So for that, my hat definitely goes off to you and the rest of the Castle team. bow

For me, however, it seemed to be a bit of overkill to create an entire abstraction layer over generating IL, especially since the only purpose of the code generation is to forward those calls back to an interceptor. While I understand that IL certainly has a steep learning curve and is difficult to generate, I still have to question whether or not having all that AST code is actually necessary.

Based on what I've seen in Castle DP2, there seems to be an underlying assumption here that by writing an abstraction layer over the IL, you immediately protect yourself from generating unverifiable (or worse, invalid) IL code--all at the expense of simplicity.

In other words, instead of spending all that time coding that whole AST, wouldn't it have been easier just to become proficient with IL and write only the code that you need? Do you really need to invent a second language (the AST) within your favorite language just to generate another one (IL)? Do you realize that you're using two languages to generate one (C# + AST = IL)? Whatever happened to just generating IL?

I'm a big fan of Castle, but it seems like you guys invented a fifth wheel since you didn't want to get your hands dirty in IL.

Philip,

One of the goals of DP2 is that it will be possible to work with it without being aware of all the details of IL generation.

We are a diverse group, and not everyone can read IL. Having the AST means that people can work with it in a much simpler way.

It also means that it is simple to add new features or fix bugs.

Above all, it means that the AST makes the code much more maintainable.

Hi Ayende,

I'm interested in redoing the benchmark to time the actual proxy-to-target method calls for each library, but I can't seem to figure out how to persist the proxies that Castle generates to disk. I want to compare the IL generated by each library, but I can't do that unless I can get it to save the AssemblyBuilder to disk. Do you know how to do this? I'm not very familiar with Castle.DynamicProxy2's object model, so if you could help me, I'd certainly appreciate it. Thanks!

You need to use PersistentProxyBuilder for that.