Integer compression: Porting simdcomp to C#

In the code of the simdcomp library there is a 25KLOC file that does evil things to SIMD registers to get bit packing to work. When I looked at the code the first few dozen times, I had a strong desire to run away screaming. Luckily, this isn’t just some pile of complicated code, but a well-thought-out set of functions that are meant to provide optimal code for specific tasks. In particular, the code is specialized for each bit width that you may want to bit pack (0 .. 32). Even better, no one actually sat down to write it out by hand; there is a Python script that generates the code.

The first step was to understand what exactly is going on in the code, and then see how we can translate that to C#. Even just a few years ago, that would have been either an impossible dream or required the use of a native library (with the associated PInvoke overhead). However, .NET today has very robust intrinsics support, and vectors / SIMD instructions are natively supported.

I actually had a tougher challenge, since I want this code to run on both x64 and ARM64 instances. The original code is x64 only, of course. The nice thing about SIMD support in .NET is that most of the work can be done in a cross-platform manner, with the JIT deciding which instructions will actually be emitted. There is still a very strong correlation between the vectorized code and the instructions that are emitted, which means that I get both great control over what is going on and the appropriate abstraction. I was actually able to implement the whole thing without dropping to architecture-specific code, which makes me very happy.

Before we get any deeper, let’s take a simple challenge. We want to take an array of 32 integers and pack each one of them in 4 bits into an array of 16 bytes. Here is what the code will look like:

This is a bit dense, but let’s see if I can explain what is going on here.

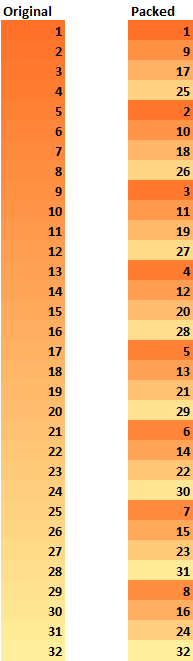

We load a vector (4 items) from the array at offsets 0, 4, 8, 12, 16, 20, 24, and 28. We shift each of those vectors by the required offset and OR all of them together. Note that this means that the first item’s four bits go in the first nibble, but the second item’s bits go in the ninth nibble (the first nibble of the second 32-bit lane), etc.
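To make the description above concrete, here is a minimal sketch of that packing step. It is not the code from the library or from this series; the names `NibblePacker`, `Pack4`, `Load`, and `Read4` are made up for illustration, and it assumes .NET 7 or later (for the `Vector128<T>` operators) and a little-endian machine.

```csharp
using System;
using System.Runtime.Intrinsics;

// Hypothetical helper type, for illustration only.
public static class NibblePacker
{
    // Loads 4 consecutive uints starting at 'offset' into one 128-bit vector.
    private static Vector128<uint> Load(ReadOnlySpan<uint> input, int offset)
        => Vector128.Create<uint>(input.Slice(offset, 4));

    // Packs 32 values (only the low 4 bits of each are kept) into 16 bytes.
    public static void Pack4(ReadOnlySpan<uint> input, Span<byte> output)
    {
        if (input.Length < 32) throw new ArgumentException("Need 32 values.", nameof(input));
        if (output.Length < 16) throw new ArgumentException("Need 16 bytes.", nameof(output));

        Vector128<uint> mask = Vector128.Create(0xFu); // low nibble of every lane

        // Eight loads, each masked and shifted to its own nibble, then ORed together.
        Vector128<uint> packed =
              (Load(input, 0) & mask)
            | ((Load(input, 4) & mask) << 4)
            | ((Load(input, 8) & mask) << 8)
            | ((Load(input, 12) & mask) << 12)
            | ((Load(input, 16) & mask) << 16)
            | ((Load(input, 20) & mask) << 20)
            | ((Load(input, 24) & mask) << 24)
            | ((Load(input, 28) & mask) << 28);

        packed.AsByte().CopyTo(output);
    }

    // Scalar read-back that spells out the layout: item k lives in
    // 32-bit lane (k % 4), at nibble (k / 4) inside that lane.
    // Assumes little-endian byte order.
    public static uint Read4(ReadOnlySpan<byte> packed, int index)
    {
        int lane = index % 4;
        int nibble = index / 4;
        int b = packed[lane * 4 + nibble / 2];
        return (uint)(nibble % 2 == 0 ? b & 0xF : b >> 4);
    }
}
```

The `Read4` helper is only there to show where each item ends up. Items 0 and 4 share the first output byte, while item 1 starts the second 32-bit lane, four bytes in.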

The idea is that we are operating on 4 items at a time, reducing the total number of operations we have to perform. It may be easier to understand if you see those changes visually:

What is happening here, however, is that we are able to do this transformation in very compact code. That isn’t just a matter of the high-level code; let’s take a look at the assembly instructions that this generates:

I’m going to assume that you aren’t well versed in assembly, so let’s explain what is going on. This code contains zero branches: it does four reads from memory, masks the elements, shifts them, and ORs them together.

The relevant instructions are:

- vmovupd – read 4 integers to the register

- vpand – binary and with a value (masking)

- vpslld – shift to the left

- vpor – binary or

- vmovdqu – write 16 bytes to memory

There are no branches, nothing to complicate the code at all. This is about as tight as you can get, at the level of machine instructions.

Of particular interest here is the C# code. The entire thing is basically a couple of lines of code, and I could express the whole thing as a single expression in a readable manner. Let’s look at the C code, to compare what is going on:

Note that both should generate the same machine code, but being able to rely on operator overloading means that I can now get far more readable code.
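The same contrast can be seen without leaving C#. The sketch below writes a single mask-shift-OR step twice: once with the overloaded operators on `Vector128<uint>`, and once with the x86-specific `Sse2` methods, which read much like the C intrinsics. The type and method names (`StyleComparison`, `StepWithOperators`, `StepWithSse2`) are hypothetical, and the fixed shift of 4 is only an example.

```csharp
using System;
using System.Runtime.Intrinsics;
using System.Runtime.Intrinsics.X86;

// Hypothetical comparison of the two styles, for illustration only.
public static class StyleComparison
{
    // Operator style: portable, the JIT picks the instructions per platform.
    public static Vector128<uint> StepWithOperators(
        Vector128<uint> acc, Vector128<uint> v, Vector128<uint> mask)
        => acc | ((v & mask) << 4);

    // Intrinsic style: same computation, but tied to x86/x64 hardware.
    public static Vector128<uint> StepWithSse2(
        Vector128<uint> acc, Vector128<uint> v, Vector128<uint> mask)
    {
        if (!Sse2.IsSupported)
            throw new PlatformNotSupportedException("SSE2 is not available.");

        return Sse2.Or(acc, Sse2.ShiftLeftLogical(Sse2.And(v, mask), 4));
    }
}
```

Both methods compute the same value on x64. Only the operator version would also run on ARM64, which is the reason to prefer it when the code has to run on both platforms.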

From that point, the remaining task was to re-write the Python script so it would generate C# code, not C. In the next post I’m going to be talking more about the constraints we have and what we are actually trying to solve with this approach.

More posts in "Integer compression" series:

- (21 Jun 2023) FastPFor in C#, results

- (20 Jun 2023) Implementing FastPFor decoding in C#

- (19 Jun 2023) Implementing FastPFor encoding in C#

- (16 Jun 2023) Adapting FastPFor to RavenDB

- (15 Jun 2023) Porting simdcomp to C#

- (14 Jun 2023) The FastPFor code

- (13 Jun 2023) Understanding FastPFor

- (12 Jun 2023) SIMD bit packing and unusual usages

- (08 Jun 2023) Using SIMD bit packing in practice

- (07 Jun 2023) Understanding Simd Compression by Lemire

- (06 Jun 2023) delta encoding + variable size integers
