The bare minimum a distributed system developer should know about: Binding to IP addresses

It is easy to think about a service that listens to the network as just that: it listens to the network. In practice, this is often quite a bit more complex than that.

For example, what happens when I’m doing something like this?

In this case, we are setting up a web server bound to the local machine name. But that isn’t actually how it works.

At the TCP level, there is no such thing as a machine name. So how can this even work?

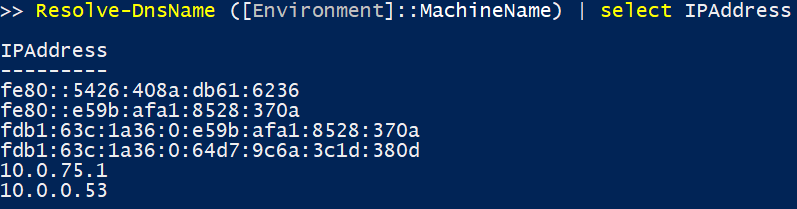

Here is what is going on. When we specify a server URL in this manner, we are actually doing something like this:

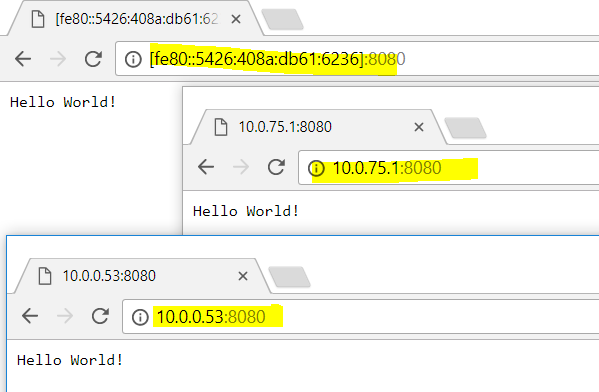

And then the server is going to bind to each and every one of them. Here is an interesting tidbit:

What this means is that this service doesn’t have a single entry point; you can reach it through multiple distinct IP addresses.
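To make the resolve-then-bind step concrete, here is a minimal sketch in Python. It is not the code from the original post; the helper names `resolve_bind_addresses` and `bind_all` are made up for illustration, and it assumes the host name resolves through the operating system's normal resolver.

```python
import socket

def resolve_bind_addresses(hostname, port=0):
    """Return the distinct (family, sockaddr) pairs a host name resolves to."""
    infos = socket.getaddrinfo(hostname, port, type=socket.SOCK_STREAM)
    distinct = []
    for family, _type, _proto, _canonname, sockaddr in infos:
        if (family, sockaddr) not in distinct:
            distinct.append((family, sockaddr))
    return distinct

def bind_all(hostname, port=0):
    """Open one listening socket per resolved address (port 0 lets the OS pick)."""
    listeners = []
    for family, sockaddr in resolve_bind_addresses(hostname, port):
        sock = socket.socket(family, socket.SOCK_STREAM)
        try:
            sock.bind(sockaddr)
            sock.listen()
        except OSError:
            # Some resolved addresses cannot be bound on this machine; skip them.
            sock.close()
            continue
        listeners.append(sock)
    return listeners

if __name__ == "__main__":
    # The machine name is only a lookup key; the sockets bind to IP addresses.
    for listener in bind_all(socket.gethostname()):
        print("listening on", listener.getsockname())
        listener.close()
```

Each socket that comes back is a separate entry point into the same service, which is why a client can reach it through any of those addresses.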

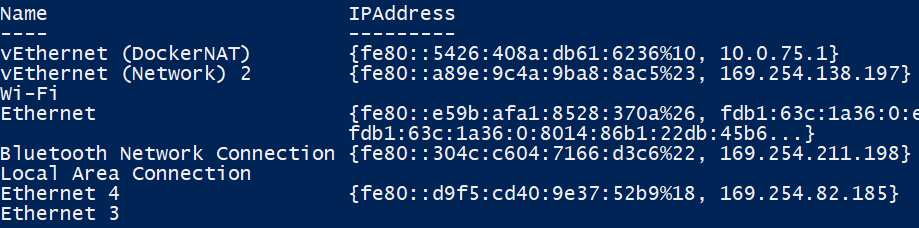

But why would my machine have so many IP addresses? Well, let us take a look. It looks like this machine has quite a few network adapters:

I got a bunch of virtual ones for Docker and VMs, and then the Wi-Fi (writing on my laptop) and wired network.

Each one of these represents a way to bind to the network. In fact, there are also over 16 million additional IP addresses that I’m not showing: the entire 127.x.x.x range. (You probably know that 127.0.0.1 is loopback, right? But so is 127.127.127.127, etc.)
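The "over 16 million" figure is easy to check with Python's standard `ipaddress` module. This is just a quick sanity check of the arithmetic, not something from the original post; the address 128.0.0.1 is included only as a counterexample outside the range.

```python
import ipaddress

# The whole 127.x.x.x range is a /8: 24 free bits, so 2**24 addresses.
loopback = ipaddress.ip_network("127.0.0.0/8")
print(loopback.num_addresses)  # 16777216

for candidate in ("127.0.0.1", "127.127.127.127", "128.0.0.1"):
    # is_loopback is True for anything inside 127.0.0.0/8.
    print(candidate, ipaddress.ip_address(candidate).is_loopback)
```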

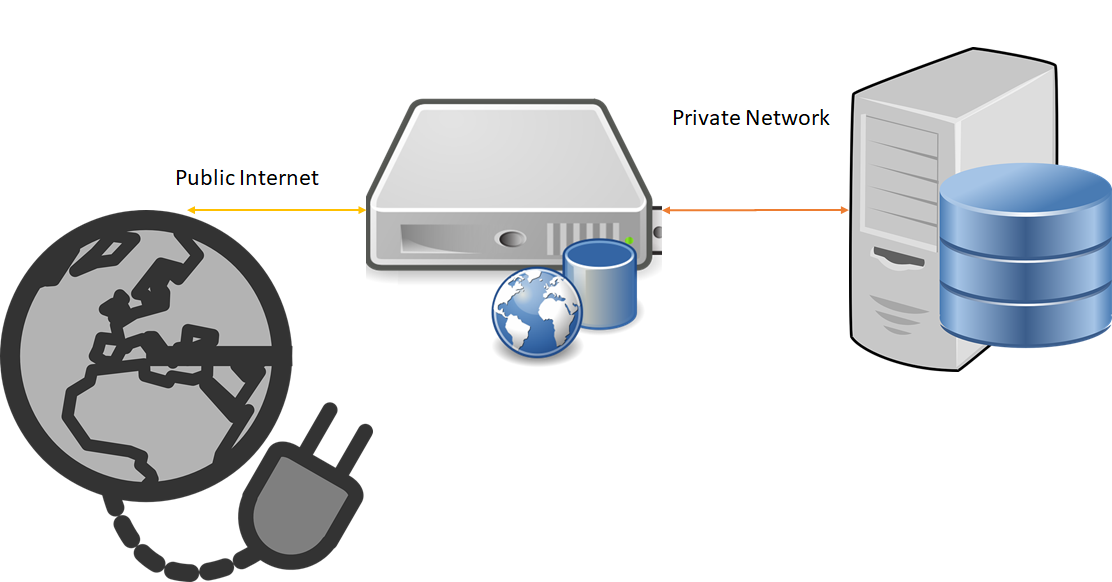

All of this is not really that interesting, until you realize that this has real world implications for you. Consider a server that has multiple network cards, such as this one:

What we have here is a server that has two totally separate network cards. One to talk to the outside world and one to talk to the internal network.

When is this useful? In pretty much every single cloud provider you’ll have very different networks. On Amazon, the internal network gives you effectively free bandwidth, while you pay for the external one. And that is leaving aside the security implications.

It is also common to have different things bound to different interfaces. Your admin API endpoint isn’t even listening to the public internet, for example; it will only process packets coming from the internal network. That adds a bit more security and isolation (you still need encryption, authentication, etc., of course).
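Here is a rough sketch of what binding different things to different interfaces can look like, again in Python. Everything named here is an assumption for illustration: `listen_on` and `start_listeners` are hypothetical helpers, and `127.0.0.1` stands in for the internal network card's address (on a real server it would be whatever private address that card holds).

```python
import socket

# Hypothetical bind addresses, chosen only for this example.
PUBLIC_BIND = "0.0.0.0"      # every IPv4 interface, including the public one
INTERNAL_BIND = "127.0.0.1"  # stand-in for the internal card's address

def listen_on(address, port=0):
    """Create a TCP listener bound to one specific local address."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((address, port))  # port 0 lets the OS pick a free port
    sock.listen()
    return sock

def start_listeners():
    """Return (public_api, admin_api) listeners on their respective addresses."""
    public_api = listen_on(PUBLIC_BIND)
    admin_api = listen_on(INTERNAL_BIND)
    return public_api, admin_api

if __name__ == "__main__":
    public_api, admin_api = start_listeners()
    print("public API on", public_api.getsockname())
    print("admin API on", admin_api.getsockname())
    public_api.close()
    admin_api.close()
```

The admin listener only accepts connections addressed to the IP it was bound to, so traffic arriving on another interface for that port does not reach it.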

Another deployment mode (which has gone out of fashion) was to hook both network cards to the public internet, using different routes. This way, if one went down, you could still respond to requests, and usually you could also handle more traffic. This was in the days when the network was often the bottleneck. Nowadays I think we have enough network bandwidth that program efficiency matters more, and this practice has somewhat fallen out of favor.

More posts in "The bare minimum a distributed system developer should know about" series:

- (20 Nov 2017) Binding to IP addresses

- (15 Nov 2017) HTTPS Negotiation

- (06 Nov 2017) DNS

- (03 Nov 2017) Certificates

- (01 Nov 2017) Transport level security

- (31 Oct 2017) networking
