RavenDB on Raspberry PI

This has been a dream of mine for quite some time, to be able to run RavenDB on the Raspberry PI. The Raspberry PI is a great toy to play with, maybe do some home automation or stream some videos to your TV. But while that is cool, what I really wanted to see is if we could run RavenDB on top of the Raspberry PI, and when we do that, what kind of behavior we’ll see.

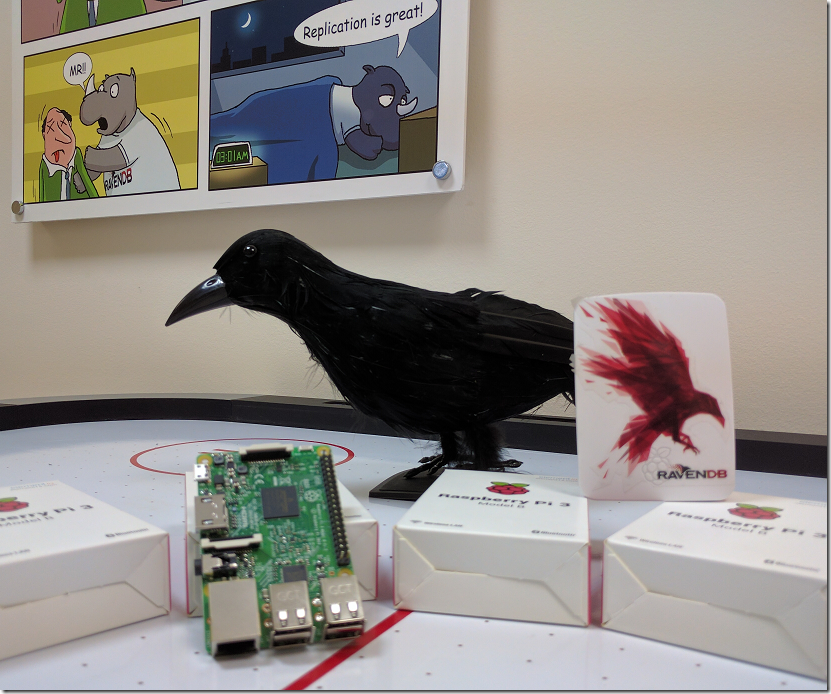

We got it working, and we are so excited that we are raffling off a brand new Raspberry PI, in its role as a RavenDB 4.0 server.

The first step along the way, running on Linux, was achieved over a year and a half ago. But we couldn’t just run on the Raspberry PI: it is an ARM architecture, and we needed the CoreCLR ported there first. Luckily for us, quite a few people are also interested in running .NET on ARM, and we have been tracking the progress of that project for over a year, trying to see if anyone would ever get to the hello world moment.

Over the past month, it looked like things had settled: the number of failing tests dropped to close to zero, and we decided that it was time to take another stab at running RavenDB on the Raspberry PI.

Actually getting there wasn’t trivial. Unlike the usual way of using the CoreCLR, we don’t have the dotnet executable to manage things for us; we are directly invoking corerun, and getting everything just right for this was… finicky. For example, trying to compile the CoreCLR on the Raspberry PI resulted in the Raspberry PI experiencing a heat death that caused no little excitement at the office.

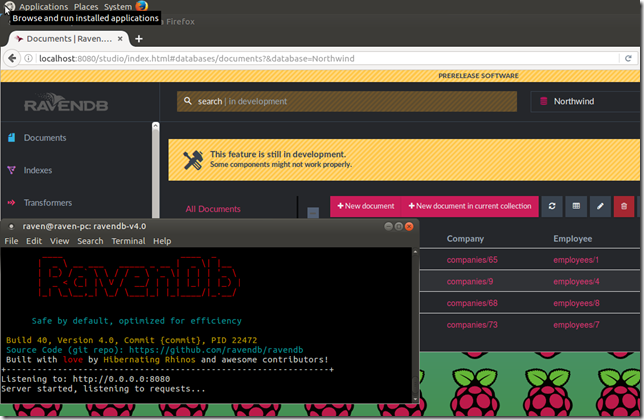

RavenDB is running on that using CoreCLR (and quite a bit of sweat) on top of an Ubuntu 16.04 system. To my knowledge, RavenDB is the first significant .NET project that has managed to run on the Pi. As in, the first thing beyond a simple hello world.

Naturally, once we got it working, we had to put it through its paces and see what we can get out of this adorable little machine.

The current numbers are pretty good, but on the other hand, we can’t currently compile with -O3, so that limits us to -O1. We also just grabbed a snapshot of our code to test it, without actually taking the time to profile and optimize for that machine.

With all of those caveats, allow me to share what is probably the best part of it:

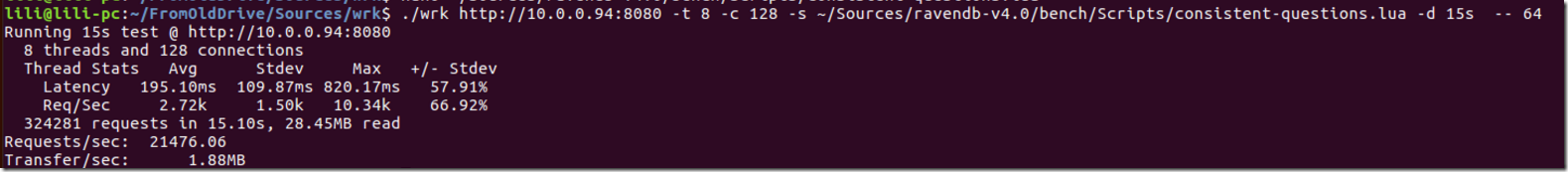

This is a benchmark from a different Ubuntu machine to the Raspberry PI, asking to get a whole bunch of documents.

This is processing requests at a rate of 21,476 req / sec. That is a pretty awesome number.

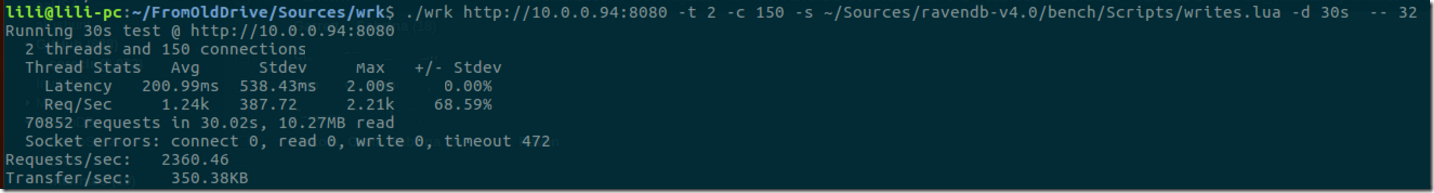

How about writes?

So that is 2,360 writes / sec.
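To put those two figures side by side, here is a minimal sketch of the arithmetic in Python. Only the two throughput numbers come from the benchmarks above; the function names are made up for illustration. Note that this gives the average time between completed operations, not the latency of a single request, since the benchmark most likely ran many requests concurrently.

```python
# Throughput figures quoted in this post; everything else is illustrative.
READS_PER_SEC = 21_476
WRITES_PER_SEC = 2_360


def mean_interval_us(ops_per_sec: float) -> float:
    """Average time between completed operations, in microseconds.

    This is NOT per-request latency: with concurrent requests in flight,
    each individual request can take far longer than this interval.
    """
    return 1_000_000 / ops_per_sec


def throughput_ratio(reads_per_sec: float, writes_per_sec: float) -> float:
    """How many reads complete for every write that completes."""
    return reads_per_sec / writes_per_sec


if __name__ == "__main__":
    print(f"reads:  one every {mean_interval_us(READS_PER_SEC):.2f} us")
    print(f"writes: one every {mean_interval_us(WRITES_PER_SEC):.2f} us")
    print(f"ratio:  {throughput_ratio(READS_PER_SEC, WRITES_PER_SEC):.1f} reads per write")
```

In other words, the Pi finishes a read roughly every 47 microseconds and a write roughly every 424 microseconds, about nine reads for every write.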

Our next project is setting up a cluster of those; you can see that we have already started the work…

We’ll be posting a lot more about what you can now do with this in the next few days.

Comments

Wow! This is very impressive, not only because of the PI, but also because it opens a new door to running large clusters of databases on another architecture!

If ARM continues to improve in the future, maybe there is a chance to get large-scale multi-core machines with a better IOPS / $ rate.

fantastic news and very exciting. still can't believe you did it.

Uri,

We are currently running a cluster of them together, replicating from one another. It is going to make it very simple to test cluster stuff, when the entire cluster fits into your lap :-)

wouldn't testing a cluster of aws t2.nano instances be somewhat more applicable to real life implementations?

That is freaking awesome Oren! Freaking. Awesome!!

Peter,

t2.nano is actually quite a bit weaker than the Pi3.

But there is a lot to be said for being able to physically play with the hardware. And it makes for some pretty awesome demos.

Good work, man!

wow that's cool.

Were you not 4 years late to the party? https://twitter.com/gregyoung/status/281145652652691456

Daniel,

We never ran on Mono, because that was unworkable and crashed when you breathed near it.

So, would it run it on a scaleway.com BareMetal ARM-Server? :)

Would be freakin awesome!

Sven,

I don't see any reason why not.
