Benchmarking with Go

Every now and then, you really need to get out of your comfort zone. I decided to play a bit with Go, which I haven’t done yet. Oh, I have read Go code, quite a lot of it, but that isn’t the same as writing it and actually using it.

We have been doing a lot of performance work recently, and while some of that is based entirely on micro benchmarks and focused on low level details such as the number of retired instructions at the CPU level, we also need to see the impact of larger changes. We have been using WRK to do that, but it is hard to get it running on Windows, and we had to do some nasty things in Lua scripting to get what we wanted.

I decided that I’ll take the GoBench tool and transform it into a dedicated tool for benchmarking RavenDB.

Here is what we want to test:

- Read document by id

- Query documents by index

- Query map/reduce results

- Write new documents (single document)

- Write new documents (multiple documents in tx)

- Update existing documents

This is intended to be a RavenDB tool, so we won’t be trying to do anything generic. We’ll be writing specialized code.

In terms of the interface, gobench uses command line parameters to control itself, but I thought that I’d pass a configuration file instead. I started to write about the format of the configuration file when I realized that I was being absolutely stupid.

I don’t need to do that. I already have a good way to specify what I want to do. It is called the code. The actual code to run the HTTP requests is here. But this is basically just getting a configuration object and using it to generate requests.

Of far more interest to me is the code that actually generates the requests themselves. Here is the piece that tests read requests:

We just spin off a number of goroutines, each of which does a portion of the work. This gives us concurrent clients and the ability to hammer the server. And the amount of code that we need to write for this is minimal.

To compare, here is the code for writing to the database:

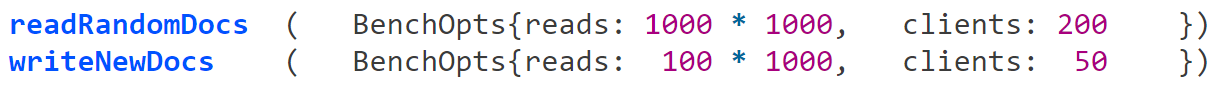

And then we are left with just deciding on a particular benchmark configuration. For example, here is us running a simple load test for both reads and writes.

I think that this strikes a good balance: low overhead for configuration, readable code and a high degree of flexibility.
