The cost of dog fooding

You might have noticed that there were a few errors on the blog recently.

That is related to a testing strategy that we employ for RavenDB.

In particular, part of our test scenario is to shove a RavenDB build onto our own production system, to see how it behaves live, with real workloads.

Our production systems run entirely on RavenDB, and we have been playing with all sorts of configuration and deployment options recently. The general idea is to get a good indication of RavenDB’s performance and to make sure that we don’t have any performance regressions.

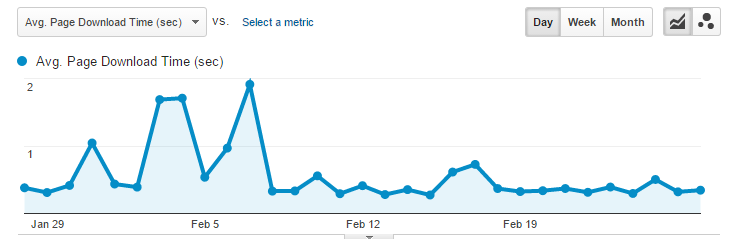

Here is an example taken from our tracking system, for this blog:

You can see that we had a few periods with longer than usual response times. The actual reason was that we had some code throwing a tremendous amount of work at RavenDB, so what the blog exhibits here is noisy neighbor syndrome (that is, the blog itself is behaving fine, but the machine it is on is very busy). That pointed us to a possible optimization and to some better internal metrics.

At any rate, the downside of living on the bleeding edge in production is that we sometimes get a lemon build.

That is the cost of dog fooding: sometimes you need to clean up the results.

Comments

Mister Ayende your customer and readers are not dogs!

Mario,

Do you know what the term dog fooding means?

See

https://en.wikipedia.org/wiki/Eating_your_own_dog_food

More dog food :-)

The above link is not working because of under_scores disappears in the URL.

Duplicate:

02 Mar 2016

10:33 AM Carsten Hansen

More dog food :-)

The above link is not working because of under_scores disappears in the URL.

Carsten Hansen 02 Mar 2016

12:33 PM Carsten Hansen

More dog food :-)

The above link is not working because of under_scores disappears in the URL.

Another possible error:

FUTURE POSTS

Rate limiting fun - less than a minute from now

Was in the top-right corner while I was reading the "Rate limiting fun". The clock was at least 11:03.

First time I noticed it said "less than 3 minutes from now" and the clock was after 11:00 and I was already reading the post of today.

What tracking system do you use?

Tanga,

Zabbix and Google Analytics
