I can a lot about the performance of RavenDB, a you might have noticed from this blog. A few years ago we had a major architecture shift that increased our performance by a factor of ten, which was awesome. But with the meal, you get appetite, and we are always looking for better performance.

One of the things we did with RavenDB is build things so we’ll have the seams in place to change the internal behavior without users noticing how things are working behind the scenes. We have used this capability a bunch of time to improve the performance of RavenDB. This post if about one such scenario that we recently implemented and will go into RavenDB 5.0.

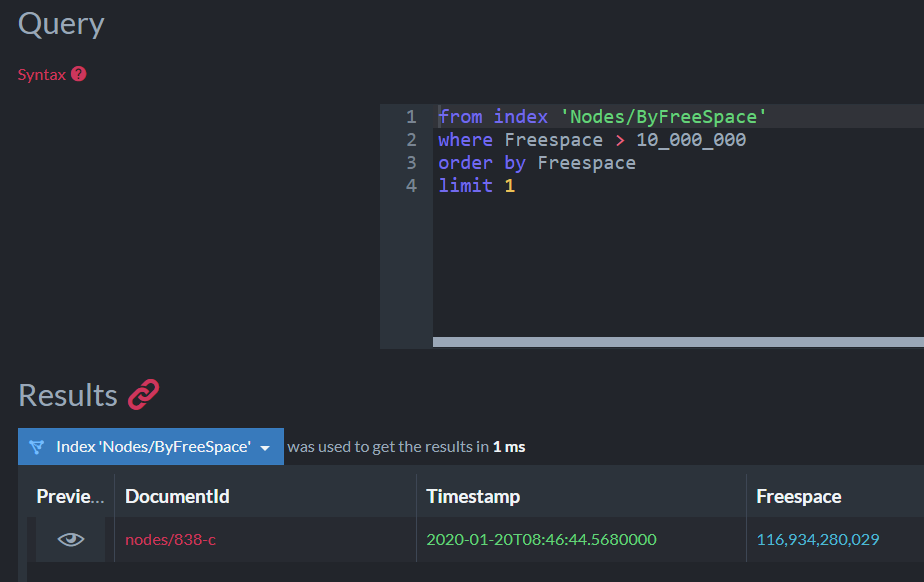

Consider the following query:

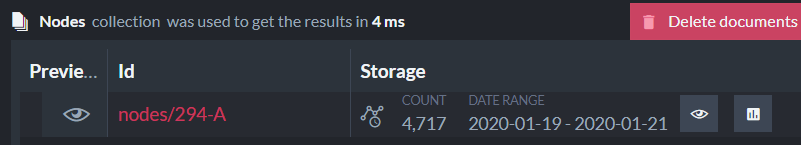

As you can see, we are doing a range based query on a date field. Now, the source collection in this case has just over 5.9 million entries and there are a lot of unique elements in the specified range. Let’s consider how RavenDB will handle this query in version 4.2:

- First, find all the unique CreatedAt values between those ranges (there can be tens to hundreds of thousands).

- Then, for each one of those unique values, find all the match documents (usually, only one).

This is expensive and the problem almost always shows up when doing date range queries over non trivial ranges because that combine the two elements of many unique terms and very few results per term.

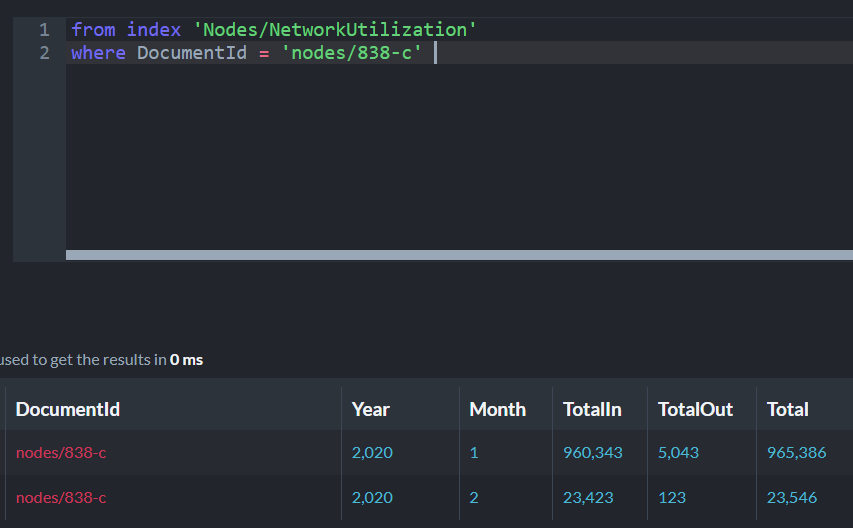

The general recommendation was to avoid running the query above and instead use:

This allows RavenDB to use a different method for range query, based on numeric values, not distinct string values. The performance different is huge.

But the second query is ugly and far less readable. I don’t like such a solution, even if it can serve as a temporary workaround. Because of that, we implemented a better system in RavenDB 5.0. Behind the scenes, RavenDB now translate the first query into the second one. You don’t have to do anything to make it happen (when migrating from 4.2 instances, you’ll need to reset the index to get this behavior). You just use dates as you would normally expect them to be used and RavenDB will do the right thing and optimize it for you.

To give you a sense of the different in performance, the query above on a data set of 5.9 million records will have the following performance:

- RavenDB 4.2 - 7,523 ms

- RavenDB 5.0 – 134 ms

As you can imagine, I’m really happy about this kind of performance boost.