High performance .NET: Building a Redis Clone–separation of computation & I/O

After achieving 1.25 million ops/sec, I decided to see what would happen if I changed the code to support pipelining. That ended up being quite involved, because I needed to both keep track of all the incoming work and send that work to multiple locations. The code itself is garbage, in my opinion. It is worth it only as far as it points me in the right direction in terms of the overall architecture. You can read it below, but it is a bit complex. We read as much as we can from the client, then hand the commands off to the dedicated threads that run them.
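To make the shape of that split easier to follow, here is a minimal sketch of the idea. It is not the code from this post. The `Command`, `Shard` and `Dispatcher` types are hypothetical names, the choice of `BlockingCollection` and `TaskCompletionSource` is my assumption, and only SET and GET are handled. The I/O side parses everything it got from one read into a batch, routes each command by key to a dedicated thread, and collects the replies in request order.

```csharp
#nullable enable
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

// One parsed command plus a slot that the worker fills with the reply.
public sealed class Command
{
    public Command(string name, string key, string? value = null)
    {
        Name = name;
        Key = key;
        Value = value;
    }

    public string Name { get; }
    public string Key { get; }
    public string? Value { get; }

    public TaskCompletionSource<string?> Reply { get; } =
        new TaskCompletionSource<string?>(TaskCreationOptions.RunContinuationsAsynchronously);
}

// A slice of the key space owned by one dedicated thread, so the
// dictionary itself needs no locking.
public sealed class Shard
{
    private readonly Dictionary<string, string> _data = new Dictionary<string, string>();
    private readonly BlockingCollection<Command> _queue = new BlockingCollection<Command>();

    public Shard(int id)
    {
        var thread = new Thread(Run) { IsBackground = true, Name = "shard-" + id };
        thread.Start();
    }

    public void Enqueue(Command command) => _queue.Add(command);

    private void Run()
    {
        // Blocks while the queue is empty; commands run in arrival order.
        foreach (Command command in _queue.GetConsumingEnumerable())
        {
            if (command.Name == "SET")
            {
                _data[command.Key] = command.Value ?? "";
                command.Reply.SetResult("OK");
            }
            else // this sketch treats everything else as GET
            {
                _data.TryGetValue(command.Key, out string? value);
                command.Reply.SetResult(value);
            }
        }
    }
}

// The I/O side: takes a batch of pipelined commands and fans it out.
public sealed class Dispatcher
{
    private readonly Shard[] _shards;

    public Dispatcher(int shardCount)
    {
        _shards = new Shard[shardCount];
        for (int i = 0; i < shardCount; i++)
            _shards[i] = new Shard(i);
    }

    public async Task<string?[]> ExecuteBatchAsync(IReadOnlyList<Command> batch)
    {
        foreach (Command command in batch)
        {
            // Same key always maps to the same shard within this process,
            // so operations on one key keep their order.
            int index = (command.Key.GetHashCode() & int.MaxValue) % _shards.Length;
            _shards[index].Enqueue(command);
        }

        // Await in request order, since pipelined replies must match it.
        var replies = new string?[batch.Count];
        for (int i = 0; i < batch.Count; i++)
            replies[i] = await batch[i].Reply.Task;
        return replies;
    }
}
```

With a `Dispatcher` of four shards, a batch of `SET a 1`, `GET a`, `GET missing` comes back as `"OK"`, `"1"` and `null`, in that order. Note that every command pays for a queue handoff and a task completion, which is exactly the sort of cost this kind of structure makes visible in a profiler.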

In terms of performance, it is actually slower than the previous iteration (by about 20%!), but it serves a very important purpose: it makes it easy to tell where the costs are.

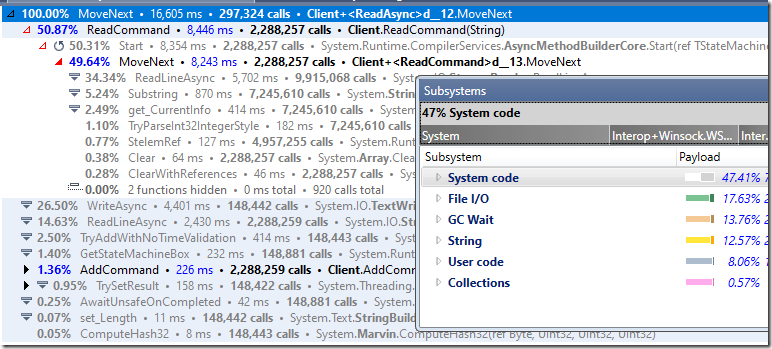

Take a look at the following profiler result:

You can see that we are spending a lot of time in I/O and in string processing. The GC time is also quite significant.

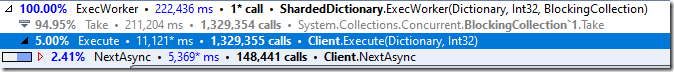

Conversely, when we actually process the commands from the clients, we are spending most of the time simply idling.

I want to tackle this in stages. The first part is to stop using strings all over the place. The next stage after that will likely be to change the I/O model.
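As a rough illustration of what "not using strings" can look like in .NET, here is a small sketch that works on the raw bytes through `ReadOnlySpan<byte>`. This is an assumption about the general technique, not the approach the series ends up taking. `ByteCommands` is a hypothetical helper, and the space-separated line it reads is a simplification, not the actual Redis wire protocol.

```csharp
#nullable enable
using System;
using System.Text;

public static class ByteCommands
{
    // Command names encoded once, so comparisons never allocate.
    private static readonly byte[] Get = Encoding.ASCII.GetBytes("GET");
    private static readonly byte[] Set = Encoding.ASCII.GetBytes("SET");

    // Returns the bytes up to the first space and advances 'line' past it.
    // Both results are views over the original buffer; nothing is copied.
    public static ReadOnlySpan<byte> ReadToken(ref ReadOnlySpan<byte> line)
    {
        int space = line.IndexOf((byte)' ');
        if (space < 0)
        {
            ReadOnlySpan<byte> last = line;
            line = ReadOnlySpan<byte>.Empty;
            return last;
        }

        ReadOnlySpan<byte> token = line.Slice(0, space);
        line = line.Slice(space + 1);
        return token;
    }

    public static bool IsGet(ReadOnlySpan<byte> token) => token.SequenceEqual(Get);

    public static bool IsSet(ReadOnlySpan<byte> token) => token.SequenceEqual(Set);
}
```

Reading `SET key value` this way yields three spans over the same buffer, and the command name is recognized with a byte comparison instead of a `string` allocation followed by a string compare.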

For now, here is where we stand:

More posts in "High performance .NET" series:

- (19 Jul 2022) Building a Redis Clone–Analysis II

- (27 Jun 2022) Building a Redis Clone – skipping strings

- (20 Jun 2022) Building a Redis Clone– the wrong optimization path

- (13 Jun 2022) Building a Redis Clone–separation of computation & I/O

- (10 Jun 2022) Building a Redis Clone–Architecture

- (09 Jun 2022) Building a Redis Clone–Analysis

- (08 Jun 2022) Building a Redis Clone–naively

Comments

Sorry, is there a part missing?

Christian,

There shouldn't be, but I'm not done in the series.

Ah ok, I was just confused by the rather abrupt ending and the stray "a" at the end of the post.

Christian,

Artifact of how I write the posts, sorry. Fixed now.
