Hidden pitfalls in a trivial domain model

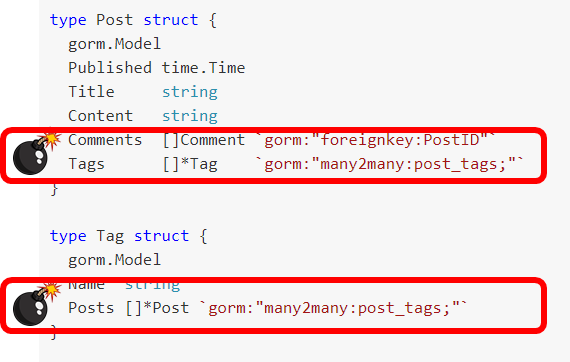

I read this post about using Object Relational Mapper in Go with great interest. I spent about a decade immersed deeply within the NHibernate codebase, and I worked on a bunch of OR/Ms in .NET and elsewhere. My first reaction when looking at this post can be summed in a single picture:

This is a really bad modeling decision, and it is a really common one when people are using an OR/M. The problem is that this kind of model fails to capture a really important aspect of the domain: its size.

Let’s consider the Post struct. We have a couple of collections there: Tags and Comments. It is reasonable to assume that you’ll never have a post with more than a few tags, but a popular post can easily have a lot of comments. Using Reddit as an example, it took me about 30 seconds to find a post that had over 30,000 comments on it.

On the other side, the Tag.Posts collection may contain many posts. The problem with such a model is that these properties are traps. If you access one that has a large number of results, loading it is going to use a lot of memory and put a lot of pressure on the database.

The good thing about Go is that it is actually really hard to play the usual tricks with lazy loading and proxies behind your back. So the GORM API, at least, is pretty explicit about what you load there. The problem with this, however, is that developers will explicitly call the “gimme the Posts” collection and it will work when tested locally (small dataset, no load on the server). It will then fail in production in a very insidious way (getting slightly slower over time until the whole thing capsizes).

I would much rather move those properties outside the entities and into standalone queries, ones that come with explicit paging that you have to take into account. That reflects the actual costs behind the operations much more closely.
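To make that concrete, here is a minimal sketch of what such a standalone query could look like. Everything in it is an assumption for illustration: the `Comment` fields, the `CommentStore` type, the `CommentsForPost` function, and the `MaxPageSize` cap are hypothetical names, not taken from the original post or from GORM. The store is an in-memory stand-in so the example runs on its own; a real implementation would push the limit and offset down into the database query instead of filtering in memory.

```go
package main

import (
	"errors"
	"fmt"
)

// Comment is a hypothetical stand-in for the comment entity.
// Note that Post would no longer carry a Comments collection at all.
type Comment struct {
	ID     int
	PostID int
	Body   string
}

// MaxPageSize is an assumed upper bound on how much one call may load.
const MaxPageSize = 100

// Page is one bounded slice of results, plus what the caller needs
// in order to decide whether to ask for more.
type Page struct {
	Items   []Comment
	Total   int
	HasMore bool
}

// CommentStore is a hypothetical in-memory store used only for illustration.
type CommentStore struct {
	comments []Comment
}

// CommentsForPost returns at most pageSize comments for the given post.
// The caller cannot ask for "everything": paging is part of the signature.
func (s *CommentStore) CommentsForPost(postID, page, pageSize int) (Page, error) {
	if page < 0 || pageSize <= 0 || pageSize > MaxPageSize {
		return Page{}, errors.New("invalid paging arguments")
	}

	// In-memory filter as a stand-in for a WHERE clause in a real query.
	var matching []Comment
	for _, c := range s.comments {
		if c.PostID == postID {
			matching = append(matching, c)
		}
	}

	// Clamp the window to the available results.
	start := page * pageSize
	if start > len(matching) {
		start = len(matching)
	}
	end := start + pageSize
	if end > len(matching) {
		end = len(matching)
	}

	return Page{
		Items:   matching[start:end],
		Total:   len(matching),
		HasMore: end < len(matching),
	}, nil
}

func main() {
	store := &CommentStore{}
	for i := 0; i < 250; i++ {
		store.comments = append(store.comments, Comment{ID: i, PostID: 1, Body: "hello"})
	}

	// Third page (zero-based) of 100 comments: only the last 50 remain.
	p, err := store.CommentsForPost(1, 2, 100)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(len(p.Items), p.Total, p.HasMore)
}
```

The point of the design is that the cost is visible at the call site: you cannot get the comments for a post without stating how many you want, and a request for more than the cap is rejected instead of quietly loading 30,000 rows.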
