Why IOPS matter for the database

You knew that this had to come. After talking about memory and CPU so often, we need to talk about actual I/O.

Did I mention that the cluster was set up by a drunk monkey? That term was raised in the office today, and we had a bunch of people fighting over who was the monkey. So to clarify things, here is the monkey:

If you have any issues with this being a drunk monkey, you are likely drunk as well. How about you set up a cluster that we can test things on?

At any rate, after setting things up and pushing the cluster, we started seeing some odd behaviors. It looked like the cluster was… acting funny.

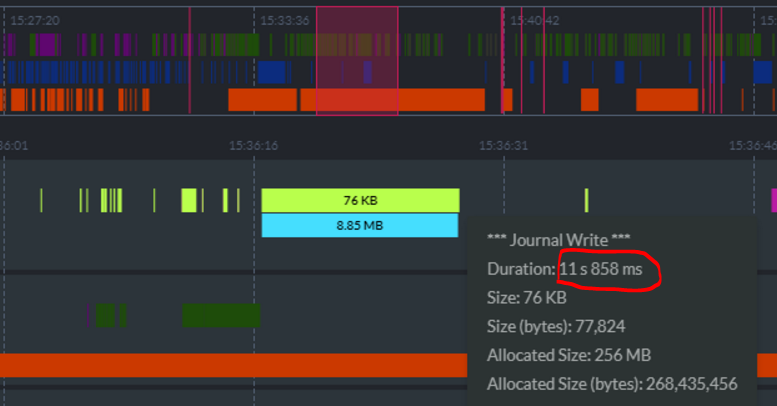

One of the things we built into RavenDB is the ability to inspect its state easily. You can see it in the image on the right. In particular, you can see that we have a journal write taking 12 seconds to run.

It is writing 76 KB to disk, at a rate of about 6 KB per second. To compare, a 1984 modem would actually be faster. What is going on? As it turned out, the IOPS on the system were left in their default state, and we had fewer than 200 IOPS for the disk.
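The arithmetic behind that rate is easy to check. Here is a minimal sketch, using the 76 KB and 12 seconds from the screenshot; the function name is made up for illustration:

```python
def write_rate_kb_per_sec(size_kb: float, duration_sec: float) -> float:
    """Average throughput of a single write, in KB per second."""
    if duration_sec <= 0:
        raise ValueError("duration must be positive")
    return size_kb / duration_sec


# Numbers from the journal write in the screenshot: 76 KB taking 12 seconds.
rate = write_rate_kb_per_sec(76, 12)
print(f"{rate:.1f} KB/sec")  # prints "6.3 KB/sec"
```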

Did I mention that we are throwing production traffic and some of the biggest stuff we have on this thing? As it turns out, if you use all your IOPS burst capacity, you end up having to get your I/O through a straw.
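To get a feel for how quickly burst capacity can disappear, here is a rough sketch of a credit bucket model. Cloud providers each have their own rules for burst I/O, and this does not describe any specific one; the function name and all three numbers are assumptions picked for illustration.

```python
def seconds_until_throttled(bucket_credits: float,
                            baseline_iops: float,
                            demand_iops: float) -> float:
    """Time until a burst bucket is empty under steady demand.

    Credits are spent at demand_iops and refilled at baseline_iops.
    Returns float('inf') if demand never exceeds the baseline.
    """
    drain_rate = demand_iops - baseline_iops
    if drain_rate <= 0:
        return float("inf")
    return bucket_credits / drain_rate


# All three numbers below are made up for illustration.
bucket = 1_000_000   # I/O credits available for bursting
baseline = 200       # IOPS the disk sustains once the bucket is empty
demand = 2_000       # IOPS the workload actually wants

t = seconds_until_throttled(bucket, baseline, demand)
print(f"burst lasts about {t / 60:.0f} minutes, then the disk is capped at {baseline} IOPS")
```

Once the bucket is empty, every request above the baseline has to queue, which is the straw.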

This is excellent, since it exposed a convoy situation in some cases, and also gave us a really valuable lesson about things we should look at when we are investigating issues (the whole point of doing this “setup by a monkey” exercise).

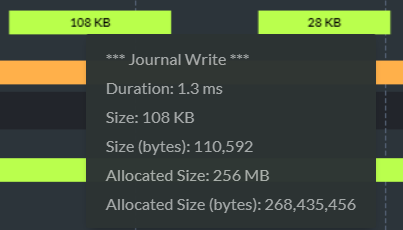

For the record, here is what this looks like when you do things properly:

Why does this matter, by the way?

A journal write is how RavenDB writes to the transaction journal. This is absolutely critical to ensuring that the transaction ACID properties are kept.

It also means that when we write, we must wait for the disk to OK the write before we consider it completed. And that means that there were requests somewhere that were sitting there waiting for 12 seconds for a reply because the IOPS ran out.
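If you want to see what "wait for the disk to OK the write" costs on your own machine, the general pattern is write, then flush, then time the whole thing. The sketch below is not RavenDB's journal code, just the plain write-then-fsync pattern in Python; the function and file names are invented for the example.

```python
import os
import tempfile
import time


def timed_durable_write(path: str, data: bytes) -> float:
    """Append data to path and fsync it; return elapsed seconds.

    The write only counts as done once fsync returns, which is
    where a starved disk makes the caller wait.
    """
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o644)
    try:
        start = time.perf_counter()
        view = memoryview(data)
        while view:  # os.write may write less than requested
            written = os.write(fd, view)
            view = view[written:]
        os.fsync(fd)  # block until the OS reports the data is on disk
        return time.perf_counter() - start
    finally:
        os.close(fd)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        journal = os.path.join(tmp, "journal.bin")  # hypothetical file name
        elapsed = timed_durable_write(journal, b"x" * 76 * 1024)
        print(f"durable 76 KB write took {elapsed * 1000:.2f} ms")
```

On a healthy disk this should come back in milliseconds. Any request that needs the write to be durable cannot reply before that call returns.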

Comments

How does the IOPS number translate to actual disk performance? I never knew if 500 IOPS is a lot or too slow for any practical purposes and, for example, Azure gives me no idea what their IOPS means and what it's comparable to. Is it the same as other providers' IOPS? I mean, is it some standard unit of something? How many IOPS does a cheap SSD or laptop HDD provide?

Rafal, basically, no one tells you exactly what it means on the cloud. You can get IOPS from benchmarking tools. See a good example here: http://www.thessdreview.com/featured/ssd-throughput-latency-iopsexplained/

An NVMe drive should give you hundreds of thousands of IOPS. However, note that this assumes a high degree of parallelism. Another important metric, for sequential work, is the _latency_.
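The link between parallelism, latency and IOPS can be approximated with Little's law: the IOPS ceiling is roughly the number of requests in flight divided by the latency of each one. A minimal sketch, with an invented function name and illustrative numbers that are not measurements of any real device:

```python
def iops_from_latency(latency_ms: float, queue_depth: int = 1) -> float:
    """Rough IOPS ceiling: outstanding requests divided by per-request latency."""
    if latency_ms <= 0 or queue_depth < 1:
        raise ValueError("latency must be positive and queue depth at least 1")
    return queue_depth / (latency_ms / 1000.0)


# Illustrative numbers only: the same 0.1 ms latency at two queue depths.
for depth in (1, 32):
    print(f"queue depth {depth}: ~{iops_from_latency(0.1, depth):,.0f} IOPS")
```

A strictly sequential writer sits at queue depth 1, so the per-request latency is what it actually feels, no matter how large the headline IOPS number is.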
