RavenDB 4.0 Unsung Heroes: The indexing threads

A major goal in RavenDB 4.0 is to eliminate as much complexity as possible from the codebase. One of the ways we did that was to simplify thread management. In RavenDB 3.0 we used the .NET thread pool, and in RavenDB 3.5 we implemented our own thread pool to optimize indexing based on our understanding of how indexes are used. This works, is quite fast, and handles things nicely as long as everything works. When things stop working, it is a whole different story.

A slow index can impact the entire system, for example, so we had to write code to handle that. Noisy indexing neighbors can hurt overall indexing performance, and tracking costs when the indexing work is interleaved is anything but trivial. And all the indexing code must be thread safe, of course.

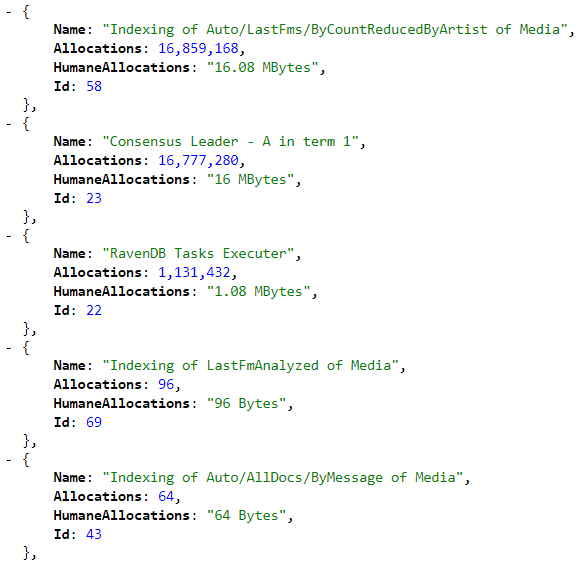

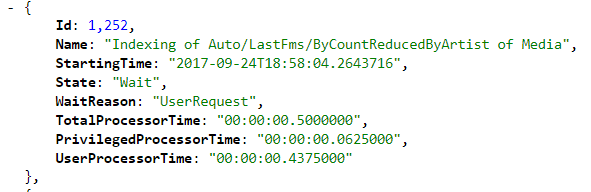

Because of that, we decided to dramatically simplify our lives. An index is going to use a single dedicated thread, always. That means that each index gets its own thread and is only able to interfere with its own work. It also means that we can have much better tracking of what is going on in the system. Here are some stats from the live system.

And here is another:

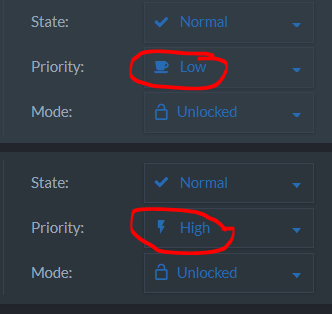

What this means is that we have a fantastically detailed view of what each index is doing, in terms of CPU, memory and even I/O utilization if needed. We can also now define fine grained priorities for each index:

The indexing code itself can now assume that it is single threaded, which removes a lot of complications and in general makes things easier to follow.
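To make the idea concrete, here is a minimal sketch in Python of what "one dedicated thread per index" can look like. This is not RavenDB's code (RavenDB is written in C#); the `IndexWorker` class, its methods and the toy term-splitting "index" are all made up for illustration. It assumes a platform where `time.thread_time()` is available, which reports CPU time for the calling thread only. The point is that the index's state is touched by exactly one thread, so it needs no locks, and the CPU cost can be attributed to that index directly.

```python
import queue
import threading
import time


class IndexWorker:
    """Hypothetical index that does all of its work on one dedicated thread."""

    _STOP = object()  # sentinel telling the worker thread to exit

    def __init__(self, name):
        self.name = name
        self.entries = {}        # touched only by the worker thread, so no lock
        self.cpu_seconds = 0.0   # CPU time consumed by this index's thread
        self.batches = 0
        self._inbox = queue.Queue()
        self._thread = threading.Thread(
            target=self._run, name=f"index-{name}", daemon=True
        )
        self._thread.start()

    def submit(self, batch):
        """Hand a batch of (doc_id, text) pairs to this index's thread."""
        self._inbox.put(batch)

    def close(self):
        """Ask the worker to finish queued batches, then wait for it to exit."""
        self._inbox.put(self._STOP)
        self._thread.join()

    def _run(self):
        while True:
            batch = self._inbox.get()
            if batch is self._STOP:
                break
            started = time.thread_time()  # CPU time of this thread only
            for doc_id, text in batch:
                for term in text.lower().split():
                    self.entries.setdefault(term, set()).add(doc_id)
            self.cpu_seconds += time.thread_time() - started
            self.batches += 1


if __name__ == "__main__":
    users = IndexWorker("users-by-name")
    orders = IndexWorker("orders-by-product")
    users.submit([("users/1", "Oren Eini"), ("users/2", "Ian")])
    orders.submit([("orders/1", "keyboard mouse")])
    for index in (users, orders):
        index.close()
        print(index.name, index.batches, index.cpu_seconds)
```

A slow `orders-by-product` batch in this sketch only delays its own queue. `users-by-name` keeps running on its own thread, and each index reports its own CPU time without any need to untangle interleaved work.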

There is the worry that a user might want to run 100 indexes per database and 100 databases on the same server, resulting in thousands of indexing threads. But given that this is not a recommended configuration, and given that we tested it and it works (not ideal and not fun, but works), I’m fine with this, especially given the alternative that we have today: all these indexes fighting over the same limited number of threads and stalling indexing globally.

The end result is that a thread per index allows us to have fine grained control over indexing priorities and to account for memory and CPU costs, as well as simplify the code and improve overall performance significantly. A win all around, in my book.

More posts in "RavenDB 4.0 Unsung Heroes" series:

- (30 Oct 2017) automatic conflict resolution

- (05 Oct 2017) The design of the security error flow

- (03 Oct 2017) The indexing threads

- (02 Oct 2017) Indexing related data

- (29 Sep 2017) Map/reduce

- (22 Sep 2017) Field compression

Comments

Hi Oren, sounds good.

Given the constraints of this, what would be a rough rule of thumb for the number of tenants on a single server, assuming 100 indexes in each database? We are not far from that, and although we may be able to consolidate some of them it would be 75-80 minimum per tenant (almost all Map Only indexes). So then should we be looking to split onto a separate server when we hit 20 tenants? 30? I imagine the answer is 'it depends' but I'm just checking.

Hi Ian, The key here is how many of them are going to be changing? If you have a lot of indexes on the same collections, I would suggest merging them. If you have a lot of collections and each served by one index, that is great. Then the amount of work done will be comparable to the number of changes per collection.

That said, on my current machine I have 3K threads right now, with Chrome & VS opened, so I wouldn't be afraid of having a few of those. But if you are talking about 80 or so indexes per db, then getting to 30 - 50 tenants would be my rule of thumb, based on just basic expectations, without really knowing anything about your environment.

That said, one of the things we did in 4.0 is to make it really easy to run in Docker, which will give you better isolation between the tenants, and likely allow you to divide resources more evenly.

Ok, thanks for your thoughts on this. Most of these indexes are not doing much at all a lot of the time.

Raven Studio kindly tells me that we have: 95 indexes for 79 collections (4 MapReduce). I think we can get that number down to 85 ish.

Ian, The easiest way to test that is to export the dbs and import them in 4.0. That would give you a good indication of the implications. I expect you'll see significant perf benefits.
